nanosaur

🦕 Nanosaur is an open-source project designed and made by Raffaello Bonghi.

Nanosaur is a simple open-source robot based on NVIDIA Jetson. The robot is fully 3D printable, can wander on your desk autonomously, and uses a simple camera and two OLEDs that act as a pair of eyes. It measures only 10x12x6cm and weighs only 500g. Nanosaur is designed and made to run on ROS2.

Make & Install

🦕 Nanosaur is simple and needs little time before it is wandering on your desk. If you are building Nanosaur from scratch, you need to follow this guide in order, starting from

If you are missing some parts or you need help, you can join the official nanosaur Discord community.

Architecture

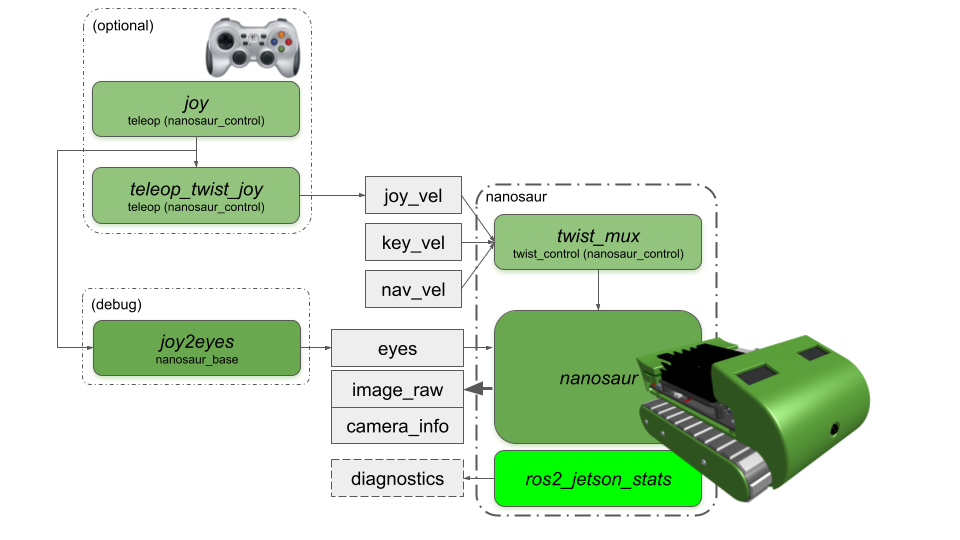

When you switch on your nanosaur, the ROS2 communication can be summarized as in the picture below.

The optional joystick node appears only if you plug a joystick into the NVIDIA Jetson.

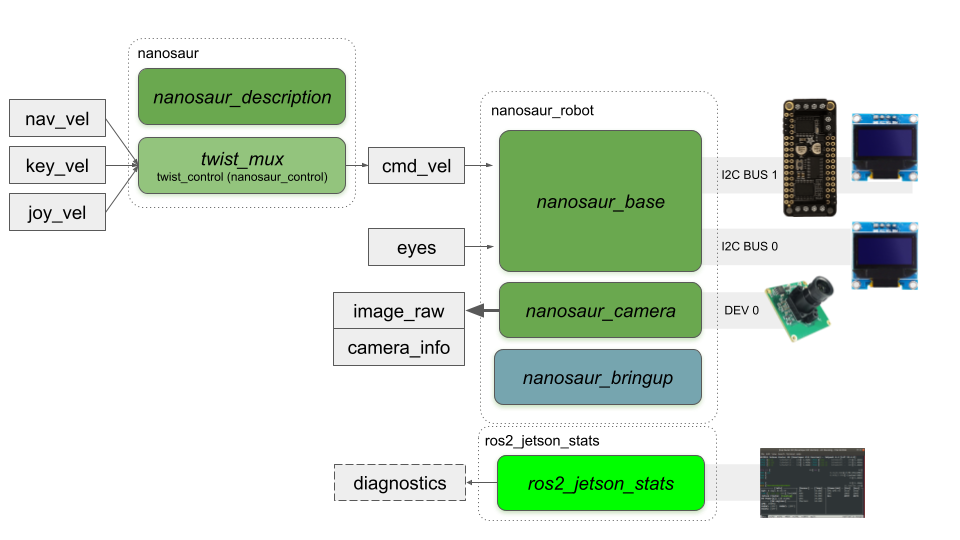

Nodes

Nanosaur has several nodes to drive the robot and show its status. The nodes are listed below, arranged by package.

nanosaur_base

nanosaur_base enables the motor controller and the displays.

joy2eyes converts a joystick message to an eyes topic. This node is useful when you want to test the eyes topic.
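The joystick-to-eyes conversion can be sketched in a few lines of Python. This is only an illustration of the idea, not the code of the joy2eyes node: the `EyesCommand` type, the axis indices and the `scale` value are all assumptions made for the example, and the real message definition may differ.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class EyesCommand:
    """Hypothetical stand-in for the message published on the eyes topic."""
    x: float
    y: float


def joy_to_eyes(axes: Sequence[float], x_axis: int = 0, y_axis: int = 1,
                scale: float = 100.0) -> EyesCommand:
    """Map two joystick axes in [-1, 1] to an eye position in [-scale, scale].

    The axis indices and the scale are assumed values, chosen for illustration.
    """
    def clamp(value: float) -> float:
        # Keep out-of-range joystick readings inside [-1, 1].
        return max(-1.0, min(1.0, value))

    return EyesCommand(x=clamp(axes[x_axis]) * scale,
                       y=clamp(axes[y_axis]) * scale)
```

In a ROS2 node, a function like this would be called from the joystick subscription callback and its result published on the eyes topic.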

nanosaur_camera

nanosaur_camera runs the camera streamer from the MIPI camera to a ROS2 topic.

Below is the usual ROS2 graph when you start up Nanosaur.

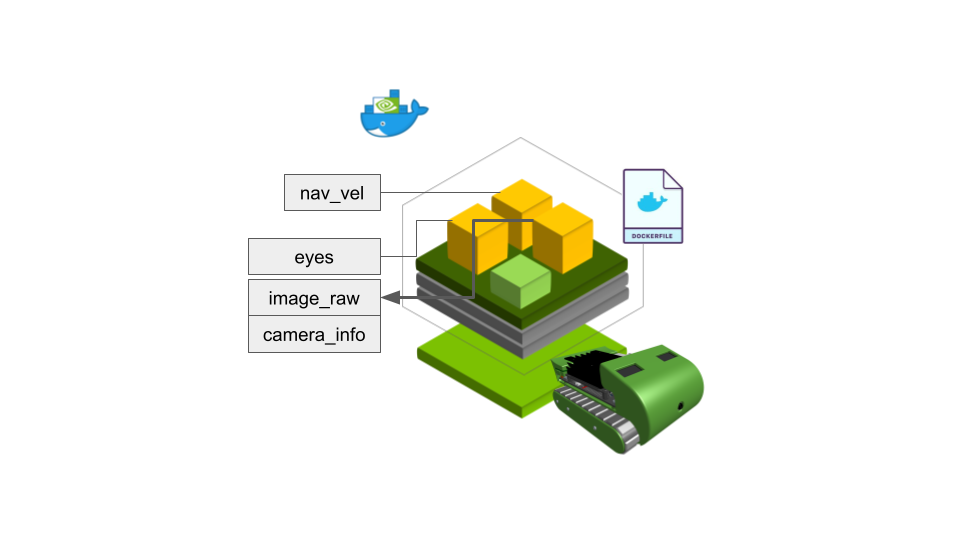

Docker

Nanosaur is released on top of the NVIDIA Jetson ROS Foxy Docker image. There is also support for ROS2 Galactic, ROS2 Eloquent, ROS Melodic and ROS Noetic, with AI frameworks such as PyTorch, NVIDIA TensorRT and DeepStream SDK.

ROS2 Foxy is compiled and used in "nanosaur_camera" together with jetson-utils to speed up camera access.

When Nanosaur is running, a set of topics is available, such as the image_raw topic, the eyes topic to move the eyes drawn on the displays, and the navigation command to drive the robot.
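To give an idea of how a navigation command can become motor motion, here is a minimal sketch that assumes a differential-drive layout (two independently driven sides). The function name, the wheel separation and the wheel radius are hypothetical values picked for the example and are not taken from the nanosaur source or hardware.

```python
from typing import Tuple


def twist_to_wheel_speeds(linear: float, angular: float,
                          wheel_separation: float = 0.1,
                          wheel_radius: float = 0.015) -> Tuple[float, float]:
    """Convert a velocity command into (left, right) wheel speeds in rad/s.

    linear is in m/s and angular in rad/s. The default geometry values
    are assumptions for illustration only.
    """
    # Each side moves at the body speed, minus or plus the turning contribution.
    v_left = linear - angular * wheel_separation / 2.0
    v_right = linear + angular * wheel_separation / 2.0
    # Convert linear speed at the wheel rim to angular wheel speed.
    return v_left / wheel_radius, v_right / wheel_radius
```

A pure forward command gives equal speeds on both sides, while a pure rotation gives equal and opposite speeds.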

License

🦕 The Nanosaur open-source project is released under the following licenses:

Design and project - Attribution-NonCommercial-ShareAlike 4.0 International (CC BY-NC-SA 4.0)

All PCB boards - Attribution-NonCommercial-ShareAlike 4.0 International (CC BY-NC-SA 4.0)

Website - Attribution-NonCommercial-ShareAlike 4.0 International (CC BY-NC-SA 4.0)

Source Code - MIT License

Reference

🦕 Website: nanosaur.ai

🦄 Do you need help? Discord

🧰 For technical details, follow the wiki

🐳 Nanosaur Docker hub

⁉️ Something wrong? Open an issue

🍕 nanosaur is part of pizzarobotics.org