THE canonical_scan_matcher PACKAGE IS DISCONTINUED

the package has been renamed to laser_scan_matcher and has been updated with additional features.

The information below be preserved for a while while people switch.

Details

The canonical_scan_matcher package is a wrapper around Andrea Censi's Canonical Scan Matcher [1] implementation. The package allows to scan match between consecutive sensor_msgs/LaserScan messages, and publish the estimated position of the laser as a geometry_msgs/Pose2D or a tf transform.

The package is intended to be used without any odometry estimation provided by other sensors. Thus, it can serve as a stand-alone odometry estimator.

An estimation for theta can optionally be provided to improve accuracy, in the form of a sensor_msgs/Imu. This message would typically be published by an IMU or other angular rate sensor.

Alternatively, an estimation for x, y, and theta can optionally be provided to improve accuracy, in the form of a tf transform. This transform would typically be published by an odometry system. This has not yet been tested.

Example

You can run the canonical_scan_matcher on a pre-recorded bag file that comes with the package. First, make sure you have the scan_tools stack downloaded and installed by following the instructions here.

Next, make sure you have the necessary tools installed:

1 rosmake rviz rosbag tf

Finally, run the demo:

1 roslaunch canonical_scan_matcher demo.launch

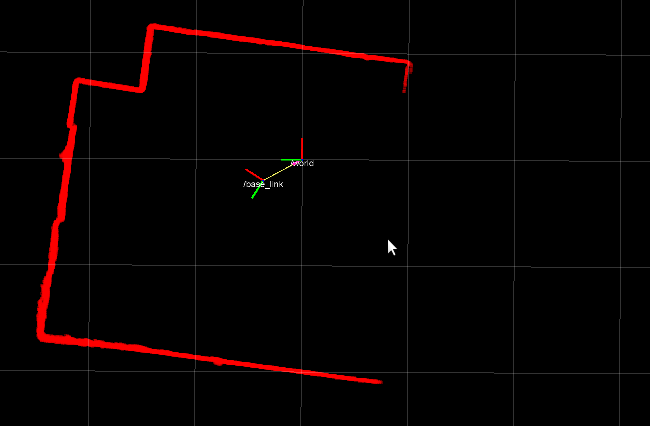

You should see a result similar to the video below. The video shows CSM tracking the position of a Hokuyo laser as it is being carried freely around a room. The pose is determined entirely by the scan matcher - no additional odometry is provided.

Nodes

csm_node

The csm_node takes in sensor_msgs/LaserScan messages, performs scan registration, and outputs an estimate for the displacement of the robot.Subscribed Topics

scan (sensor_msgs/LaserScan)- Scans from a laser range-finder

- Imu messages, used for theta estimation. Only used if odometry_type is set to imu.

Published Topics

pose2D (geometry_msgs/Pose2D)- The pose of the base frame, in some fixed (world) frame.

Parameters

~world_frame (string, default: "world")- the fixed frame

- the base frame of the robot

- whether to publish scan matcher's estimation for the position of the base frame in the world frame as a transform. Disable this if some other node is already publishing an odometric estimation.

- whether to publish scan matcher's estimation for the position of the base frame in the world frame as a geometry_msgs/Pose2D

- If "none", the scan matcher will not use any estimate for the displacement between scans. If "imu", the matcher will use as an estimate the yaw value of sensor_msgs/Imu messages published on a topic called "imu". If "tf", the matcher will use as an estimate the x, y, and yaw value of a tf transform between the world and base frames.

- Maximum angular displacement between scans, in degrees

- Maximum translation between scans (m)

- Maximum ICP cycle iterations

- A threshold for stopping (m)

- A threshold for stopping (rad)

- Maximum distance for a correspondence to be valid

- Noise in the scan (m) (Not sure if changing this has any effect in the current implementation)

- If 1, use smart tricks for finding correspondences (see paper).

- Restart: If 1, restart if error is over threshold

- Restart: Threshold for restarting

- Restart: displacement for restarting. (m)

- Restart: displacement for restarting. (rad)

- Max distance for staying in the same clustering

- Number of neighbour rays used to estimate the orientation

- If 0, it's vanilla ICP

- If 1, discard correspondences based on the angles

- Discard correspondences based on the angles - threshold angle, in degrees

- Percentage of correspondences to consider: if 0.90, always discard the top 10% of correspondences with more error

- Parameters describing a simple adaptive algorithm for discarding. 1) Order the errors. 2) Choose the percentile according to outliers_adaptive_order (if it is 0.7, get the 70% percentile). 3) Define an adaptive threshold multiplying outliers_adaptive_mult with the value of the error at the chosen percentile. 4) Discard correspondences over the threshold. This is useful to be conservative; yet remove the biggest errors.

- Parameters describing a simple adaptive algorithm for discarding. 1) Order the errors. 2) Choose the percentile according to outliers_adaptive_order (if it is 0.7, get the 70% percentile). 3) Define an adaptive threshold multiplying outliers_adaptive_mult with the value of the error at the chosen percentile. 4) Discard correspondences over the threshold. This is useful to be conservative; yet remove the biggest errors.

- If you already have a guess of the solution, you can compute the polar angle of the points of one scan in the new position. If the polar angle is not a monotone function of the readings index, it means that the surface is not visible in the next position. If it is not visible, then we don't use it for matching.

- If 1, no two points in laser_sens can have the same correspondence

- If 1, computes the covariance of ICP using the method http://purl.org/censi/2006/icpcov (Changing this has no effect in the current implementation)

- If 1, checks that find_correspondences_tricks gives the right answer

- If 1, the field 'true_alpha' (or 'alpha') in the first scan is used to compute the incidence beta, and the factor (1/cos^2(beta)) used to weight the correspondence. (Changing this has no effect in the current implementation)

- If 1, the field 'readings_sigma' in the second scan is used to weight the correspondence by 1/sigma^2 (Not sure if changing this has any effect in the current implementation)

Required tf Transforms

base_link → laser- the pose of the laser in the base frame. Only needed when use_odometry is enabled.

Provided tf Transforms

world → base_link- the pose of the robot base in the world frame. Only provided when publish_tf is enabled.

References

[1] A. Censi, "An ICP variant using a point-to-line metric" Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2008

Bug Reports & Feature Requests

We appreciate the time and effort spent submitting bug reports.

Please use our Trac to report bugs or request features.