Overview

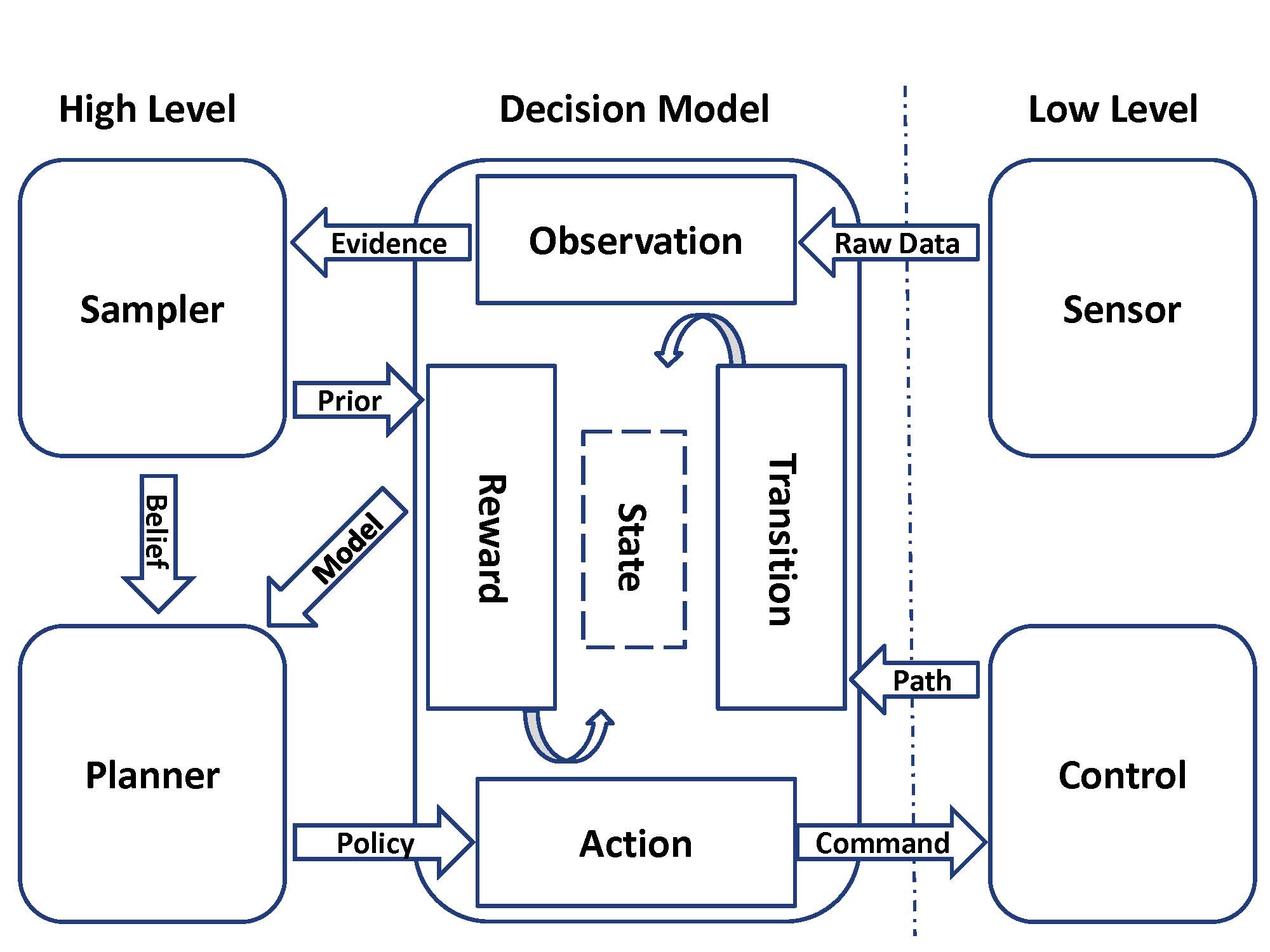

The find_object stack is a set of packages for planning, execution, and perception, aimed at searching for specific objects in a cluttered environment. Personal mobile robots such as the PR2 often carry many sensors (stereo cameras, laser range finders, etc.). The motivating goal is to combine information from these sensors and to actively plan when to sense and when to act. The system consists of:

An action manager for moving the base, together with perceptual actions that detect objects with varying accuracy and field of view. More precisely, it implements the narrow sense, wide sense, object_manipulator, and move actions using existing ROS packages. Currently, these actions have been tested only on the PR2 robot.

A planner that chooses among the navigation, manipulation, and perception actions at each step. The planner maintains a belief state that captures the robot's current knowledge of the environment and finds the most useful sensing action in the belief space. This makes it possible to weigh the trade-offs among different sensing, navigation, and manipulation actions. The planner is efficient enough to run online on the PR2.

A simple executive that calls the planner and commands the actions.
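To make the interplay of the three components concrete, the following is a minimal Python sketch and not the stack's actual code. It assumes a discrete belief over candidate cells, a simple detection model per sensing action, and a greedy selection rule (expected entropy reduction minus a cost). The names `SenseAction`, `update`, `expected_gain`, `choose_action`, and `run_executive`, as well as all probabilities and costs, are hypothetical and chosen only for illustration; the real planner may use a different belief representation and search strategy.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SenseAction:
    """Hypothetical sensing action: covers some cells with a given accuracy."""
    name: str
    cells: frozenset   # indices of the cells inside the field of view
    p_hit: float       # assumed P(detection | object is in a covered cell)
    p_false: float     # assumed P(detection | object is elsewhere)
    cost: float        # assumed action cost, in the same units as entropy (bits)


def entropy(belief):
    """Shannon entropy (bits) of a discrete belief over cells."""
    return -sum(p * math.log2(p) for p in belief if p > 0.0)


def update(belief, action, detected):
    """Bayes update of the belief after executing a sensing action."""
    posterior = []
    for i, prior in enumerate(belief):
        p_detect = action.p_hit if i in action.cells else action.p_false
        likelihood = p_detect if detected else 1.0 - p_detect
        posterior.append(likelihood * prior)
    total = sum(posterior)
    return [p / total for p in posterior]


def expected_gain(belief, action):
    """Expected entropy reduction from executing the action once."""
    p_in = sum(belief[i] for i in action.cells)
    p_detect = action.p_hit * p_in + action.p_false * (1.0 - p_in)
    expected_after = 0.0
    for detected, p_obs in ((True, p_detect), (False, 1.0 - p_detect)):
        if p_obs > 0.0:
            expected_after += p_obs * entropy(update(belief, action, detected))
    return entropy(belief) - expected_after


def choose_action(belief, actions):
    """Planner step: greedily pick the action with the best gain minus cost."""
    return max(actions, key=lambda a: expected_gain(belief, a) - a.cost)


def run_executive(belief, actions, execute, threshold=0.9, max_steps=20):
    """Executive loop: plan, act, update, until one cell is likely enough."""
    for _ in range(max_steps):
        if max(belief) >= threshold:
            break
        action = choose_action(belief, actions)
        detected = execute(action)  # stand-in for the action manager
        belief = update(belief, action, detected)
    return belief


# Illustrative setup: four cells, one narrow action per cell, two wide actions.
narrow = [SenseAction(f"narrow_{i}", frozenset({i}), 0.9, 0.05, 0.10)
          for i in range(4)]
wide = [SenseAction("wide_left", frozenset({0, 1}), 0.7, 0.1, 0.05),
        SenseAction("wide_right", frozenset({2, 3}), 0.7, 0.1, 0.05)]
true_cell = 2  # ground truth, known only to the simulated sensor below
final_belief = run_executive([0.25] * 4, narrow + wide,
                             execute=lambda a: true_cell in a.cells)
print(final_belief)
```

In this sketch the narrow actions are more accurate but cover a single cell, while the wide actions cover two cells with lower accuracy and lower cost, which mirrors the accuracy versus field-of-view trade-off described above. Navigation and manipulation actions are left out; they could be added as further candidates with their own costs and effects on the belief.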

The basic framework of the system is shown below:

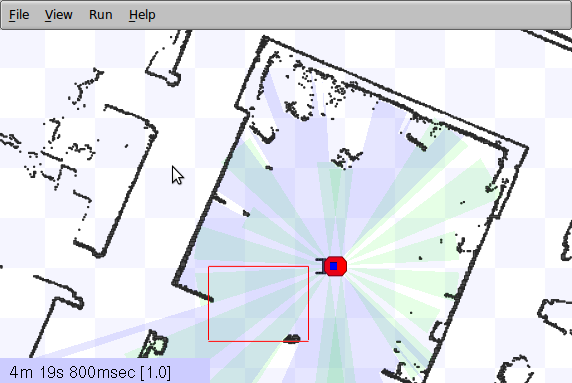

The stack also includes an extension to the Stage simulator that exposes additional features, such as a gripper.

For more information, see the tutorial or the individual package pages.
