| |

Learning Kinematic Models for Articulated Objects using a Webcam

Description: This tutorial is a step-by-step guide that instructs users how to learn kinematic models of articulated objects only by using a webcam and a laptop.Keywords: articulation models, kinematic models, model fitting, structure selection, demo

Tutorial Level:

Contents

Introduction

Articulation models describe the kinematic trajectories of articulated objects, like doors, drawers, and other mechanisms. Such models can be learned online and in real-time, while a robot is pulling a door open. The articulation stack in the ALUFR-ROS-PKG repository provides several functions for fitting, selecting, saving and loading articulation models. This tutorial is a step-by-step guide that instructs users how to learn kinematic models of articulated objects only by using a webcam and a laptop.

Overview

The general idea of this demo is as follows

- The goal is to learn the kinematic model of an articulated object, for example a door, a drawer, a box, or anything else you want to use.

- By attaching checkerboard markers to the object, we can easily observe the 3D pose of the individual parts of the object.

- We use a low-cost webcam to grab continuously images of an articulated object being moved by a human.

- The checkerboard detector will subscribe to the camera images and publish pose messages of the detected parts

- A simple Python will collect the poses of the articulated object, and call a service for estimating the kinematic model and the kinematic structure of the object.

Download and Compile

For this tutorial, we need the articulation_tutorials package and its dependencies. We assume that you have already installed the basic components of ROS. You can check out the ALUFR-ROS-PKG repository at Google Code, by typing:

cd ~/ros svn co https://alufr-ros-pkg.googlecode.com/svn/trunk alufr-ros-pkg

For compilation, type

rosmake articulation_tutorials

which should build (next to many other things) the articulation_models and the articulation_structure package.

Change into the directory, by typing

roscd articulation_tutorials/webcam_demo

Attaching markers to your articulated object

In the articulation_tutorials/webcam_demo, you find various pdf files containing checkerboard markers in various sizes and dimensions. Choose one and print it. Then cut the checkerboard markers using scissors. Note that the detector needs at least a small white border around the checkerboard, i.e., don't cut too close to the pattern. Finally, attach these markers to your object.

markers-0.5cm-4x5-5x6-4x6-5x7.pdf

markers-1cm-4x5-5x6-4x6-5x7.pdf

markers-1cm-8x6-9x6-7x6-9x7.pdf

markers-2cm-4x5-5x6-4x6-5x7.pdf

markers-2cm-8x6-9x6-7x6-9x7.pdf

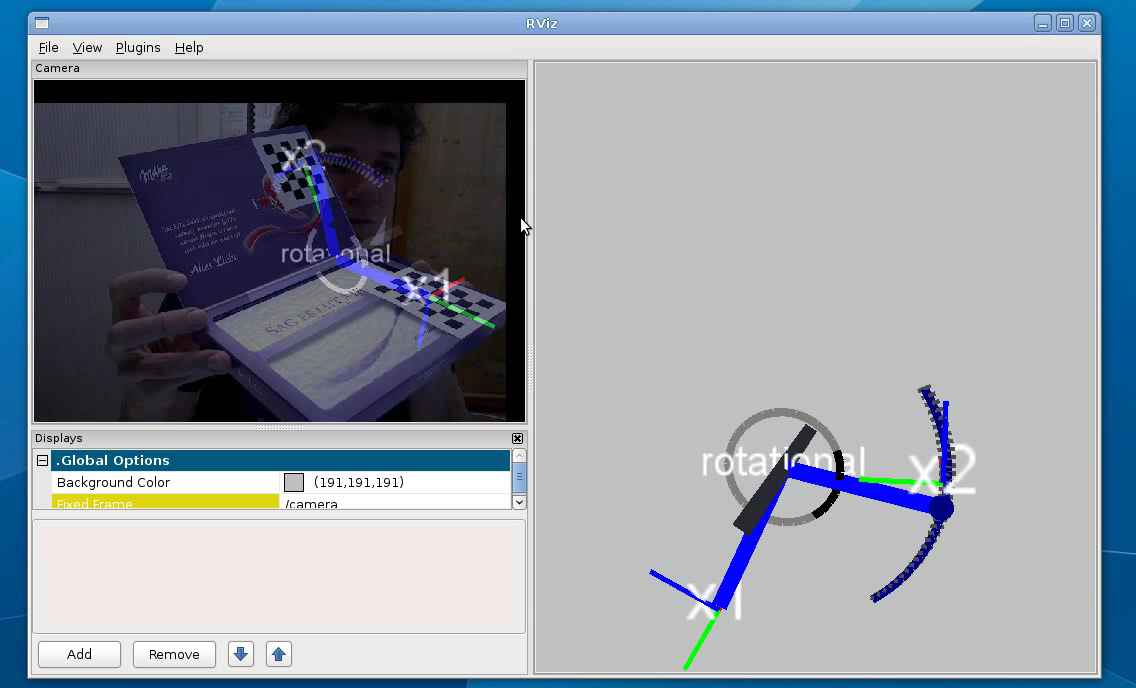

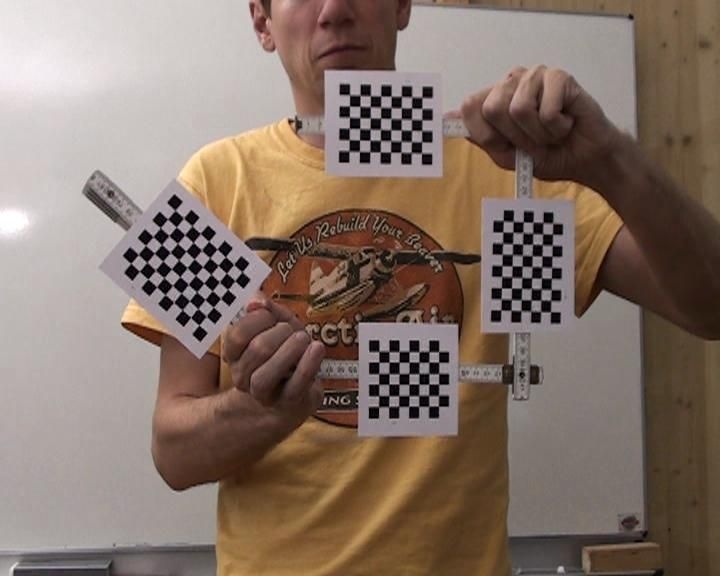

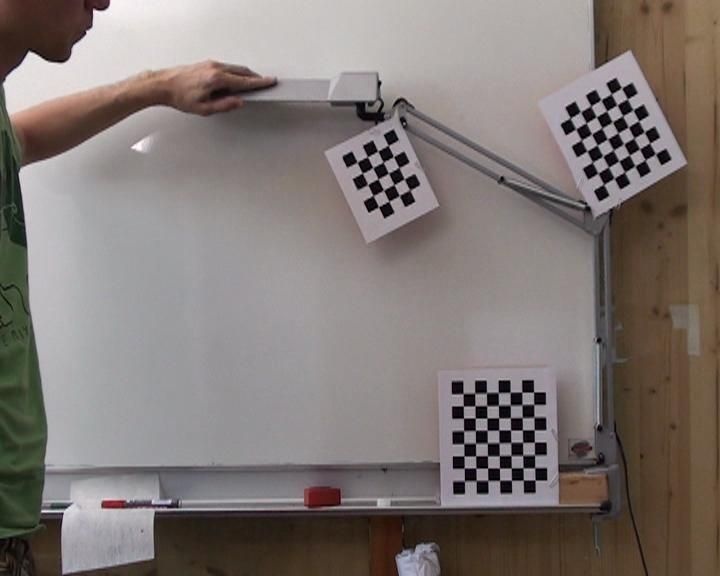

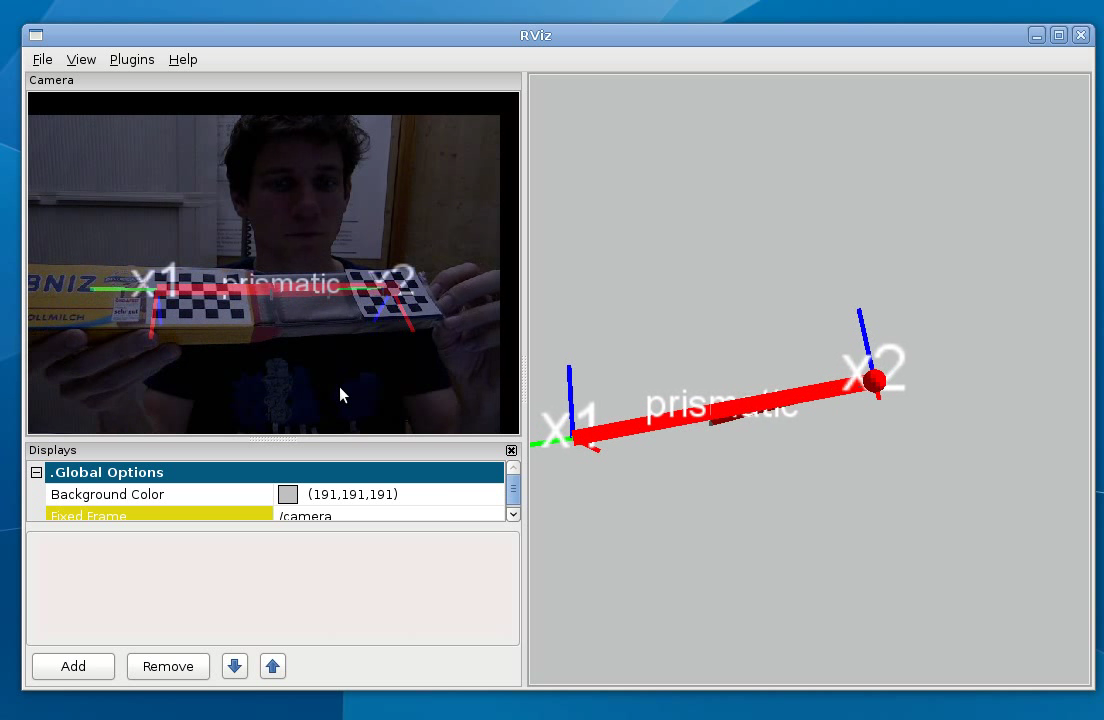

Finally, your articulated object might look like one of these:

The demonstration launch file

The articulation_tutorials/webcam_demo folder contains several launch files. Each of the launch file

brings up the webcam (uvc_cam2/sender)

starts the image processor (image_proc/image_proc)

launches a Python script to visualize the detected checkerboards (checkerboard_detector2/pose_visualizer.py)

brings up the checkerboard detector (checkerboad_detector2/checkerboard_detector2)

starts a small Pyhton script that collects the detected poses (articulation_structure/articulation_collector.py), and calls the model learning service

starts the process providing the model learning service (articulation_structure/structure_learner_srv)

- .. and starts RVIZ, in a configuration where it shows the image and the learned kinematic model

As a starting point, have a look at the webcam_demo-1cm-4x6-4x5.launch file. It starts all required nodes, sets up our calibrated Logitech camera, and configures the marker detector with two markers.

<launch>

<node name="uvc_cam" pkg="uvc_cam2" type="sender" output="log">

<param name="D" type="string" value="-0.0834232 0.120545 -0.0023918 0.0175383 0 "/>

<param name="K" type="string" value="578.252 0 350.204 0 575.115 207.606 0 0 1 "/>

<param name="R" type="string" value="1 0 0 0 1 0 0 0 1 "/>

<param name="P" type="string" value="578.252 0 350.204 0 0 575.115 207.606 0 0 0 1 0 "/>

<param name="device" type="string" value="/dev/video0"/>

<param name="width" type="int" value="640"/>

<param name="height" type="int" value="480"/>

<param name="fps" type="int" value="5"/>

</node>

<group ns="camera" clear_params="true">

<node name="image_proc" pkg="image_proc" type="image_proc" output="log"/>

<node name="pose_visualizer" pkg="checkerboard_detector2" type="pose_visualizer.py" output="screen"/>

<node pkg="checkerboard_detector2" type="checkerboard_detector2" respawn="false"

output="log" name="checkerboard_detector" machine="colorrado">

<param name="display" type="int" value="0"/>

<param name="rect0_size_x" type="double" value="0.01"/>

<param name="rect0_size_y" type="double" value="0.01"/>

<param name="grid0_size_x" type="int" value="4"/>

<param name="grid0_size_y" type="int" value="6"/>

<param name="rect1_size_x" type="double" value="0.01"/>

<param name="rect1_size_y" type="double" value="0.01"/>

<param name="grid1_size_x" type="int" value="4"/>

<param name="grid1_size_y" type="int" value="5"/>

</node>

</group>

<node name="articulation_collector" pkg="articulation_structure" type="articulation_collector.py" output="screen">

<param name="sigma_position" value="0.01"/>

<param name="sigma_orientation" value="0.1"/>

<param name="samples" value="50"/>

</node>

<node name="structure_learner" pkg="articulation_structure" type="structure_learner_srv" output="screen" machine="colorrado">

<param name="filter_models" value="rigid prismatic rotational"/>

</node>

<node pkg="rviz" type="rviz" output="screen" name="rviz" args="-d $(find articulation_tutorials)/webcam_demo/webcam_demo.vcg" />

</launch>In the following, we will briefly explain how you can modify the launch file to suit your needs.

Setting up your Webcam

The launch files in this tutorial assume that you are using a Logitech Webcam connected via USB and registered as /dev/video0. You can change the calibration info, the image resolution and framerate by adapting the values in the launch file.

<node name="uvc_cam" pkg="uvc_cam2" type="sender" output="log">

<param name="D" type="string" value="-0.0834232 0.120545 -0.0023918 0.0175383 0 "/>

<param name="K" type="string" value="578.252 0 350.204 0 575.115 207.606 0 0 1 "/>

<param name="R" type="string" value="1 0 0 0 1 0 0 0 1 "/>

<param name="P" type="string" value="578.252 0 350.204 0 0 575.115 207.606 0 0 0 1 0 "/>

<param name="device" type="string" value="/dev/video0"/>

<param name="width" type="int" value="640"/>

<param name="height" type="int" value="480"/>

<param name="fps" type="int" value="5"/>

</node>Alternatively, you can also use a completely different image source, but you will then need to modify the launch file accordingly. Make sure that your webcam publishes images to

#> rostopic list [..] /camera/camera_info /camera/image_raw [..]

For calibrating your webcam, have a look at the calibration tutorial.

Setting up your Markers

Further, you will have to set up the number of markers, their sizes (in meter) and their dimensions (measured in inner corners of the checkerboard). In principle, the detector supports any number of markers, but regarding speed we recommend that you use only up to four markers simultaneously.

The parameters are as follows:

rectN_size_x specifies the size in x-direction of a single square in meters

rectN_size_y specifies the same in y-direction

gridN_size_x specifies the number of inner corners in x-direction

gridN_size_y specifies the number of inner corners in y-direction

You can add as many rectN_size_x,rectN_size_y,gridN_size_x,gridN_size_y blocks as you wish, for accommodating additional markers.

<node pkg="checkerboard_detector2" type="checkerboard_detector2" respawn="false"

output="log" name="checkerboard_detector">

<param name="display" type="int" value="0"/>

<param name="rect0_size_x" type="double" value="0.01"/>

<param name="rect0_size_y" type="double" value="0.01"/>

<param name="grid0_size_x" type="int" value="4"/>

<param name="grid0_size_y" type="int" value="6"/>

<param name="rect1_size_x" type="double" value="0.01"/>

<param name="rect1_size_y" type="double" value="0.01"/>

<param name="grid1_size_x" type="int" value="4"/>

<param name="grid1_size_y" type="int" value="5"/>

</node>

Setting up the pose collector

The articulation_collector script collects the poses from the checkerboard detector. It keeps only the latest N=50 samples. Further, the articulation collector script requires two noise values.

samples gives the number of samples to keep in the history

sigma_position gives the positional noise assumption, measured in meters

sigma_orientation gives the orientational noise assumption, measured in radians.

<node name="articulation_collector" pkg="articulation_structure" type="articulation_collector.py" output="screen">

<param name="sigma_position" value="0.01"/>

<param name="sigma_orientation" value="0.1"/>

<param name="samples" value="50"/>

</node>

Setting up the kinematic model learner

Finally, the launch files instantiates the node that actually learns the kinematic model. This node fits all possible model classes to every pair of observed markers, and finally selects best kinematic model. This includes both the articulation models, and the kinematic structure (i.e., the connectivity of the individual parts). The node can be configured to use specific model classes only. filter_models is a string containing the active model classes, separated by spaces. It can be:

rigid for fitting rigid links

prismatic for fitting prismatic links (as in drawers)

rotational for fitting rotational links (as in doors, hinges, etc)

pca_gp for fitting a non-parametric joint (for almost anything else, like a garage door, a 2-bar linkage, etc.)

In principle, more models could be added, for example a screw joint with 1-DOF, a spherical joint with 2-DOF, or a free-floating joint with 6-DOF.

<node name="structure_learner" pkg="articulation_structure" type="structure_learner_srv" output="screen">

<param name="filter_models" value="rigid prismatic rotational"/>

</node>

Run the Demo

Finally, launch the file, by typing

roslaunch webcam_demo-1cm-4x6-4x5.launch

Demo videos

articulation-leibniz-cookies.avi

articulation-leibniz-cookies.avi