How to use the recognizer

Description: Infer an actual scene from an unknown scene and show the results (terminal and rviz).

Tutorial Level: BEGINNER

Setup

1. Make sure you have objects as input. For example, start an object detector (asr_fake_object_recognition etc.) and publish the objects on the topic you have chosen with the "objectTopic" parameter in capturing.yaml. For asr_fake_object_recognition, object configurations that match the sample databases we provide can be found in its subfolder /config.

2. Check that the parameters are set to your own topics, or use the default parameters.

3. Adapt the "dbfilename" parameter in sqlitedb.yaml. Set it to the path where your trained database is saved. asr_ism comes with pre-trained databases in the subfolder "scene_recordings", the poses of which were recorded relative to the /map frame.
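Before starting the recognizer, it can help to confirm that the path you put into "dbfilename" really points to an SQLite file. The following is a minimal sketch, not part of asr_ism: the function name is made up for illustration, and the check relies only on the fixed 16-byte header that SQLite 3 database files start with.

```python
import os

# Every non-empty SQLite 3 database file starts with this 16-byte header.
SQLITE_MAGIC = b"SQLite format 3\x00"


def looks_like_sqlite_db(path):
    """Return True if `path` is an existing file that starts with the SQLite 3 header.

    Hypothetical helper for illustration; it does not inspect the tables
    inside the file.
    """
    if not os.path.isfile(path):
        return False
    with open(path, "rb") as f:
        return f.read(len(SQLITE_MAGIC)) == SQLITE_MAGIC
```

Call it with the same value you entered for "dbfilename". A result of False usually means the path is wrong or the file is not a database.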

Tutorial

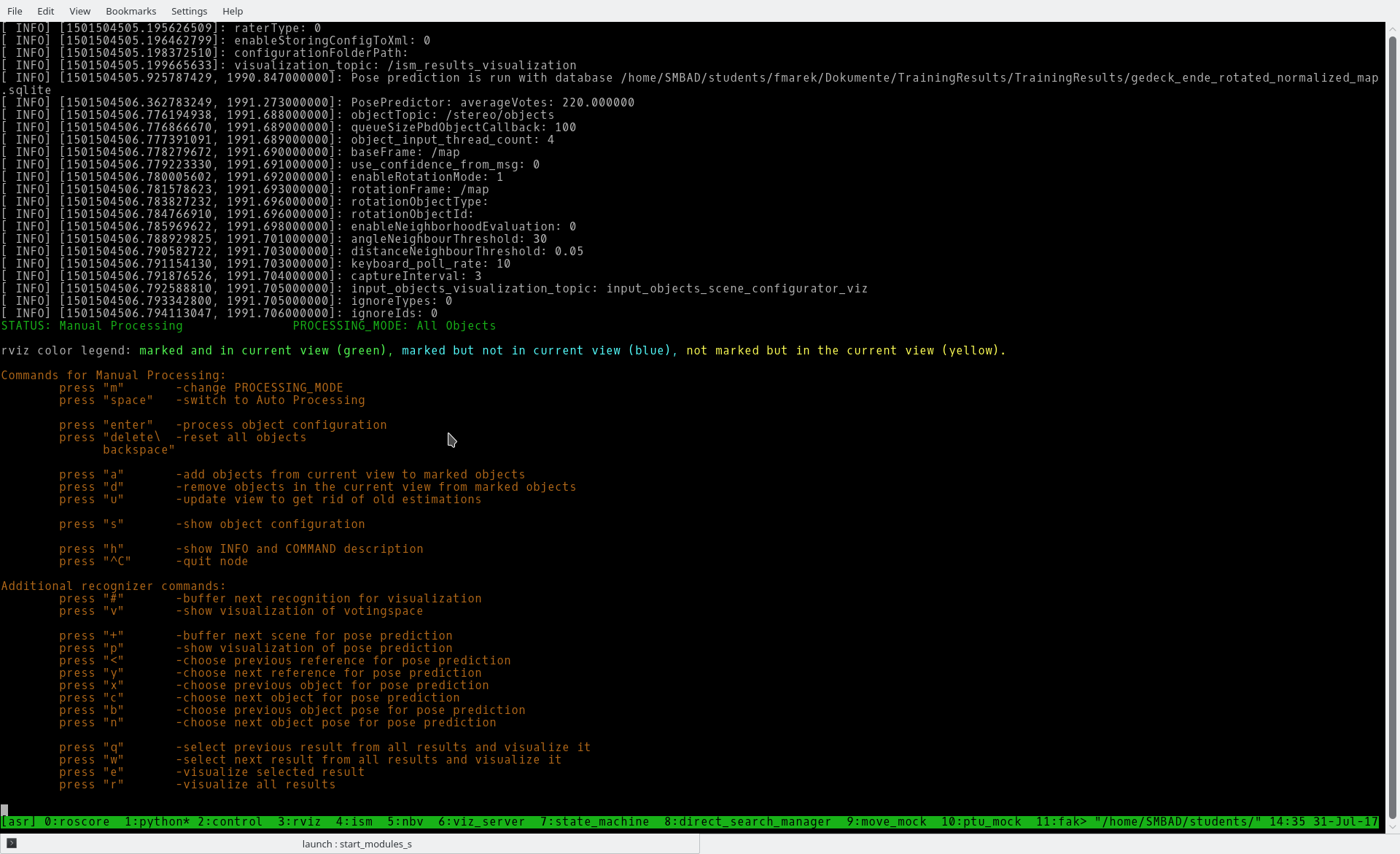

1. Start the recognizer

roslaunch asr_ism recognizer.launch

You will see all commands of the recognizer. Press "h" to show them again.

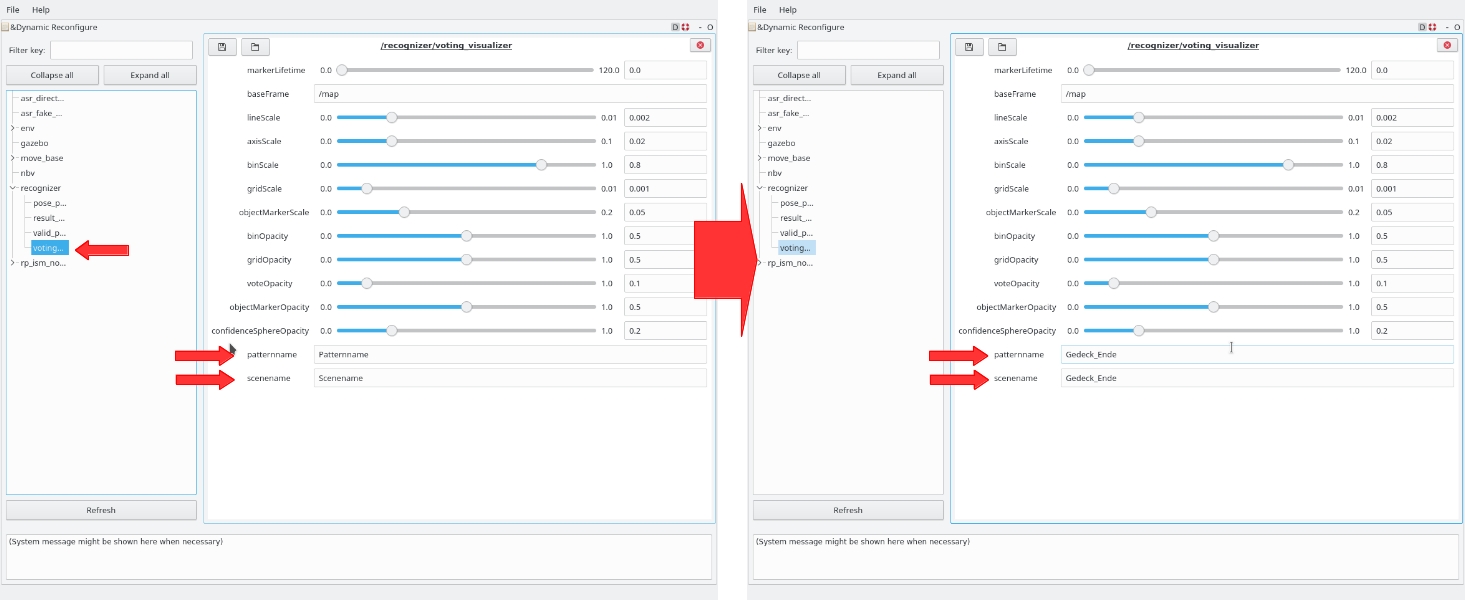

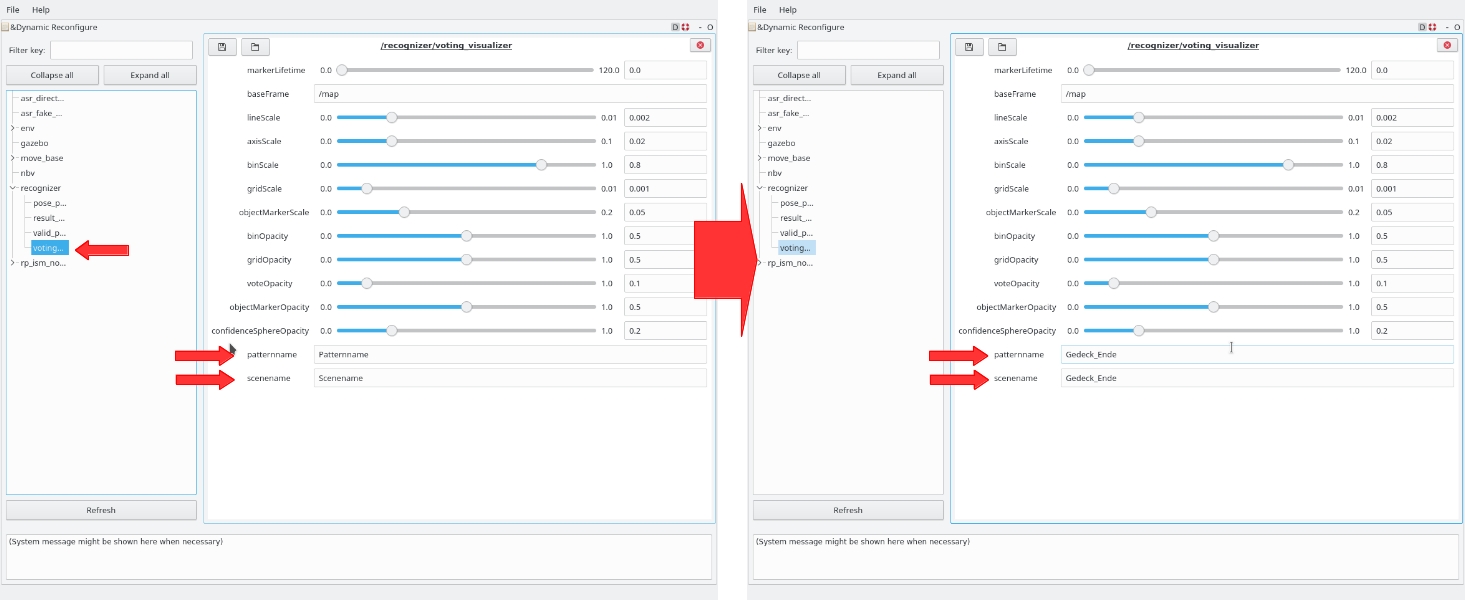

2. rqt_reconfigure

2.1. Start rqt_reconfigure in a new console

rosrun rqt_reconfigure rqt_reconfigure

2.2. Go to recognizer -> voting_visualizer and adapt Pattername and Scenename (picture 1 below). Choose the names that are set in your database (picture 2 below). An easy way to access the database is the sqlitebrowser application.
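If sqlitebrowser is not installed, the database can also be inspected with Python's built-in sqlite3 module. The sketch below makes no assumption about the table or column names that asr_ism uses: it lists whatever tables and columns the file contains, so you can find the column holding the pattern names and then print its distinct values. The function names are made up for illustration.

```python
import os
import sqlite3


def _quote(identifier):
    """Quote a table or column name for safe use inside an SQL statement."""
    return '"' + identifier.replace('"', '""') + '"'


def describe_database(path):
    """Return {table_name: [column_name, ...]} for every table in the file."""
    if not os.path.isfile(path):
        # sqlite3.connect would silently create an empty file otherwise.
        raise IOError("database file not found: %s" % path)
    con = sqlite3.connect(path)
    try:
        tables = [row[0] for row in con.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
        schema = {}
        for table in tables:
            # PRAGMA table_info yields one row per column; index 1 is its name.
            rows = con.execute("PRAGMA table_info(%s)" % _quote(table))
            schema[table] = [row[1] for row in rows]
        return schema
    finally:
        con.close()


def distinct_values(path, table, column):
    """Return the sorted distinct values stored in one column of one table."""
    if not os.path.isfile(path):
        raise IOError("database file not found: %s" % path)
    con = sqlite3.connect(path)
    try:
        query = "SELECT DISTINCT %s FROM %s ORDER BY 1" % (_quote(column), _quote(table))
        return [row[0] for row in con.execute(query)]
    finally:
        con.close()
```

Run describe_database on the file set in "dbfilename" first, then pass the table and column that look like they store pattern names to distinct_values.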

3. rviz

3.1. Start rviz

3.2. Add one MarkerArray with topic "/ism_results_visualization".

3. Place your robot in front of the scene to be recognized.

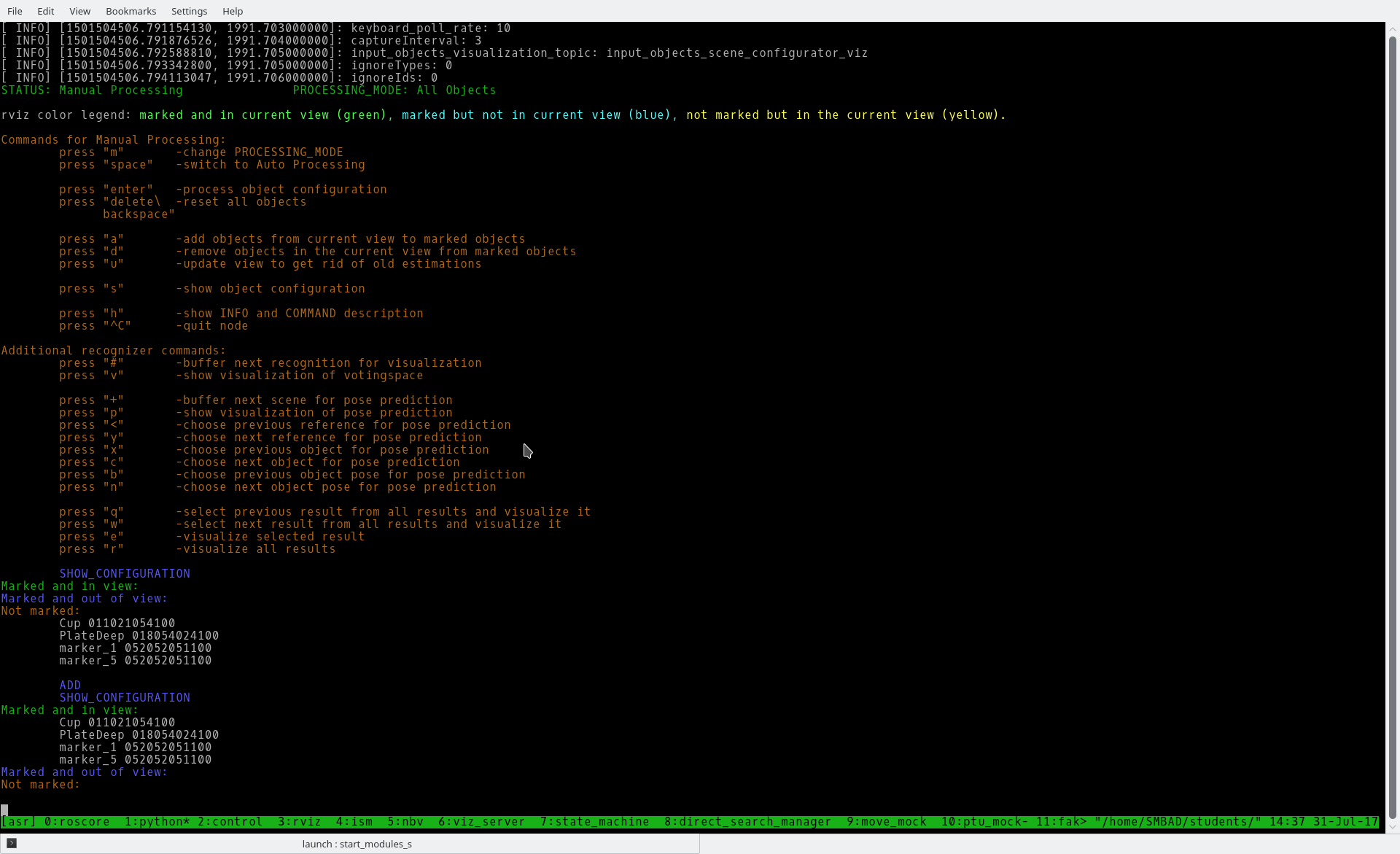

4. Press "s" to show all recognized objects.

5. Press "a" to mark all recognized objects (needed for the scene recognition). Only marked objects are used for recognition.

The screenshot shows what you will see if you press "s", "a", and then "s" again (with different object names, of course).

6. Press "enter"

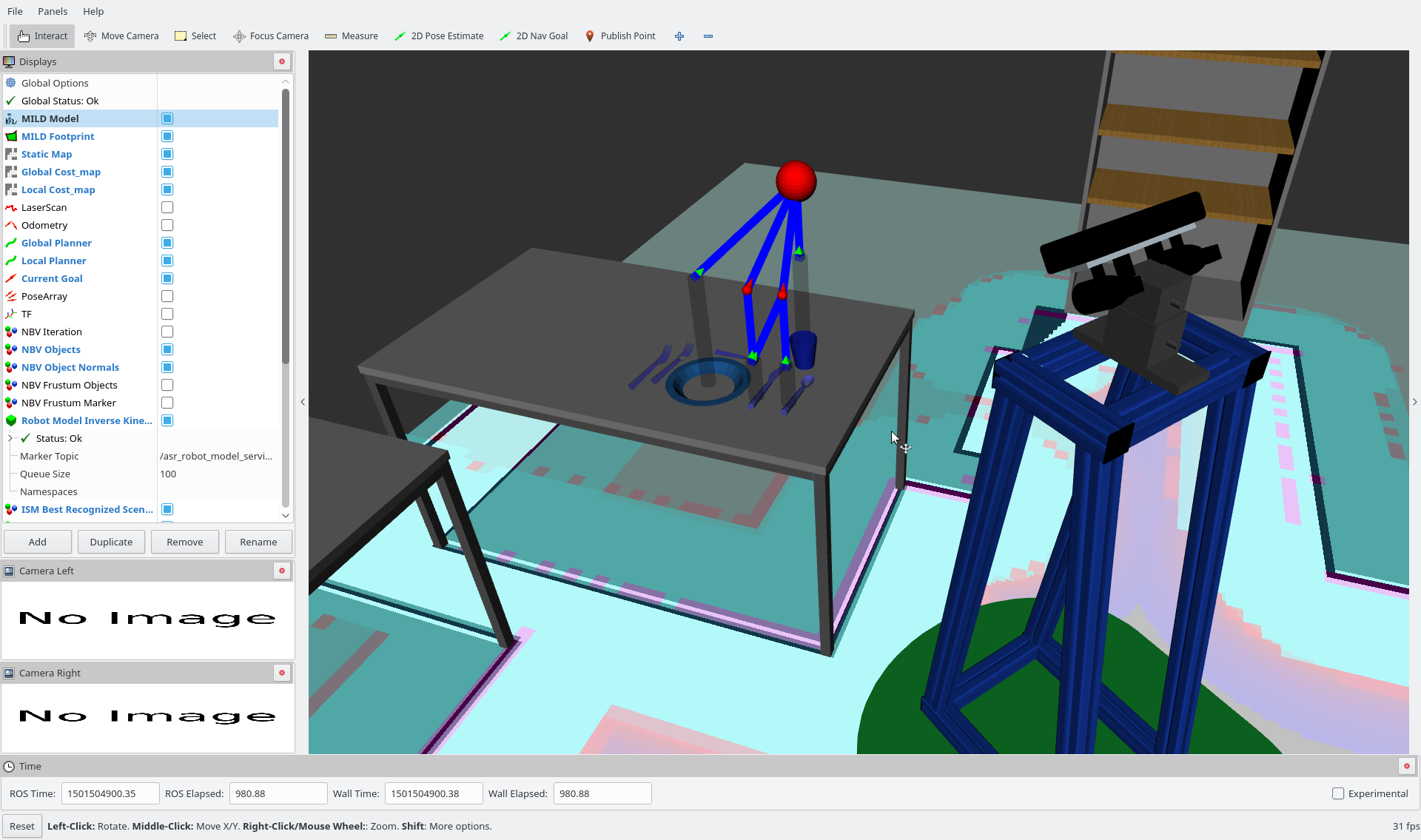

7. Now you can see an ism-tree with the recognized object in the recognized scene.

8. Now you can choose between visualisations

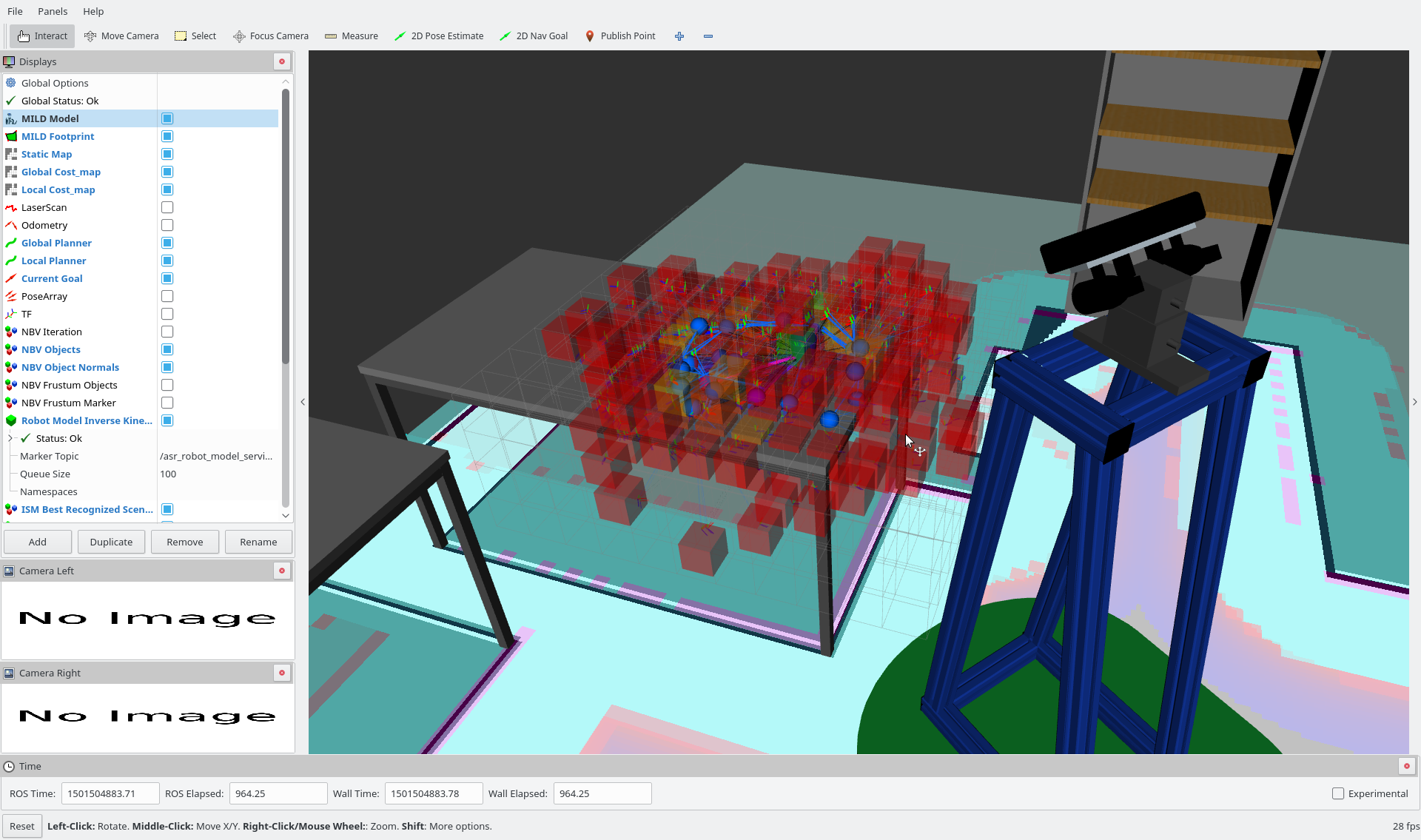

8.1. The first visualisation shows the voting space.

8.1.1. Press "#", then "enter" again, and then "v" to visualise it.

The voting space could look like this (the red and orange cubes).

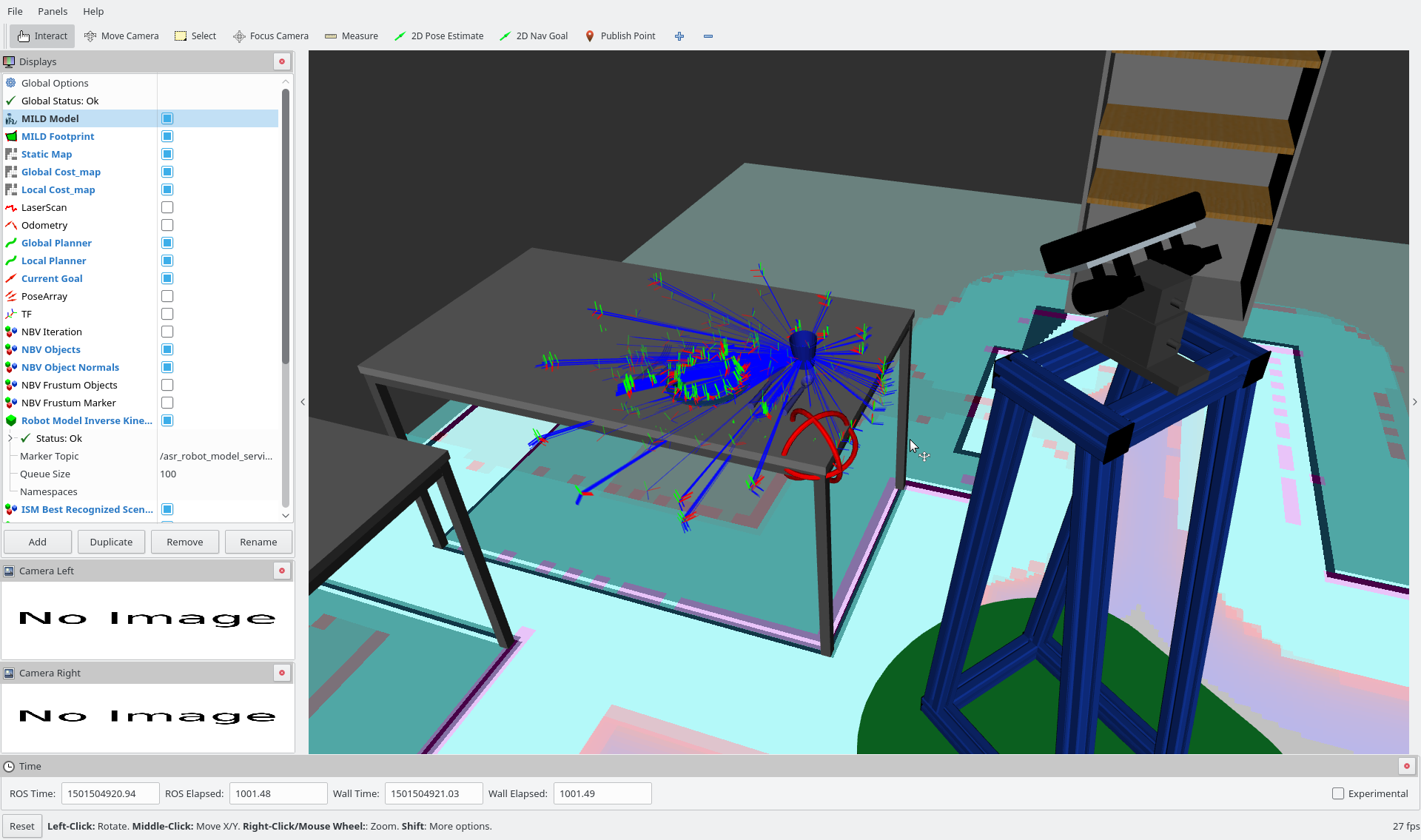

8.2. The second visualisation shows the pose predictions for the objects that were not recognized.

8.2.1. Press "+", then "enter" again, and then "p".

The blue lines show where the object can be placed. The red sphere marks one actual prediction. To switch between predicted objects and their predicted poses, use the keys y, x, c, b, and n, as described in the help (press "h" to show it).

Common mistakes

You may see the following error:

[Error] [...]: Lookup would require extrapolation ...

This happens when your PC is too slow. It is not a problem, and you can ignore the error.

[ERROR] [1507807943.419082673]: Votingspace doesn't contain pattern wrong_name.Skipping visualization.

The pattern name set in dynamic reconfigure under "recognizer" -> "voting_visualizer" -> "patternname" does not match any pattern name in your SQLite database. See step 2.2 for how to look up the names stored in your database.
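A name can also be checked against the names from your database before you type it into rqt_reconfigure. The following is a rough sketch using Python's difflib: the function name and the pattern names in the usage example are hypothetical, and the list of database names has to be supplied by you.

```python
import difflib


def check_pattern_name(configured, names_in_db):
    """Compare a configured pattern name with the names found in the database.

    Returns (ok, suggestions). ok is True only on an exact, case-sensitive
    match; otherwise suggestions holds up to three similar database names.
    """
    if configured in names_in_db:
        return True, []
    suggestions = difflib.get_close_matches(configured, names_in_db, n=3, cutoff=0.6)
    return False, suggestions


# Hypothetical names for illustration only; use the names from your own database.
ok, suggestions = check_pattern_name("brekfast", ["breakfast", "office_desk"])
```

If ok is False and suggestions is not empty, the configured name is probably a misspelling of one of the suggested names.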