
Setting up the asr_recognizer_prediction_ism package

Description: In this tutorial you will configure the asr_recognizer_prediction_ism package so that it can be used with the other ASR packages.

Tutorial Level: BEGINNER



Setup

Start the asr_world_model and asr_object_database packages first; the asr_recognizer_prediction_ism package needs both of them to work correctly.

Tutorial

Before you can launch the rp_ism_node (rp is short for 'recognizer prediction'), you must set the database that it should use. The configuration files are located in the directory asr_recognizer_prediction_ism/param/. The file scene_recognition.yaml contains the commented-out line

#dbfilename: PATH/DBNAME.sqlite

at the top. Uncomment this line and replace PATH/DBNAME.sqlite with the path to a trained SQLite database containing information about learned scenes, e.g.:

dbfilename: /home/user/database.sqlite

You can use one of the databases in the scene_recordings directory of the asr_resources_for_active_scene_recognition package.
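If you prefer to script this edit, the following is a minimal Python sketch that uncomments the dbfilename line in the text of scene_recognition.yaml and sets the path. The helper name set_dbfilename is hypothetical and not part of the ASR packages; the sketch only assumes that the commented line has the form shown above.

```python
import re

# Matches the dbfilename entry whether or not it is commented out.
# Only spaces and tabs are allowed around '#', so the match stays on one line.
_DBFILENAME_LINE = re.compile(r"^[ \t]*#?[ \t]*dbfilename[ \t]*:.*$", re.MULTILINE)


def set_dbfilename(yaml_text: str, db_path: str) -> str:
    """Return yaml_text with the dbfilename entry uncommented and set to db_path.

    Hypothetical helper for illustration; it is not part of the ASR packages.
    """
    new_line = f"dbfilename: {db_path}"  # note the space after the colon
    if _DBFILENAME_LINE.search(yaml_text):
        # Replace only the first matching line; a function is used as the
        # replacement so that backslashes in db_path are taken literally.
        return _DBFILENAME_LINE.sub(lambda _match: new_line, yaml_text, count=1)
    # No entry found: add one at the top of the file.
    return new_line + "\n" + yaml_text


if __name__ == "__main__":
    # The second line is a placeholder standing in for the rest of the file.
    example = "#dbfilename: PATH/DBNAME.sqlite\n# other settings follow\n"
    print(set_dbfilename(example, "/home/user/database.sqlite"))
```

To apply it, read the contents of scene_recognition.yaml, pass them through set_dbfilename, and write the result back. Editing the file by hand works just as well.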

In addition, it might be necessary to change the sensitivity values. The file scene_recognition.yaml has a section called 'sensitivity' with two values that can be changed:

- bin_size: how far the position of an object may deviate from the position stored in the database while the object is still counted as part of the scene

- maxProjectionAngleDeviation: how far the orientation of an object may deviate from the orientation stored in the database while the object is still counted as part of the scene

Increase these values if objects with small deviations are not counted as part of a scene, and decrease them if objects with large deviations are still counted as part of a scene.
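The effect of the two sensitivity values can be pictured with a deliberately simplified Python sketch. It treats both values as plain upper bounds on the deviation, which is an assumption made for illustration only; it is not how asr_recognizer_prediction_ism implements scene recognition internally. The function name, the argument names, and the numbers in the example are all hypothetical.

```python
def counts_as_part_of_scene(position_deviation: float,
                            angle_deviation: float,
                            bin_size: float,
                            max_projection_angle_deviation: float) -> bool:
    """Simplified tolerance check (illustration only, not the package's algorithm).

    An object is accepted when both its position deviation and its
    orientation deviation stay within the configured limits. The deviations
    are assumed to be given in the same units as the configured values.
    """
    return (position_deviation <= bin_size
            and angle_deviation <= max_projection_angle_deviation)


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to show the effect of tuning.
    print(counts_as_part_of_scene(0.2, 10.0, bin_size=0.1,
                                  max_projection_angle_deviation=30.0))
    # A larger bin_size tolerates the same position deviation.
    print(counts_as_part_of_scene(0.2, 10.0, bin_size=0.3,
                                  max_projection_angle_deviation=30.0))
```

In this picture, raising a value widens the tolerance and lowering it narrows the tolerance, which matches the tuning advice above.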

If there are rotation-invariant objects in the database, you may want to change the options for rotation-invariant objects in the file scene_recognition.yaml. If you set the enableRotationMode parameter to 1, rotation-invariant objects that were found are rotated to the rotationFrame. If you set it to 2, they are rotated to another object (which should not itself be rotation-invariant) with the object type rotationObjectType and the object ID rotationObjectId.

To configure the prediction, edit the file pose_prediction.yaml. The predictionGenerationFactor parameter changes the number of predicted objects. The importance_resampled_size parameter sets how many recognition results are drawn by importance sampling to predict object poses.
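As a rough illustration of what drawing recognition results by importance sampling means, the Python sketch below draws a fixed number of results with replacement, with probability proportional to a weight. It is a generic resampling sketch, not the package's implementation: the function name, the (scene name, confidence) representation, and the use of a confidence as the weight are assumptions.

```python
import random
from typing import List, Optional, Tuple

# Hypothetical representation of a recognition result: (scene name, confidence).
RecognitionResult = Tuple[str, float]


def importance_resample(results: List[RecognitionResult],
                        resampled_size: int,
                        rng: Optional[random.Random] = None) -> List[RecognitionResult]:
    """Draw resampled_size results with replacement, weighted by confidence."""
    rng = rng or random.Random()
    weights = [confidence for _, confidence in results]
    if not results or sum(weights) <= 0:
        return []  # nothing to draw from
    # random.Random.choices samples with replacement, proportional to the weights.
    return rng.choices(results, weights=weights, k=resampled_size)


if __name__ == "__main__":
    # Hypothetical scene names and confidences.
    recognized = [("scene_a", 0.9), ("scene_b", 0.4), ("scene_c", 0.1)]
    for sample in importance_resample(recognized, 5, random.Random(0)):
        print(sample)
```

In this sketch, resampled_size plays the role of importance_resampled_size: a larger value draws more results, and results with higher weight are drawn more often.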

When you have finished configuring, you can start the rp_ism_node by calling:

roslaunch asr_recognizer_prediction_ism rp_ism_node.launch

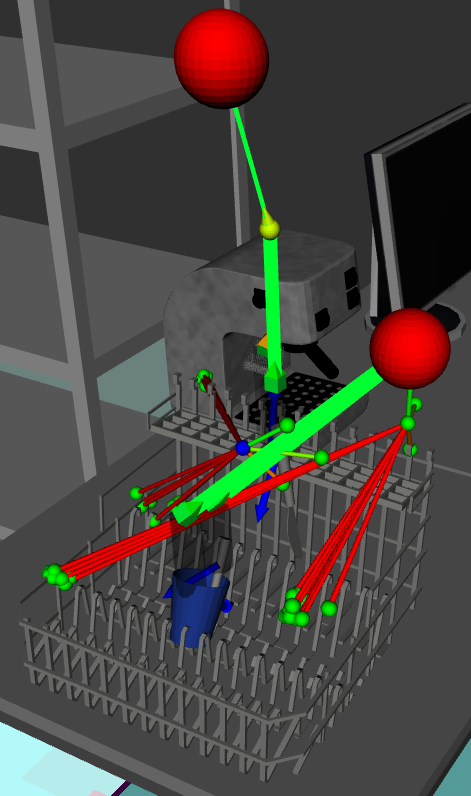

To visualize the computations of the rp_ism_node in rviz, you can subscribe to the following topics:

- /ism_results_visualization: shows the recognized scenes

- /ism_results_for_pose_prediction_viz: shows the sampled scene recognition results that were used for pose prediction

- /pose_prediction_results_visualization: shows the predicted poses for the missing objects

For the image above, the topic /env/asr_world_model/found_object_visualization was also used, so that the found objects are shown as well.