
Do scene recognition on a real robot

Description: In this tutorial we run scene recognition on a real robot.

Tutorial Level: BEGINNER


Description

In this tutorial we run scene recognition on a real robot.

Setup

Make sure you are using the robot as the ROS master. To do this, export the following environment variables:

- export ROS_MASTER_IP=http://{ip}:{port} (IP of the robot)

- export ROS_MASTER_URI=http://{ip}:{port} (URI of the robot)

- export ROS_HOSTNAME=$(hostname) (this is your hostname)
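The exports above can be collected in a small script. This is only a sketch: the IP address is a hypothetical placeholder, and the port 11311 is the default port of the ROS master, which may differ on your robot.

```shell
#!/usr/bin/env bash
# Hypothetical values: replace them with the address of your robot.
# 11311 is the default port of the ROS master.
ROBOT_IP="192.168.0.10"
ROBOT_PORT="11311"

# The three variables exactly as listed in this tutorial.
export ROS_MASTER_IP="http://${ROBOT_IP}:${ROBOT_PORT}"
export ROS_MASTER_URI="http://${ROBOT_IP}:${ROBOT_PORT}"
export ROS_HOSTNAME="$(hostname)"

# Print the result so it can be checked before any node is started.
env | grep '^ROS_' | sort
```

If you save this to a file, load it with `source` instead of executing it, so that the variables stay set in your current shell.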

Do the following steps on your robot (e.g. via SSH):

Set the following settings in asr_next_best_view/param/next_best_view_settings_real.yaml:

- radius: set it to "0.15". This is the default value, which should work fine for most users, as the radius gets adapted during the calculation anyway. It defines how finely the floor is sampled for the next robot position.

- sampleSizeUnitSphereSampler: set it to "384". This is the default value, which should work fine for most users. It defines how many possible camera orientations are computed. If you set it too low, the robot might miss objects because it doesn't take some orientations into account. Lower the value if the calculation takes too long.

- colThresh: the default value is "40", but the right value depends on your robot and your environment. It defines how close the robot moves to any objects. Increase the default if you want the robot to move closer to objects, but be aware that if you set it too high, the robot might collide with objects. For further information about the threshold, take a look at http://wiki.ros.org/costmap_2d#Inflation
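To review the three parameters without opening an editor, a small helper like the following may be handy. It is a sketch that assumes each parameter sits on its own line in the form "name: value"; the function name show_nbv_params is made up for this example.

```shell
#!/usr/bin/env bash
# Print the radius, sampleSizeUnitSphereSampler and colThresh lines of a
# settings file. Assumption: each parameter is written as "name: value"
# on a line of its own.
show_nbv_params() {
    local settings_file="$1"
    grep -E '^[[:space:]]*(radius|sampleSizeUnitSphereSampler|colThresh)[[:space:]]*:' "$settings_file"
}

# Usage on the robot (rospack resolves the package directory):
# show_nbv_params "$(rospack find asr_next_best_view)/param/next_best_view_settings_real.yaml"
```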

Set the following settings in asr_state_machine/param/params.yaml:

- mode: set it to "2" (indirect search)

- If you fail to recognize the scene, try increasing "bin_size" and "maxProjectionAngleDeviation" in asr_recognizer_prediction_ism/param/scene_recognition.yaml. These define the allowed deviation of the position ("bin_size", in meters) and the rotation ("maxProjectionAngleDeviation", in degrees) of the detected objects from the recorded object position and rotation.

- Set dbfilename in asr_recognizer_prediction_ism/param/scene_recognition.yaml to the sqlite database of your recorded scene.
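A wrong database path is easy to overlook, so it may be worth checking that the file named by dbfilename exists. The sketch below assumes the entry is a single line of the form "dbfilename: /path/to/file" and that the path is absolute or valid from the current directory; the function name check_scene_db is made up for this example.

```shell
#!/usr/bin/env bash
# Extract the dbfilename value from a YAML file and check that the file exists.
# Assumption: the entry is a single line of the form "dbfilename: /path/to/file".
check_scene_db() {
    local yaml_file="$1"
    local db_path
    # Take the first matching line, drop the key, then strip quotes and
    # trailing whitespace from the value.
    db_path="$(sed -n 's/^[[:space:]]*dbfilename[[:space:]]*:[[:space:]]*//p' "$yaml_file" \
        | head -n 1 | tr -d "\"'" | sed 's/[[:space:]]*$//')"
    if [ -z "$db_path" ]; then
        echo "no dbfilename entry found in $yaml_file" >&2
        return 1
    fi
    if [ -f "$db_path" ]; then
        echo "database found: $db_path"
    else
        echo "database missing: $db_path" >&2
        return 1
    fi
}

# Usage on the robot:
# check_scene_db "$(rospack find asr_recognizer_prediction_ism)/param/scene_recognition.yaml"
```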

- Run "rosrun asr_resources_for_active_scene_recognition start_modules_real.sh" and make sure all tmux windows are set up correctly and don't show errors (see section 3 in AsrResourcesForActiveSceneRecognition for what the tmux windows should look like; pay particular attention to the "move" window).

- Open another tmux window and run "rosrun asr_resources_for_active_scene_recognition start_recognizers.sh". Depending on your setup, you may need to do this directly on your robot's GUI (not via SSH).

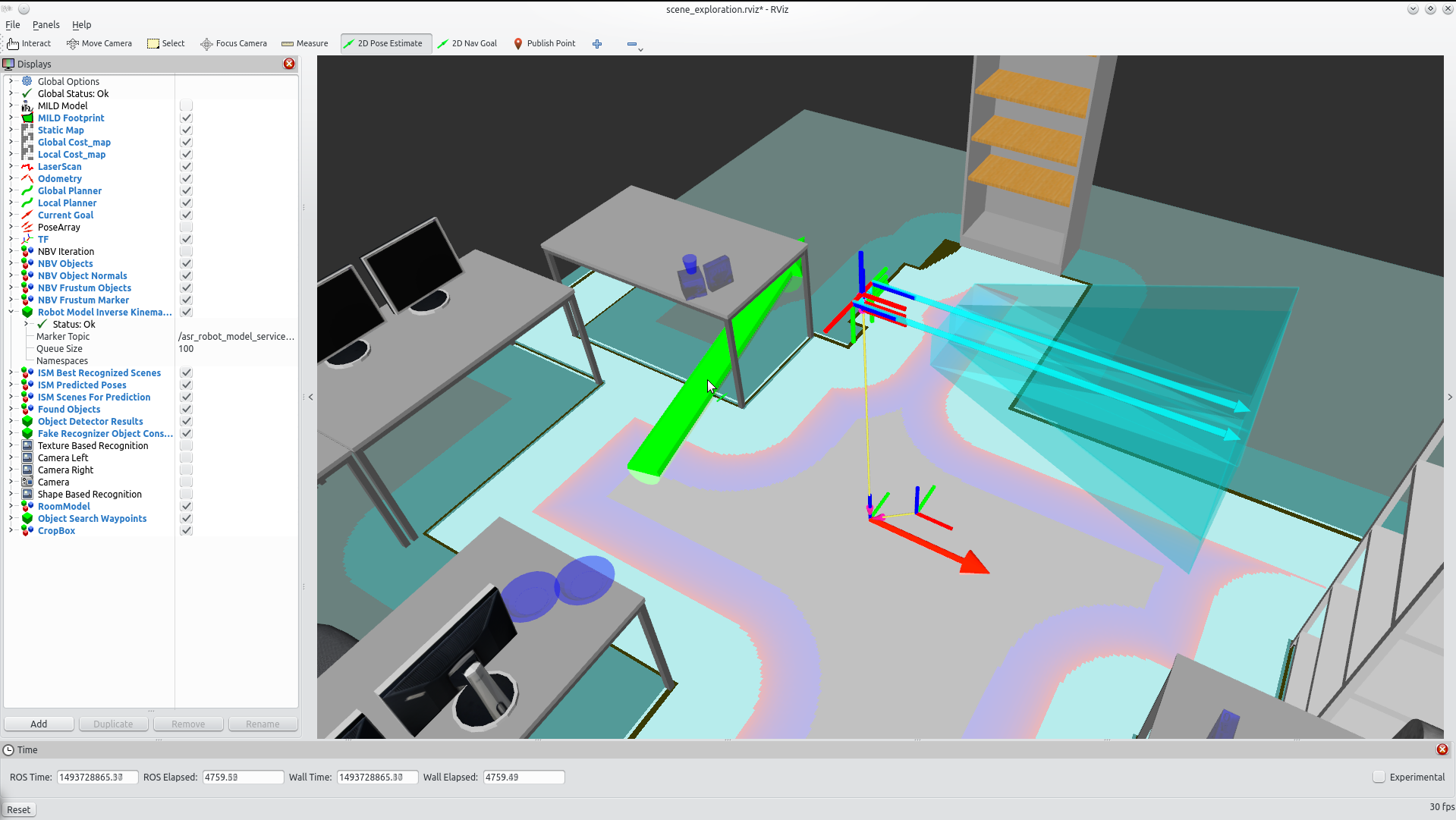

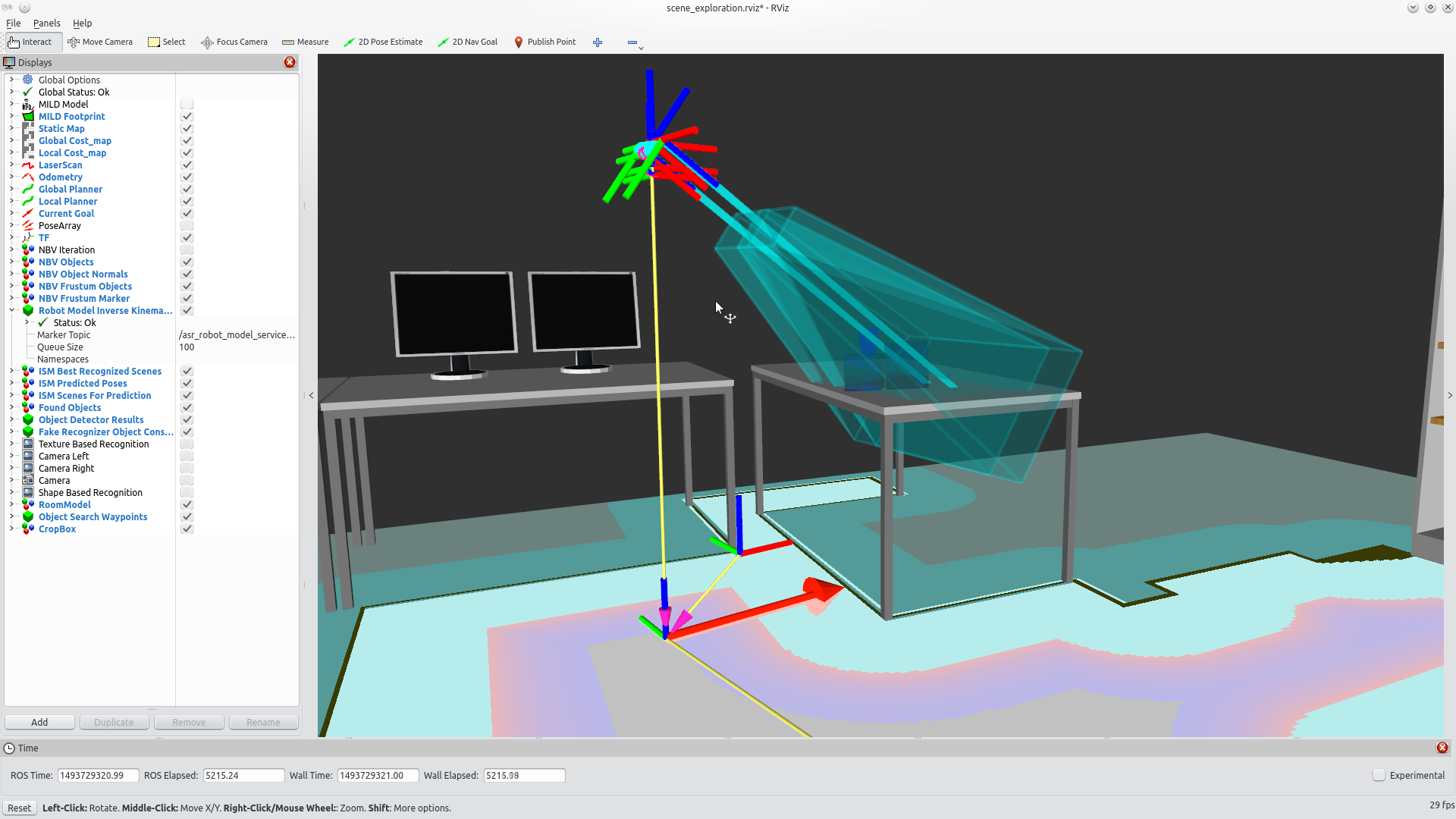

- On your local machine, launch rviz: roslaunch asr_resources_for_active_scene_recognition rviz_scene_exploration.launch
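Before continuing, you may want to confirm that the tmux windows this tutorial relies on actually exist. The following sketch checks only the two window names mentioned explicitly in the text ("move" and "ptu"); the start script may create more, and the function name missing_windows is made up for this example.

```shell
#!/usr/bin/env bash
# Print every expected window name that is missing from the names read on stdin.
# Only the window names named in this tutorial are checked.
missing_windows() {
    local names expected
    names="$(cat)"
    for expected in move ptu; do
        # -F: literal match, -x: the whole line must match the name.
        if ! printf '%s\n' "$names" | grep -Fx "$expected" >/dev/null; then
            echo "$expected"
        fi
    done
}

# Usage on the robot (lists the window names of all tmux sessions):
# tmux list-windows -a -F '#{window_name}' | missing_windows
```

No output means both windows were found. A window that exists can still show errors, so the visual check described above remains necessary.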

Tutorial

1. In rviz, use "2D Pose Estimate" to set the robot position so that it matches reality as closely as possible.

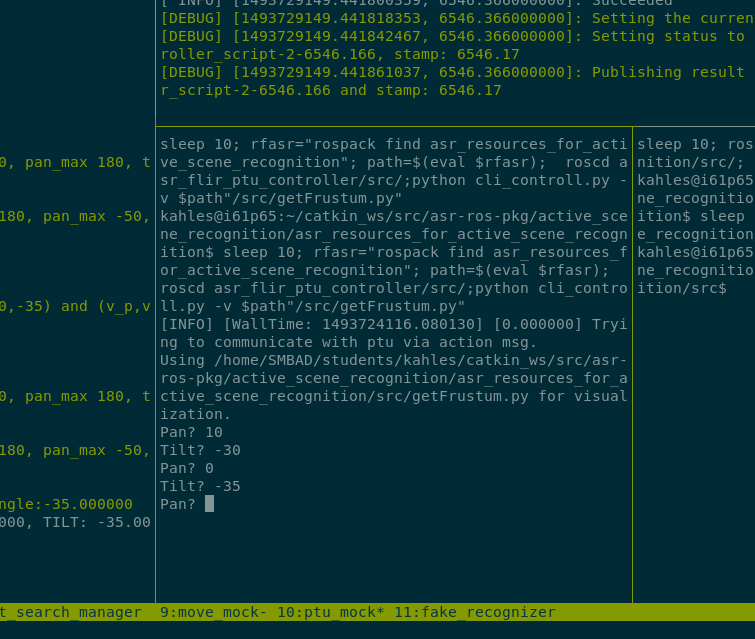

2. Use the lower right panel in the tmux window "ptu", which says "Pan?", to move the PTU. First enter the pan value and confirm with enter, then enter the tilt value and confirm with enter. The PTU should now move to the goal, which is shown by a new blue frustum. If the values are invalid, nothing will happen and you'll get error messages in other panels of the "ptu" window.

3. Enter the rviz state_machine window and press enter

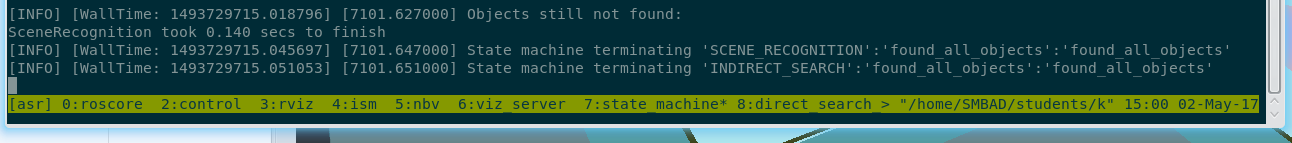

4. Wait a few minutes until the robot has found all objects. You can watch this process in rviz. After it has found all objects, the state machine terminates with return code "found_all_objects".

5. To get a list of all objects in a scene, execute: rosservice call /env/asr_world_model/get_all_objects_list "{}"