Package Summary

This package implements the logic of our Online World Model, while the Offline Knowledge Base is distributed across asr_object_database, mild_navigation and asr_visualization_server. It contains four types of information: (1) all estimations of objects which were localized by object detection (object models); (2) all views in which objects of given types have been searched on the advice of asr_next_best_view and asr_direct_search_manager; (3) all complete patterns recognized by the asr_recognizer_prediction_ism (scene models); (4) some additional information about the objects: IntermediateObjectWeight and RecognizerName.

- Maintainer: Meißner Pascal <asr-ros AT lists.kit DOT edu>

- Author: Aumann Florian, Borella Jocelyn, Hutmacher Robin, Karrenbauer Oliver, Meißner Pascal, Schleicher Ralf, Stöckle Patrick, Trautmann Jeremias

- License: BSD

- Source: git https://github.com/asr-ros/asr_world_model.git (branch: master)

Description

This package implements the logic of our Online World Model, while the Offline Knowledge Base is distributed across asr_object_database, mild_navigation and asr_visualization_server. It contains four types of information:

- All estimations of objects which were localized by object detection (object models)

- All views in which objects of given types have been searched on the advice of asr_next_best_view and asr_direct_search_manager

- All complete patterns recognized by the asr_recognizer_prediction_ism (scene models)

- Some additional information about the objects: IntermediateObjectWeight and RecognizerName

Functionality

The asr_world_model is used by the active_scene_recognition to store data from different topics. In some cases it also provides functions that operate on the stored data. The data can be divided into four fields.

Found Objects

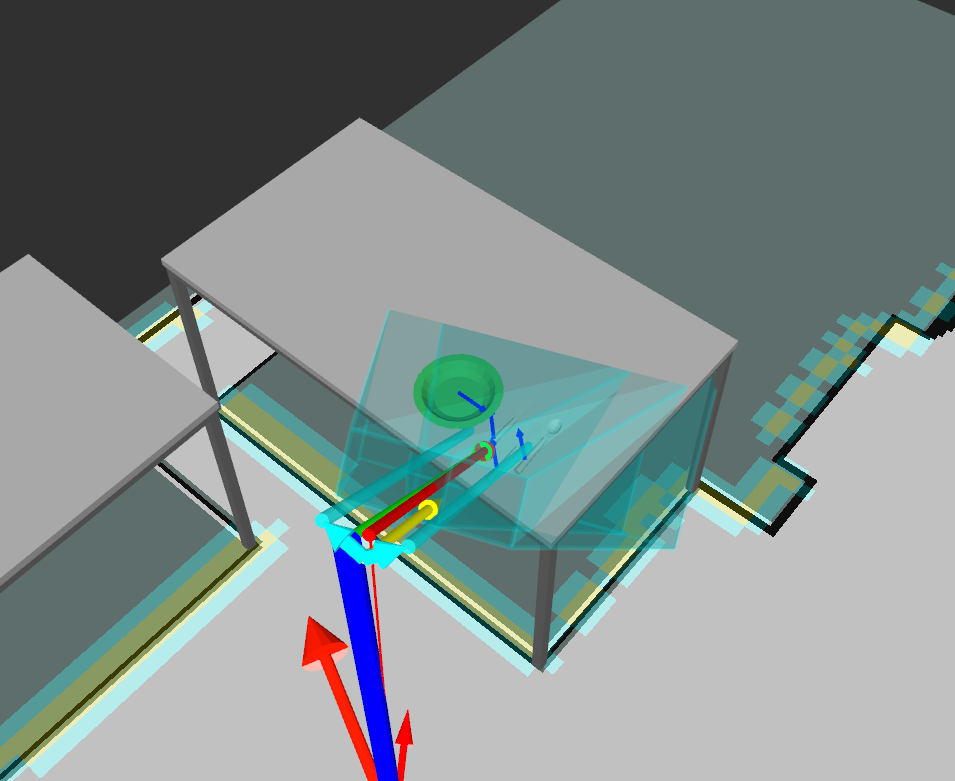

All found objects detected by the asr_state_machine are pushed to the asr_world_model. To handle false positive object detections, the found object poses are grouped into clusters: object detections which are close to each other form a cluster. An object counts as recognized if the size of its cluster is above a specific threshold (object_rating_min_count). In the picture, the plate and two dishes have been recognized.
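The neighbor counting described above can be sketched as follows. This is a minimal illustration, not the package's implementation: it compares positions only (the real node also has an orientation threshold), the function names are hypothetical, and it treats object_rating_min_count as an inclusive minimum, which is an assumption based on the parameter description.

```python
import math

def count_neighbors(poses, index, max_distance):
    """Count the other detections within max_distance of poses[index]."""
    return sum(
        1
        for i, other in enumerate(poses)
        if i != index and math.dist(poses[index], other) <= max_distance
    )

def recognized_detections(poses, max_distance, min_count):
    """Keep detections that have at least min_count neighbors (assumed inclusive)."""
    return [
        pose
        for i, pose in enumerate(poses)
        if count_neighbors(poses, i, max_distance) >= min_count
    ]

# Three detections close together and one isolated false positive.
detections = [(0.00, 0.00, 0.0), (0.01, 0.00, 0.0), (0.00, 0.02, 0.0), (1.0, 1.0, 1.0)]
accepted = recognized_detections(detections, max_distance=0.05, min_count=2)
```

Here max_distance plays the role of object_position_distance_threshold and min_count the role of object_rating_min_count; the isolated detection is dropped because it has no neighbors.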

To handle variations in found object positions and orientations, it is possible to sample around the found objects. Different parameters adjust the sampling in position and orientation. Each additional sample is saved and treated as a normal object detection. The asr_recognizer_prediction_ism can use the samples to predict object hypotheses for the different variations.

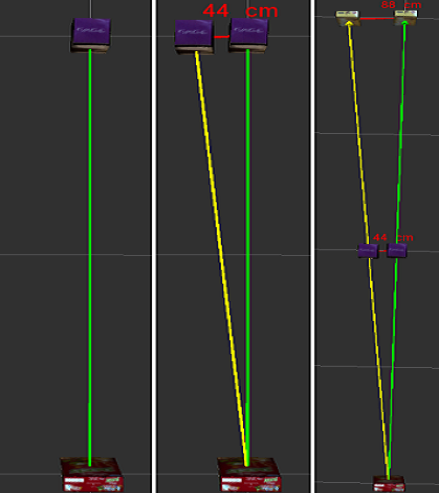

There are two ways to set the range of the sampling deviations. The first is to read the deviations from the asr_object_database, where each axis can be specified differently for each object. In the second mode the deviations are calculated in the same way for every object and depend on the parameters bin_size and maxProjectionAngleDeviation of the asr_recognizer_prediction_ism.

Sampling takes place in each dimension defined. If sampling takes place in all 6 dimensions, the number of samples grows very quickly. Sampling in position is normally less important. Sampling in orientation becomes necessary if the relations to the other objects are long, because the angular error grows with distance.
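To see why the number of samples grows so quickly, the following sketch builds a grid of sampled positions. It assumes that the per-axis offsets are combined as a Cartesian product and that the offsets are evenly spaced; both are assumptions for illustration, and the helper names are hypothetical.

```python
from itertools import product

def axis_offsets(deviation, samples_per_side):
    """Evenly spaced offsets in [-deviation, +deviation], including 0."""
    if samples_per_side == 0:
        return [0.0]
    step = deviation / samples_per_side
    return [k * step for k in range(-samples_per_side, samples_per_side + 1)]

def sample_positions(position, deviations, samples_per_side):
    """Combine the per-axis offsets into a grid of candidate positions."""
    grids = [axis_offsets(d, samples_per_side) for d in deviations]
    return [
        tuple(p + o for p, o in zip(position, offsets))
        for offsets in product(*grids)
    ]

samples = sample_positions((1.0, 2.0, 0.5), deviations=(0.02, 0.02, 0.02), samples_per_side=1)
# 3 values per axis over 3 axes give 3**3 = 27 poses;
# the same grid over all 6 dimensions would give 3**6 = 729 poses per object.
```

With one extra sample on each side of every axis, a single detection already turns into 27 positions, which is why restricting the sampled dimensions or objects matters.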

The sampled poses can be used by the asr_recognizer_prediction_ism in the scene_recognition. The parameter objectSetMaxCount in the asr_recognizer_prediction_ism caps the number of poses used per object. This is necessary because the scene_recognition currently does not scale well with a large number of poses. The parameter should be set to (#Samples)^(#InputObjects).
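As a quick worked example of the rule (#Samples)^(#InputObjects), the helper below (a hypothetical name, not part of the package) computes the value for a few small configurations:

```python
def object_set_max_count(samples_per_object, input_objects):
    """Value suggested by the rule (#Samples)^(#InputObjects)."""
    return samples_per_object ** input_objects

# 3 samples per object and 4 input objects -> 3**4 = 81
suggested = object_set_max_count(3, 4)
```

The value grows exponentially with the number of input objects, so it is worth keeping the number of samples per object small.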

Viewports

During the 3D-object-search different viewports are taken. The views can come from different sources such as the asr_next_best_view and asr_direct_search_manager. The views are managed here to prevent these sources from searching for objects from similar perspectives multiple times.

Complete Patterns

All complete patterns (scenes) recognized by the asr_recognizer_prediction_ism are saved in the asr_world_model and can be accessed from it.

Common Information

The asr_world_model holds several kinds of common information. One is the list of all searched_object_types. For each type it is possible to access the RecognizerName and the IntermediateObjectWeight (generated by the asr_intermediate_object_generator). This information can be read automatically from the database (set in the asr_recognizer_prediction_ism) or from a manually created world_description.

Usage

The asr_world_model is made for the active_scene_recognition and is used by several packages. The asr_state_machine is the starting point for the 3D-object-search.

Needed packages

Needed software

Start system

roslaunch asr_world_model world_model.launch

ROS Nodes

Published Topics

env/asr_world_model/found_object_visualization (visualization_msgs/MarkerArray): Visualisation of the objects determined as found

Parameters

Launch file

asr_world_model/launch/world_model.launch

intermediate_object_weight_file_name: the path where the intermediate_object_weights XML file should be located. If the name contains XXX, the XXX will be replaced with the current database name, so the name does not have to be changed manually after a database change.
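The XXX substitution can be pictured with a small sketch. The file path and database name below are made up for illustration, the function name is hypothetical, and replacing every occurrence of XXX is an assumption:

```python
def resolve_weight_file_name(template, database_name):
    """Replace the XXX placeholder with the database name (assumed: all occurrences)."""
    return template.replace("XXX", database_name)

# Hypothetical path and database name, for illustration only.
resolved = resolve_weight_file_name("rsc/intermediate_object_weights_XXX.xml", "my_database")
```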

yaml file

asr_world_model/param/world_model_settings.yaml

enable_object_sampling: Sample additional object poses for each recognition result to take pose uncertainty into account

calculate_deviations: If true, the deviations for sampling a found object will be calculated depending on asr_recognizer_prediction_ism/bin_size and asr_recognizer_prediction_ism/maxProjectionAngleDeviation, otherwise they will be loaded from the asr_object_database

deviation_number_of_samples_position: The number of additional object poses per x-, y- and z-axis * 2 (* 2, because samples are taken in both the +axis and the -axis direction)

deviation_number_of_samples_orientation: Analogous for orientation

objects_to_sample: Specify the object(s) (type and id) which should be sampled to reduce the combinatorial explosion. Leave blank ("") to sample all objects. Example: !HMilkBio,0;MultivitaminJuice,0;

object_position_distance_threshold: The maximum distance between two poses for them to be considered neighbors

object_orientation_rad_distance_threshold: Analogous for orientation

viewport_position_distance_threshold: Threshold for treating two viewports as approximately equal (used for FilterViewportDependingOnAlreadyVisitedViewports)

viewport_orientation_rad_distance_threshold: Analogous for orientation

object_rating_min_count: The minimum number of neighbors that a cluster needs to consider an object as detected

use_default_intermediate_object_weight: If true, the file from the asr_intermediate_object_generator will be neither used nor generated, and all weights will be the same. If false, the file generated by the asr_intermediate_object_generator will be used. If the file is not present, the asr_intermediate_object_generator will be executed during start-up.

default_intermediate_object_weight: The weight used if use_default_intermediate_object_weight is true or the asr_intermediate_object_generator does not work correctly

use_world_description: If true, the object_count, intermediate_object_weights and recognizer_name will be parsed from the world_description, otherwise the information will be gained from the SQL-database

debugLevels: The debug can be configured with different topics. Currently available are: ALL, NONE, PARAMETERS, SERVICE_CALLS, COMMON_INFORMATION, FOUND_OBJECT, VIEW_PORT, COMPLETE_PATTERN

world_description (optional)

The world_description will only be used if use_world_description == true. Otherwise, the information will be parsed from the SQL-database. The world_description can be used when the database is incomplete or for testing purposes, e.g. to search only for a subset of objects. To specify the world_description, open the following file (consider making a backup of the current version first):

rsc/world_descriptions/world_description.yaml

The following information can be inserted:

recognizers_string_map: all pairs of object_types and recognizer_names

weight_string_map: all pairs of object_types and intermediate_object_weights

object_string_map: all pairs of object_types, object_ids and their object_count (number of same objects present in the world)

The same object_types should be defined for each map. The order of object_types in each map is not relevant. The id represents the color for segmentable objects. The default id for other objects is 0. The following format has to be used (example for two objects):

recognizers_string_map: "object_type_1,recognizer_name;object_type_2,recognizer_name"

weight_string_map: "object_type_1,intermediate_object_weight;object_type_2,intermediate_object_weight"

object_string_map: "object_type_1&object_id_1,object_count;object_type_2&object_id_2,object_count"
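To make the string format concrete, here is a minimal parsing sketch. It is not the package's parser: the function names are hypothetical, the recognizer names and weights are placeholder values, and treating the weights as floats and the ids as strings is an assumption.

```python
def parse_pairs(text):
    """Split "key,value;key,value" into a dict, ignoring empty entries."""
    pairs = {}
    for entry in filter(None, (part.strip() for part in text.split(";"))):
        key, value = entry.split(",", 1)
        pairs[key.strip()] = value.strip()
    return pairs

def parse_object_string_map(text):
    """Map (object_type, object_id) to object_count."""
    return {
        tuple(key.split("&", 1)): int(count)
        for key, count in parse_pairs(text).items()
    }

recognizers = parse_pairs("object_type_1,recognizer_a;object_type_2,recognizer_b")
weights = {
    object_type: float(weight)
    for object_type, weight in parse_pairs("object_type_1,0.5;object_type_2,1.0").items()
}
objects = parse_object_string_map("object_type_1&0,2;object_type_2&0,1")

# The same object_types should appear in every map.
same_types = set(recognizers) == set(weights) == {object_type for object_type, _ in objects}
```

The final check mirrors the requirement that all three maps define the same object_types, regardless of their order.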

Needed Services

/asr_object_database/object_meta_data

/asr_object_database/recognizer_list

Provided Services

Viewports:

PushViewport (push_viewport)

- viewport to push

EmptyViewportList (empty_viewport_list)

- remove all viewports of the given object_type. If object_type is "all" or "", all viewports will be removed

GetViewportList (get_viewport_list)

- get all viewports of the given object_type. If object_type is "all" or "", all viewports will be returned

FilterViewportDependingOnAlreadyVisitedViewports (filter_viewport_depending_on_already_visited_viewports)

- filters the object_type_name_list of the given viewport if there are other viewports which are approximately equal to the given one

- returns the filteredViewport and a flag isBeenFiltered that indicates whether there were approximately equal viewports
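The filtering behind FilterViewportDependingOnAlreadyVisitedViewports can be sketched roughly as below. This is an illustration under assumptions, not the service's code: the Viewport class is a simplified stand-in for the real message, the orientation is reduced to a unit view direction, and the helper names are hypothetical.

```python
import math
from dataclasses import dataclass, field

@dataclass
class Viewport:
    position: tuple
    direction: tuple  # unit view direction, a stand-in for the real orientation
    object_types: list = field(default_factory=list)

def angle_between(a, b):
    """Angle in radians between two unit vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return math.acos(max(-1.0, min(1.0, dot)))

def approx_equal(a, b, position_threshold, rad_threshold):
    return (
        math.dist(a.position, b.position) <= position_threshold
        and angle_between(a.direction, b.direction) <= rad_threshold
    )

def filter_viewport(candidate, visited, position_threshold, rad_threshold):
    """Drop object types already searched from an approximately equal viewport."""
    already_searched = set()
    found_similar = False
    for view in visited:
        if approx_equal(candidate, view, position_threshold, rad_threshold):
            found_similar = True
            already_searched.update(view.object_types)
    remaining = [t for t in candidate.object_types if t not in already_searched]
    return Viewport(candidate.position, candidate.direction, remaining), found_similar

visited = [Viewport((0.0, 0.0, 1.5), (1.0, 0.0, 0.0), ["object_type_1"])]
candidate = Viewport((0.02, 0.0, 1.5), (1.0, 0.0, 0.0), ["object_type_1", "object_type_2"])
filtered, is_been_filtered = filter_viewport(candidate, visited, 0.1, 0.2)
```

The two thresholds correspond to viewport_position_distance_threshold and viewport_orientation_rad_distance_threshold; in this example only object_type_2 remains to be searched from the candidate view.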

FoundObjects:

PushFoundObject (push_found_object)

- found object to push

PushFoundObjectList (push_found_object_list)

- found object_list to push

Empty (empty_found_object_list)

- removes all found objects and their sampled poses

GetFoundObjectList (get_found_object_list)

- get all found objects and their sampled poses if sampling is activated

VisualizeSampledPoses (visualize_sampled_poses)

- visualize all sampled poses for the object with the given object_type and object_id

CompletePatterns:

PushCompletePatterns (push_complete_patterns)

- completePatterns to push (CompletePattern.msg with patternName and confidence)

EmptyCompletePatterns (empty_complete_patterns)

- removes all completePatterns

GetCompletePatterns (get_complete_patterns)

- gets all completePatterns

CommonInformation:

GetMissingObjectList (get_missing_object_list)

- gets a list of all objects (type and id) which are missing (works with multiple instances of the same object)

GetRecognizerName (get_recognizer_name)

- gets the recognizer_name for the given object_type

GetAllObjectsList (get_all_objects_list)

- gets a list of all objects (type and id) and the scenePath (path to the database)

GetIntermediateObjectWeight (get_intermediate_object_weight)

- gets the intermediate_object_weight for the given object_type (see asr_intermediate_object_generator for more information)
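The note that GetMissingObjectList works with multiple instances of the same object can be illustrated with a small counting sketch. It assumes the missing list is the expected object_count minus the number of recognized instances per (type, id); the function name and data layout are hypothetical.

```python
from collections import Counter

def missing_objects(expected_counts, found_objects):
    """List every (object_type, object_id) instance that has not been found yet."""
    found = Counter(found_objects)
    missing = []
    for key, expected in expected_counts.items():
        missing.extend([key] * max(0, expected - found[key]))
    return missing

expected = {("object_type_1", "0"): 2, ("object_type_2", "0"): 1}
found = [("object_type_1", "0")]
missing = missing_objects(expected, found)
```

With two instances of object_type_1 expected and only one found, one instance of object_type_1 and the single object_type_2 are still reported as missing.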