| Note: This tutorial assumes that you have completed the previous tutorials: Intrinsic calibration of the Kinect cameras, Getting started with camera_pose_calibration. |

| |

Calibrating the Kinect depth camera to an external RGB camera

Description: This tutorial demonstrates how to register the Kinect depth image to an external RGB camera instead of the built-in one. This requires an extrinsic calibration, calculating the relative transform between the two cameras.Tutorial Level: INTERMEDIATE

Contents

Attaching an external RGB camera

The Kinect's built-in RGB camera is more than adequate for many purposes, but sometimes we want more. Higher-end cameras can offer better image quality. OpenNI offers little control over important camera features like exposure, gain, and white balance. If necessary, it is possible to register the Kinect's depth camera to an external RGB camera and build color point clouds using it instead of the built-in RGB camera.

See camera_drivers for a listing of cameras with existing ROS drivers.

First you must rigidly mount your chosen RGB camera to the Kinect, preferably as close as possible to the IR camera. The greater the distance between the two, the more problems you will have with occlusion (one camera sees things the other can't). Not surprisingly, the built-in RGB camera occupies the sweet spot between the IR camera and IR projector, where occlusion issues are minimized. You can get fairly close mounting directly above or below the IR camera, however.

Registration has two requirements:

- Intrinsic calibration - the internal parameters of both cameras (focal length, distortion, etc.).

- Extrinsic calibration - the locations of the cameras with respect to each other in space.

Intrinsic calibration

You should have calibrated the depth camera intrinsics in the previous tutorial.

Perform the monocular calibration of the external RGB camera as well.

Extrinsic calibration

We will use the camera_pose_calibration package to determine the relative poses of the two cameras based on mutual views of a checkerboard target. The transform will then be broadcast over tf. Make sure its stack is installed:

# Electric sudo apt-get install ros-electric-camera-pose # Fuerte sudo apt-get install ros-fuerte-camera-pose

See the camera_pose_calibration Getting Started guide for more information on the following steps, presented briefly here.

Create and bring up a launch file which:

- Opens the Kinect (and its various processing nodelets)

- Opens your external camera

Starts image_proc for the external camera

Starts the extrinsic tf publisher

<launch>

<!-- Bring up Kinect -->

<include file="$(find openni_launch)/launch/openni.launch">

<!-- Set cam info URLs, if not in the default location -->

</include>

<!-- BRING UP EXTERNAL CAMERA HERE -->

<!-- Also bring up image_proc for it, to get image_rect topic.

In Electric, assuming camera namespace /my_camera: -->

<node ns="my_camera" name="external_proc" pkg="image_proc" type="image_proc" />

<!-- In Fuerte, you can instead load image_proc as nodelets into the

OpenNI nodelet manager: -->

<include ns="my_camera" file="$(find image_proc)/launch/image_proc.launch">

<arg name="manager" value="/camera_nodelet_manager" />

</include>

<!-- Extrinsic transform publisher -->

<include file="$(find camera_pose_calibration)/blocks/calibration_tf_publisher.launch">

<arg name="cache_file" value="/some/path/kinect_extrinsics_cache.bag" />

</include>

</launch>The extrinsic publisher listens for the output of the optimization routine we're about to run, publishes the results to tf, and saves them to disk to be automatically loaded in the future.

As with the intrinsic calibration, make sure you get a clean (unspeckled) IR image. E.g. cover the IR projector with a Post-it.

Now follow the Running calibration instructions. The command will look something like:

# Replace the checkerboard dimensions and the /my_camera namespace roslaunch camera_pose_calibration calibrate_2_camera.launch camera1_ns:=/my_camera camera2_ns:=/camera/ir checker_rows:=4 checker_cols:=5 checker_size:=0.0249

Capture as many checkerboard poses as you like, but three is likely enough.

Creating color point clouds

openni.launch loads all of the depth_image_proc nodelets required to register the depth camera to the built-in RGB camera, if not using OpenNI's registration capability. Since we are adding a new camera to the system, we need to do a bit more work.

Fortunately we can reuse some of the machinery from openni_launch, which splits off the registration-related processing into its own launch file. Append the following to your launch file from before:

<launch>

...

<!-- Register depth to external RGB camera and create point clouds -->

<!-- Replace 'my_camera' with the correct namespace -->

<include file="$(find openni_launch)/launch/includes/depth_registered.launch">

<arg name="manager" value="/camera_nodelet_manager" />

<arg name="rgb" value="my_camera" />

<arg name="depth" value="camera/depth" />

<!-- New namespace 'my_camera_depth' contains the outputs -->

<arg name="depth_registered" value="my_camera_depth" />

<arg name="suffix" value="my_camera_depth" />

</include>

</launch>Make sure that OpenNI registration is disabled - uncheck the depth_registration box for /camera/driver using dynamic_reconfigure. Also make sure you have unblocked the IR projector - the Kinect won't see depth at all without it!

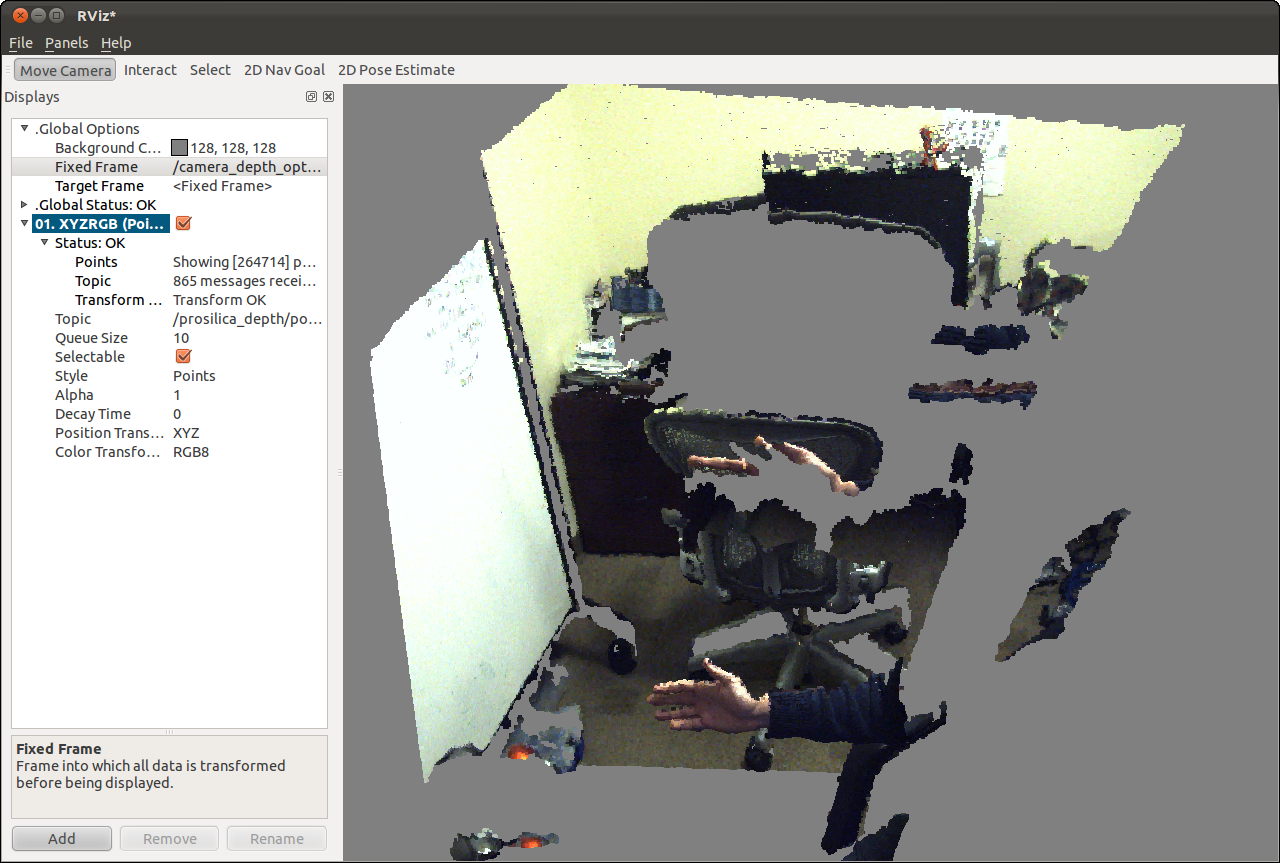

Now start rviz and open a PointCloud2 display for topic /my_camera_depth/points. Set the Fixed Frame to /camera_depth_optical_frame. You should see a lovely color point cloud. Cover the Kinect RGB camera with your thumb to verify it is not being used.

This screenshot was generated using the rig pictured at the top of the page, with the external camera mounted below the IR camera.

An unfortunate but instructive artifact is visible: the top of the hand is projected onto the back of the chair. This is because:

- That chair section is visible to the IR camera, but not to the external RGB camera. The hand blocks the RGB camera's view.

- The chair points are projected to where they would have appeared in the RGB image, which overlaps with the hand.

The depth_image_proc/register nodelet performs Z-buffering (prefers a nearer depth to a further one for the same RGB pixel), but...

- It fails here because the external camera is much higher resolution (5Mp) than the IR camera, allowing the further away chair points to "slip through the cracks" in the Z-buffering.

A nice project would be modifying depth_image_proc/register to densely interpolate the higher-resolution registered depth image.