
Kinect

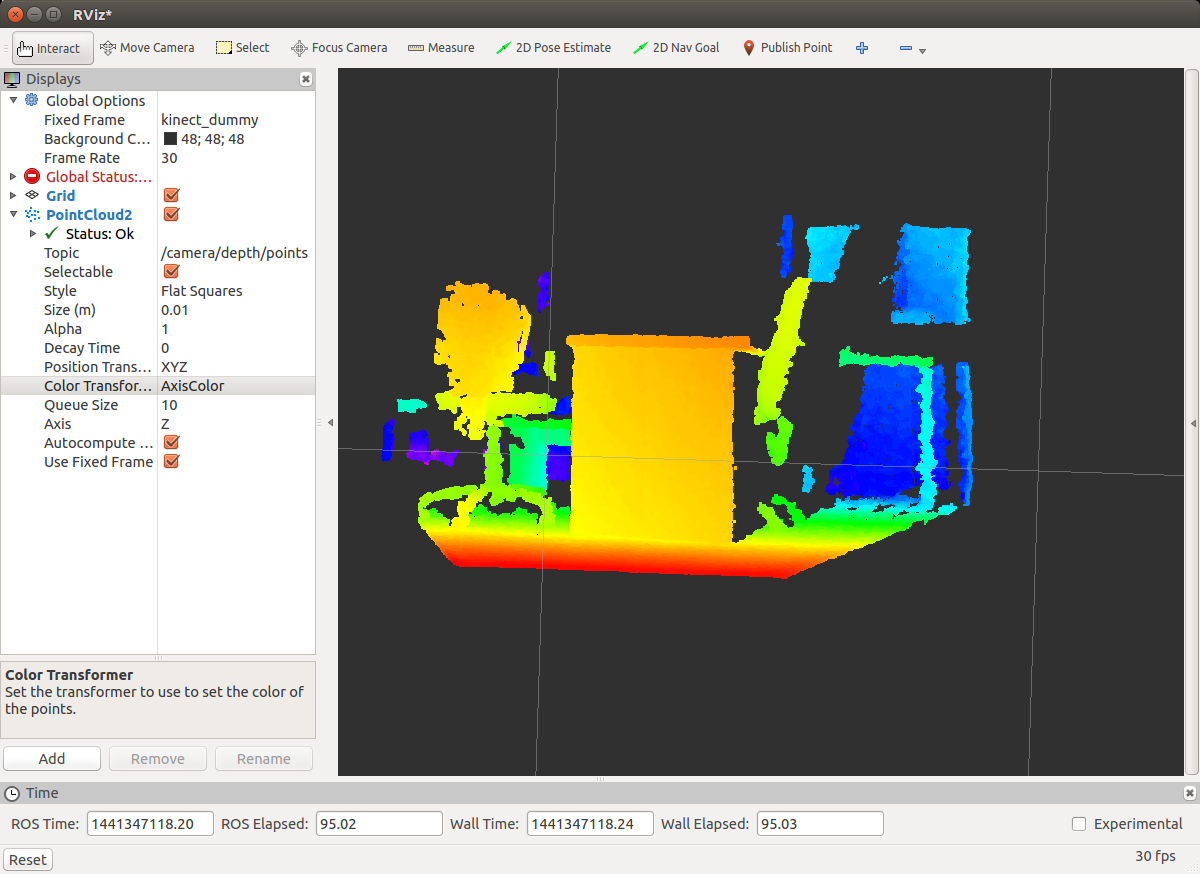

Description: Using Microsoft Kinect on the Evarobot.

Tutorial Level: BEGINNER

First, we install OpenNI and the Kinect driver.

Installing dependencies:

> sudo apt-get install g++ python libusb-1.0-0-dev freeglut3-dev

> sudo apt-get install doxygen graphviz mono-complete

> sudo apt-get install openjdk-7-jdk
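To confirm that all of these dependencies actually installed before starting the longer builds, a small bash helper like the one below can be pasted into a terminal. It is a minimal sketch, not part of the original tutorial; the function name `missing_pkgs` is hypothetical. It asks `dpkg-query` for each package's status and prints only the packages that are not fully installed.

```shell
# Print each package name given as an argument that dpkg does not report
# as fully installed; empty output means nothing is missing.
missing_pkgs() {
  local pkg status
  for pkg in "$@"; do
    # dpkg-query fails for unknown packages (or if dpkg is absent);
    # treat that as "missing" too.
    status=$(dpkg-query -W -f '${Status}' "$pkg" 2>/dev/null) || status=""
    case "$status" in
      *"install ok installed"*) ;;        # installed: print nothing
      *) printf '%s\n' "$pkg" ;;          # anything else counts as missing
    esac
  done
}
```

For example, `missing_pkgs g++ python libusb-1.0-0-dev freeglut3-dev doxygen graphviz mono-complete openjdk-7-jdk` should print nothing once the three apt-get commands have succeeded.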

Installing OpenNI:

> git clone https://github.com/OpenNI/OpenNI.git

> cd OpenNI

> git checkout Unstable-1.5.4.0

> cd Platform/Linux/CreateRedist

> sudo chmod +x RedistMaker

> ./RedistMaker

> cd ../Redist/OpenNI-Bin-Dev-Linux-[xxx]

> sudo ./install.sh

Replace [xxx] with the rest of the directory name that RedistMaker created in Platform/Linux/Redist.
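Rather than typing the exact Redist directory name, the directory can be located with a glob. The bash function below is a hypothetical helper (not part of OpenNI or this tutorial): it prints the single directory matching a pattern and fails loudly if there are zero or several matches, so the install script is never run from the wrong place.

```shell
# Print the one directory under BASE whose name matches the glob PATTERN.
# Fails with a message on stderr if there are zero or several matches.
find_one_dir() {
  local base=$1 pattern=$2 d
  local -a matches=()
  for d in "$base"/$pattern; do      # $pattern is left unquoted so it globs
    [ -d "$d" ] && matches+=("$d")   # keep directories only
  done
  if [ "${#matches[@]}" -ne 1 ]; then
    echo "expected one match for $base/$pattern, found ${#matches[@]}" >&2
    return 1
  fi
  printf '%s\n' "${matches[0]}"
}
```

From Platform/Linux/CreateRedist, after ./RedistMaker, one could run `dir=$(find_one_dir ../Redist 'OpenNI-Bin-Dev-Linux-*') && cd "$dir" && sudo ./install.sh`. The same call with the pattern 'Sensor-Bin-Linux-x64-v*' works for the Kinect driver's Redist directory.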

Installing the Kinect driver:

> git clone https://github.com/ph4m/SensorKinect.git

> cd SensorKinect/Platform/Linux/CreateRedist

> sudo chmod +x RedistMaker

> ./RedistMaker

> cd ../Redist/Sensor-Bin-Linux-x64-v*

> sudo ./install.sh
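Before launching anything in ROS, it can help to confirm that the Kinect is visible on the USB bus at all. The sketch below is a hypothetical helper that reads `lsusb` output on stdin; it assumes a Kinect for Xbox 360, whose camera reports USB ID 045e:02ae (Microsoft Corp.). Other Kinect models may report a different product ID.

```shell
# Read `lsusb` output on stdin; succeed if a Kinect for Xbox 360 camera
# (USB ID 045e:02ae, Microsoft Corp.) is listed.
has_kinect_camera() {
  grep -qi 'ID 045e:02ae'
}
```

Usage: `lsusb | has_kinect_camera && echo "Kinect camera found"`. If nothing is printed, check the Kinect's separate power supply and USB cable before debugging the driver.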

Now we install openni_launch, which provides launch files that open an OpenNI device and load the nodelets that convert the raw depth/RGB/IR streams into depth images, disparity images and (registered) point clouds.

> sudo apt-get install ros-indigo-openni-camera ros-indigo-openni-launch
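The conversions performed by these nodelets follow the standard pinhole-camera relations. As a sketch (the symbols are ours, not from this tutorial): with focal lengths $f_x, f_y$ and principal point $(c_x, c_y)$ in pixels from the camera calibration published on camera_info, a depth pixel $(u, v)$ with depth $Z$ in metres back-projects to a 3D point $(X, Y, Z)$, and disparity $d$ is inversely proportional to depth, with focal length $f$ and baseline $T$ (the f and T fields of stereo_msgs/DisparityImage):

$$
X = \frac{(u - c_x)\,Z}{f_x}, \qquad Y = \frac{(v - c_y)\,Z}{f_y}, \qquad d = \frac{f\,T}{Z}
$$

With purely illustrative values $f = 500$ px, $T = 0.075$ m and $Z = 1.5$ m, this gives $d = 500 \times 0.075 / 1.5 = 25$ px. The raw depth image typically stores $Z$ in millimetres as 16-bit integers, so it must be divided by 1000 before applying these formulas.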

To start the Kinect in ROS:

> roslaunch openni_launch openni.launch
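To check that openni.launch came up, one can compare the output of `rostopic list` against a few topics that openni_launch advertises by default. The bash helper below is a hypothetical sketch; it assumes the default camera namespace (camera) and prints any of the expected topics that are absent.

```shell
# Read a topic list (one name per line, as printed by `rostopic list`) on
# stdin and print each expected openni_launch topic that is absent.
# Assumes the default camera namespace "camera".
missing_topics() {
  local listing topic
  listing=$(cat)
  for topic in /camera/rgb/image_color /camera/depth/image_raw /camera/depth/points; do
    if ! printf '%s\n' "$listing" | grep -qxF -- "$topic"; then
      printf '%s\n' "$topic"
    fi
  done
}
```

Running `rostopic list | missing_topics` in a second terminal should print nothing. An advertised topic is not proof that data is flowing, so `rostopic hz /camera/depth/points` is a useful follow-up check.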

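To visualise the Kinect data, start RViz in a new terminal while openni.launch is running:

> rosrun rviz rviz

In RViz, set Fixed Frame (under Global Options) to camera_link, then add a PointCloud2 display and set its topic to /camera/depth/points.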
