Only released in EOL distros:

Package Summary

Depth Enhanced Monocular Odometry (camera and lidar version)

- Author: Ji Zhang

- License: BSD

- Source: git https://github.com/jizhang-cmu/demo_lidar.git (branch: None)

Package Summary

Depth Enhanced Monocular Odometry (camera and lidar version)

- Author: Ji Zhang

- License: LGPL

- Source: git https://github.com/jizhang-cmu/demo_lidar.git (branch: None)

Package Summary

Depth Enhanced Monocular Odometry (camera and lidar version)

- Maintainer: Ji Zhang <zhangji AT cmu DOT edu>

- Author: Ji Zhang

- License: LGPL

- Source: git https://github.com/jizhang-cmu/demo_lidar.git (branch: hydro)

Package Summary

Depth Enhanced Monocular Odometry (camera and lidar version)

- Maintainer: Ji Zhang <zhangji AT cmu DOT edu>

- Author: Ji Zhang

- License: LGPL

- Source: git https://github.com/jizhang-cmu/demo_lidar.git (branch: indigo)

The code is no longer publicly available. The technology is offered in commercial products from Kaarta.

Overview

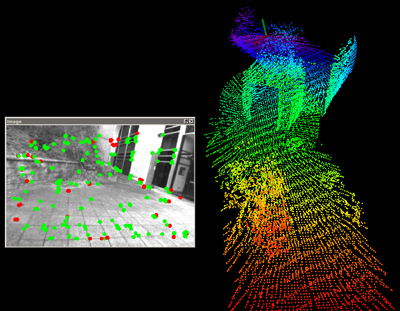

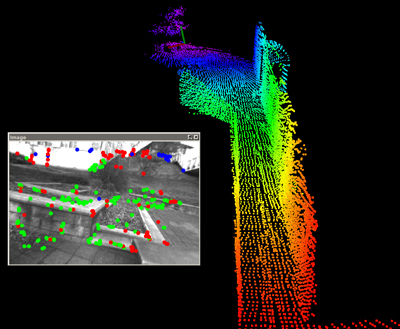

Depth Enhanced Monocular Odometry (Demo) is a monocular visual odometry method assisted by depth maps. The program contains three major threads running in parallel. A "feature tracking" thread extracts Harris corners and tracks them with the Kanade-Lucas-Tomasi (KLT) feature tracker. A "visual odometry" thread computes frame-to-frame motion using the tracked features. Features associated with depth (either from the depth map or triangulated from previously estimated camera motion) are used to solve the 6DOF motion, and features without depth help solve orientation. A "bundle adjustment" thread refines the estimated motion. It processes sequences of images received within a certain amount of time using the open source Incremental Smoothing and Mapping (iSAM) library.
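The different roles of features with and without depth can be illustrated with a small numerical sketch. It assumes the standard rigid-motion model X_k = R X_{k-1} + T between consecutive frames and normalized image coordinates; the function names are hypothetical and this is not the package's code. Under these assumptions, a feature whose 3D position is known in the previous frame yields two equations, while a feature without depth yields a single equation that constrains the rotation and only the direction of the translation, not its scale.

```python
import numpy as np


def rotation_z(angle):
    """Rotation matrix for a rotation of `angle` radians about the z axis."""
    c, s = np.cos(angle), np.sin(angle)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])


def residuals_with_depth(R, T, X_prev, x_curr):
    """Two residuals for a feature whose 3D position X_prev is known.

    x_curr = (x, y) are normalized image coordinates in the current frame.
    Eliminating the unknown current depth z from z * (x, y, 1) = R X_prev + T
    leaves two equations.
    """
    x, y = x_curr
    P = R @ X_prev + T
    return np.array([P[0] - x * P[2], P[1] - y * P[2]])


def residual_without_depth(R, T, x_prev, x_curr):
    """One residual for a feature with unknown depth in both frames.

    Eliminating both depths gives the epipolar constraint
    a . (T x (R b)) = 0, with a and b the homogeneous normalized coordinates
    in the current and previous frame.
    """
    a = np.array([x_curr[0], x_curr[1], 1.0])
    b = R @ np.array([x_prev[0], x_prev[1], 1.0])
    return float(a @ np.cross(T, b))


# Synthetic check with a made-up motion and a made-up 3D point.
R_true = rotation_z(0.05)
T_true = np.array([0.10, -0.02, 0.30])
X_prev = np.array([0.5, -0.2, 4.0])
X_curr = R_true @ X_prev + T_true
x_prev = X_prev[:2] / X_prev[2]
x_curr = X_curr[:2] / X_curr[2]

r_depth = residuals_with_depth(R_true, T_true, X_prev, x_curr)
r_nodepth = residual_without_depth(R_true, T_true, x_prev, x_curr)
# Scaling the translation leaves the depth-free residual at zero,
# which is why such features cannot fix the translation scale.
r_scaled = residual_without_depth(R_true, 3.0 * T_true, x_prev, x_curr)
```

With the true motion, all residuals vanish up to rounding. A solver would stack these residuals over all tracked features and minimize them over R and T.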

If an IMU is available, the orientation measurement (integrated from angular rate and acceleration) is used to correct roll and pitch drift, while the VO handles yaw and translation.
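One simple way to picture this division of labor is to take roll and pitch from the IMU and yaw from the VO, then compose them into a single orientation. The sketch below does that with a Z-Y-X (yaw-pitch-roll) Euler convention. The convention, the function names, and the outright replacement of roll and pitch (rather than a gradual correction) are assumptions made for illustration, not a description of the package's implementation.

```python
import math


def euler_to_matrix(roll, pitch, yaw):
    """Rotation matrix R = Rz(yaw) * Ry(pitch) * Rx(roll), as nested lists."""
    cr, sr = math.cos(roll), math.sin(roll)
    cp, sp = math.cos(pitch), math.sin(pitch)
    cy, sy = math.cos(yaw), math.sin(yaw)
    return [
        [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
        [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
        [-sp, cp * sr, cp * cr],
    ]


def matrix_to_euler(R):
    """Inverse of euler_to_matrix, valid while |pitch| < 90 degrees."""
    roll = math.atan2(R[2][1], R[2][2])
    pitch = -math.asin(R[2][0])
    yaw = math.atan2(R[1][0], R[0][0])
    return roll, pitch, yaw


def fuse_orientation(R_vo, R_imu):
    """Keep yaw from the VO estimate and roll/pitch from the IMU."""
    _, _, yaw_vo = matrix_to_euler(R_vo)
    roll_imu, pitch_imu, _ = matrix_to_euler(R_imu)
    return euler_to_matrix(roll_imu, pitch_imu, yaw_vo)


# Made-up example: the VO has drifted in roll and pitch, the IMU has not.
R_vo = euler_to_matrix(0.08, -0.05, 1.20)
R_imu = euler_to_matrix(0.01, 0.02, 0.90)
roll, pitch, yaw = matrix_to_euler(fuse_orientation(R_vo, R_imu))
```

The fused orientation carries the IMU's roll and pitch and the VO's yaw; the IMU's own yaw is discarded.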

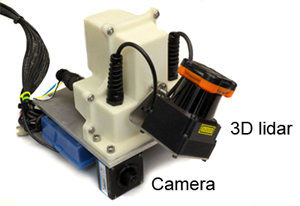

The program was tested on a laptop with a 2.5 GHz quad-core processor and 6 GiB of memory. It uses a camera and a point cloud perceived by a 3D lidar (see the following figure). Another version of the program using an RGBD camera is available here.

Usage

To run the program, users first need to install the packages required by the iSAM library, which the bundle adjustment uses:

sudo apt-get install libsuitesparse-dev libeigen3-dev libsdl1.2-dev

The code can be downloaded from GitHub or by following the link at the top of this page. The program is started with the ROS launch file (available in the downloaded folder), which runs the VO and rviz:

roslaunch demo_lidar.launch

Datasets are available for download at the bottom of this page. Please make sure the data files are for the camera and lidar (not RGBD camera) version. With the program running (from the launch file), users can play the data file:

rosbag play data_file_name.bag

On a slow computer, users can play the data file at a reduced rate, e.g. at half speed:

rosbag play data_file_name.bag -r 0.5

Datasets

NSH West no IMU (Video): visual odometry without using the IMU

NSH West with IMU (Video): visual odometry assisted by the IMU

Notes

Camera intrinsic parameters (K and D matrices) are defined in the "src/cameraParameters.h" file.
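For readers unfamiliar with the role of K, the sketch below shows how a pinhole intrinsic matrix maps between pixel coordinates and normalized image coordinates. The numeric values are made up and do not come from "src/cameraParameters.h", the function names are hypothetical, and lens distortion (the D coefficients) is ignored for brevity.

```python
import numpy as np

# Hypothetical intrinsics: focal lengths (fx, fy) and principal point (cx, cy).
fx, fy, cx, cy = 400.0, 400.0, 320.0, 240.0
K = np.array([[fx, 0.0, cx],
              [0.0, fy, cy],
              [0.0, 0.0, 1.0]])


def project(K, point_3d):
    """Project a 3D point in the camera frame to pixel coordinates (u, v)."""
    uvw = K @ np.asarray(point_3d, dtype=float)
    return uvw[:2] / uvw[2]


def normalize(K, pixel):
    """Convert pixel coordinates (u, v) to normalized coordinates (x, y)."""
    u, v = pixel
    xyw = np.linalg.solve(K, np.array([u, v, 1.0]))
    return xyw[:2] / xyw[2]


point = np.array([0.5, -0.25, 2.0])   # meters, camera frame
pixel = project(K, point)             # pixel location of the point
xy = normalize(K, pixel)              # equals point[:2] / point[2]
```

When switching to a different camera, K and D would need to be replaced with that camera's calibration values.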

It is possible to accelerate feature tracking with a GPU. To do this, replace the "src/featureTracking.cpp" file with the "src/featureTracking_ocl.cpp" file and recompile. OpenCL is used to communicate with the GPU. Users first need to install OpenCL, version 1.1 or later with full profile, and then build OpenCV with CMake using the flag WITH_OPENCL=ON.

References

J. Zhang, M. Kaess, and S. Singh. Real-time Depth Enhanced Monocular Odometry. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). Chicago, IL, Sept. 2014.