Author: Job van Dieten <job.1994@gmail.com>

Maintainer: Jordi Pages <jordi.pages@pal-robotics.com>

Support: tiago-support@pal-robotics.com

Source: https://github.com/pal-robotics/tiago_tutorials.git

Track Sequential (C++)

Description: A simple method to detect and track basic movements/shapes on a static camera against a static background

Keywords: OpenCV, image subtraction, ROS, PAL Robotics

Tutorial Level: BEGINNER

Next Tutorial: Corner Detection

Purpose

The purpose of the code is to demonstrate some basic OpenCV operations: the absdiff(), threshold(), morphologyEx() and findContours() functions.

Pre-Requisites

First, make sure that the tutorials are properly installed along with the TIAGo simulation, as shown in the Tutorials Installation Section.

Execution

Open three consoles and source the catkin workspace of the public simulation in each of them:

cd /tiago_public_ws/

source ./devel/setup.bash

In the first console launch the TIAGo robot in the Gazebo simulator using the following command

roslaunch tiago_gazebo tiago_gazebo.launch public_sim:=true end_effector:=pal-gripper world:=ball

TIAGo and a small ball floating in front of the robot will be spawned.

In the second console, run the animate_ball node, which moves the ball in a circular motion in front of the robot

rosrun tiago_opencv_tutorial animate_ball.py

Finally, in the last console run the track_sequential node as follows

rosrun tiago_opencv_tutorial track_sequential

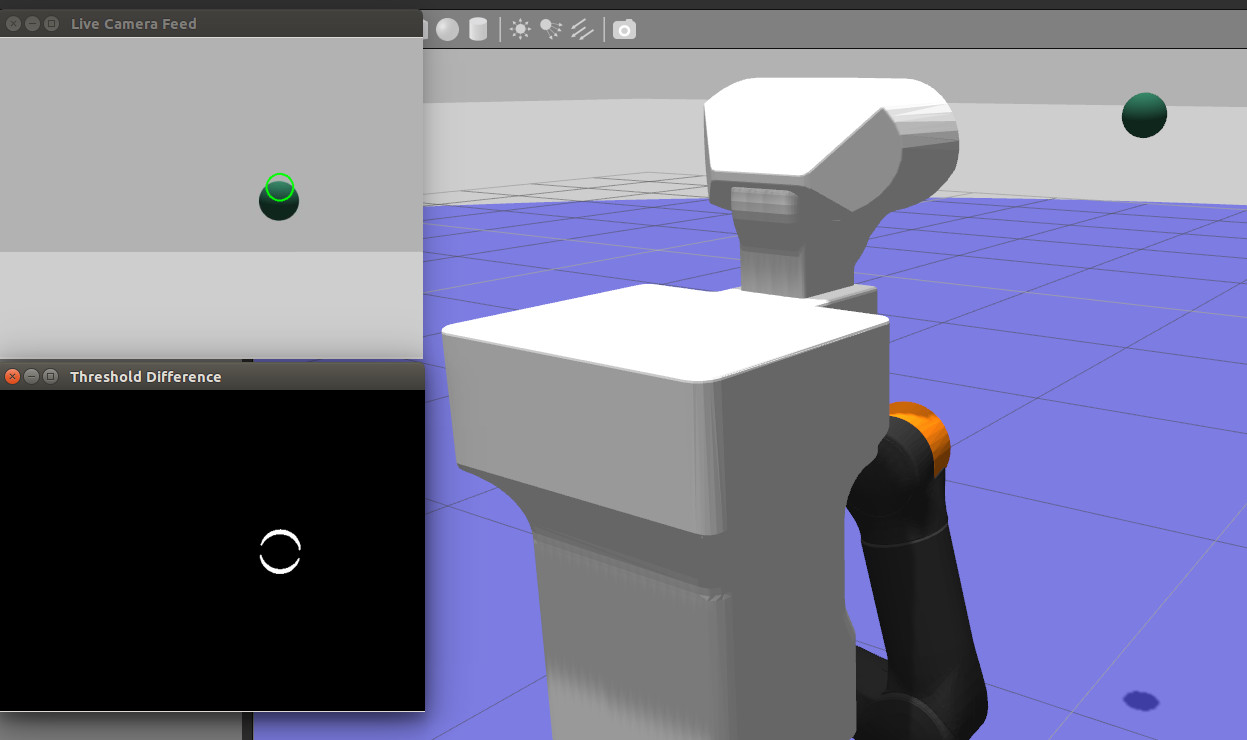

Two windows will appear (they may be on top of each other). The Live Camera Feed window shows the current image taken from the xtion/rgb/image_raw topic. The Threshold Difference window shows the difference between two sequential frames.

As can be seen, the motion of the ball is detected in the Threshold Difference window by subtracting consecutive images, while the Live Camera Feed window marks the ball position with a green circle.

Concepts & Code

There are three concepts used in this tutorial: absdiff, threshold & morphology, and findContours.

absdiff()

The absdiff function takes in three arguments.

cv::absdiff(img1, img2, diff);

All three arguments are of type cv::Mat, the matrix container of the OpenCV library. The image received from the camera topic is stored in such a matrix so that OpenCV functions can manipulate it. absdiff() computes the per-element absolute difference between two matrices. img1 is the current image and img2 is the image taken one frame earlier. Every pixel that has the same intensity in both images becomes 0 (visualized as black); every other pixel receives the absolute difference of the two intensities. The result is stored in the output matrix diff.
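The per-element rule described above can be sketched without OpenCV. The helper name absDiffSketch and the use of a flat 8-bit grayscale buffer are assumptions made for illustration only; this is not how cv::absdiff is implemented internally.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstdlib>
#include <vector>

// Hypothetical helper, not part of OpenCV: element-wise absolute difference
// of two equally sized 8-bit grayscale buffers.
std::vector<std::uint8_t> absDiffSketch(const std::vector<std::uint8_t>& current,
                                        const std::vector<std::uint8_t>& previous)
{
    // Both frames must have the same number of pixels.
    assert(current.size() == previous.size());

    std::vector<std::uint8_t> diff(current.size());
    for (std::size_t i = 0; i < current.size(); ++i) {
        // Widen to int so the subtraction cannot wrap around.
        const int delta = static_cast<int>(current[i]) - static_cast<int>(previous[i]);
        diff[i] = static_cast<std::uint8_t>(std::abs(delta));
    }
    return diff;
}
```

Pixels that did not change between the two frames come out as 0, so a static background turns black and only moving regions keep a non-zero intensity.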

threshold & morphology

threshold()

To track this movement, contours can be extracted from the difference image. For accuracy, however, it helps to exaggerate the difference between the two frames first. This can be done with the threshold() function of the OpenCV library.

cv::threshold(diff, thresh, 20, 255, cv::THRESH_BINARY);

The binary threshold function checks the intensity of each pixel in the matrix diff: if the value is greater than 20, it is set to the maximum value (255 in this case); otherwise it is set to 0. The resulting intensity values are stored in the thresh matrix.
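As a minimal sketch of the binary rule described above, the following standalone function applies it to a flat 8-bit buffer. The name binaryThresholdSketch is hypothetical and the code only mirrors the cv::THRESH_BINARY behaviour; it is not OpenCV's implementation.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical helper, not part of OpenCV: pixels strictly above `thresh`
// become `maxValue`, all other pixels become 0.
std::vector<std::uint8_t> binaryThresholdSketch(const std::vector<std::uint8_t>& src,
                                                std::uint8_t thresh,
                                                std::uint8_t maxValue)
{
    // This sketch expects a non-empty image.
    assert(!src.empty());

    std::vector<std::uint8_t> dst(src.size());
    for (std::size_t i = 0; i < src.size(); ++i) {
        dst[i] = (src[i] > thresh) ? maxValue : static_cast<std::uint8_t>(0);
    }
    return dst;
}
```

Note that a pixel exactly equal to the threshold is set to 0, because the comparison is strict.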

morphologyEx()

cv::morphologyEx(thresh, thresh, cv::MORPH_OPEN, cv::getStructuringElement(cv::MORPH_ELLIPSE, cv::Size(3, 3)));

Morphological operations erode and dilate the white regions of a thresholded image with a small shape called a kernel (structuring element). Here the output matrix is the same as the input matrix, namely thresh. The third parameter selects the operation: cv::MORPH_OPEN is the named constant for the integer value 2 used in the tutorial source and denotes opening (erosion followed by dilation), which removes small white specks (noise) from the thresholded image. Closing, which fills small black holes instead, would be cv::MORPH_CLOSE (value 3). The last parameter is the kernel, which is moved over the image and determines which neighbouring pixels are considered at each position. getStructuringElement() with cv::MORPH_ELLIPSE (also the value 2) returns an elliptical kernel of size 3 by 3.
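To illustrate how erosion followed by dilation removes isolated noise pixels, here is a minimal sketch on a small binary image. It makes several assumptions that differ from the tutorial: it uses a full 3 by 3 square kernel instead of an elliptical one, it ignores neighbours that fall outside the image, and the names Image, morphPass and openSketch are hypothetical.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

using Image = std::vector<std::vector<std::uint8_t>>;

// One 3x3 pass over a binary image (0 or 255).
// erode == true : a pixel stays white only if every in-bounds neighbour is white.
// erode == false: a pixel becomes white if any in-bounds neighbour is white (dilation).
Image morphPass(const Image& src, bool erode)
{
    assert(!src.empty());
    const int rows = static_cast<int>(src.size());
    const int cols = static_cast<int>(src[0].size());
    Image dst(src.size(), std::vector<std::uint8_t>(src[0].size(), 0));

    for (int r = 0; r < rows; ++r) {
        for (int c = 0; c < cols; ++c) {
            bool allWhite = true;
            bool anyWhite = false;
            for (int dr = -1; dr <= 1; ++dr) {
                for (int dc = -1; dc <= 1; ++dc) {
                    const int rr = r + dr;
                    const int cc = c + dc;
                    // Assumption of this sketch: out-of-image neighbours are skipped.
                    if (rr < 0 || rr >= rows || cc < 0 || cc >= cols) continue;
                    if (src[rr][cc] != 0) anyWhite = true;
                    else allWhite = false;
                }
            }
            dst[r][c] = (erode ? allWhite : anyWhite) ? 255 : 0;
        }
    }
    return dst;
}

// Opening: erosion followed by dilation.
Image openSketch(const Image& src)
{
    return morphPass(morphPass(src, true), false);
}
```

A single white pixel does not survive the erosion step, so it is gone after opening, while a region at least as large as the kernel is shrunk and then grown back to its original extent.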

Using these two operations, the difference between the current and the previous frame is calculated and visualized in the Threshold Difference window.

findContours()

cv::findContours(temp, contours, CV_RETR_EXTERNAL, CV_CHAIN_APPROX_SIMPLE);

This function takes the threshold image and writes the contours it detects into an array of contours. Each contour is described by a series of points along its outline, so the array of contours is an array of arrays of points:

std::vector<std::vector<cv::Point> > contours;

The third parameter is the contour retrieval mode; here CV_RETR_EXTERNAL means that only the outermost contours are found. The last parameter is the contour approximation method. CV_CHAIN_APPROX_SIMPLE compresses horizontal, vertical and diagonal segments and keeps only their end points, so a simple geometric shape is reduced to its corners.
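The idea behind reducing a shape to its corners can be sketched as follows. This is an illustration under stated assumptions, not OpenCV's implementation: the contour is assumed to be closed, consecutive points are assumed to be neighbouring pixels, and the names Pt and compressStraightRuns are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

struct Pt { int x; int y; };

// Hypothetical helper: keep only those points of a closed contour at which
// the step direction changes, i.e. the end points of straight runs.
std::vector<Pt> compressStraightRuns(const std::vector<Pt>& contour)
{
    const std::size_t n = contour.size();
    if (n < 3) return contour;  // nothing to compress

    std::vector<Pt> kept;
    for (std::size_t i = 0; i < n; ++i) {
        const Pt& prev = contour[(i + n - 1) % n];
        const Pt& cur  = contour[i];
        const Pt& next = contour[(i + 1) % n];

        const int inDx  = cur.x - prev.x;
        const int inDy  = cur.y - prev.y;
        const int outDx = next.x - cur.x;
        const int outDy = next.y - cur.y;

        // Assumption of this sketch: consecutive points are 8-connected neighbours.
        assert(std::abs(outDx) <= 1 && std::abs(outDy) <= 1);

        // A point in the middle of a straight run has identical incoming
        // and outgoing steps and can be dropped.
        if (inDx != outDx || inDy != outDy) {
            kept.push_back(cur);
        }
    }
    return kept;
}
```

For the eight border pixels of a 3 by 3 square, only the four corner points remain, which is why the approximated contour of a rectangle needs so few points.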

To display the circle at the center of the moving object, the contour found is bounded by a rectangle, and the center point of this is calculated. This point is then passed on as the center point of a circle, using the OpenCV circle() method.

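// Wrap the last contour in the list; the tutorial treats it as the largest one.
std::vector<std::vector<cv::Point> > largest_contour;
largest_contour.push_back(contours.at(contours.size()-1));  // throws if contours is empty
// Bounding rectangle around that contour.
objectBoundingRectangle = cv::boundingRect(largest_contour.at(0));
// Center of the bounding rectangle.
int x = objectBoundingRectangle.x+objectBoundingRectangle.width/2;
int y = objectBoundingRectangle.y+objectBoundingRectangle.height/2;
// Draw a green circle (radius 20, line thickness 2) at the center.
cv::circle(output_,cv::Point(x,y),20,cv::Scalar(0,255,0),2);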
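The bounding-rectangle and center computation can also be sketched without OpenCV. The types Pt and Rect and the functions boundingRectSketch and rectCenter are hypothetical stand-ins; the inclusive width and height (the +1) are assumed here to match the convention of cv::boundingRect.

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

struct Pt { int x; int y; };
struct Rect { int x; int y; int width; int height; };

// Hypothetical helper: axis-aligned bounding rectangle of a set of points.
Rect boundingRectSketch(const std::vector<Pt>& contour)
{
    // A bounding rectangle is undefined for an empty contour.
    assert(!contour.empty());

    int minX = contour[0].x, maxX = contour[0].x;
    int minY = contour[0].y, maxY = contour[0].y;
    for (const Pt& p : contour) {
        minX = std::min(minX, p.x);
        maxX = std::max(maxX, p.x);
        minY = std::min(minY, p.y);
        maxY = std::max(maxY, p.y);
    }
    // Inclusive pixel extent, hence the +1.
    return Rect{minX, minY, maxX - minX + 1, maxY - minY + 1};
}

// Center of the rectangle, using the same integer arithmetic as the tutorial code.
Pt rectCenter(const Rect& r)
{
    return Pt{r.x + r.width / 2, r.y + r.height / 2};
}
```

Because the division is an integer division, the center is rounded down to a whole pixel, which is sufficient for drawing the marker circle.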

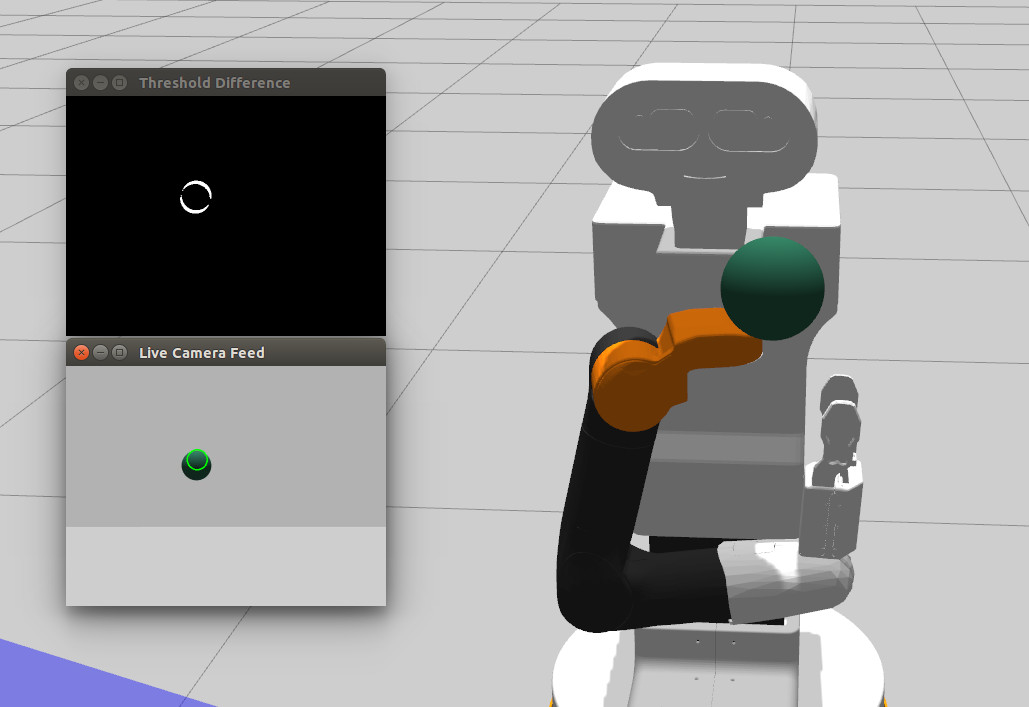

Example

This image was taken with the TIAGo camera in the PAL Robotics office while a ball was thrown in front of the camera. Two balls appear in the Threshold Difference window because of the camera frame rate and the way the two frames are compared: between the two frames the ball moved from the lower position to the higher one, so the difference image shows both locations.
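Why one moving object yields two blobs can be reproduced on a single row of pixels. The sketch below is an illustration only; the function name countMotionBlobs and the one-dimensional "image" are assumptions, and the threshold of 20 is taken from the threshold() call above.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>
#include <vector>

// Hypothetical helper: count the runs of consecutive pixels whose absolute
// difference between two frames exceeds `thresh`.
int countMotionBlobs(const std::vector<int>& previous,
                     const std::vector<int>& current,
                     int thresh)
{
    assert(previous.size() == current.size());

    int blobs = 0;
    bool insideBlob = false;
    for (std::size_t i = 0; i < current.size(); ++i) {
        const bool changed = std::abs(current[i] - previous[i]) > thresh;
        if (changed && !insideBlob) {
            ++blobs;  // a new run of changed pixels starts here
        }
        insideBlob = changed;
    }
    return blobs;
}
```

With a bright object at pixels 1 and 2 in the previous frame and at pixels 5 and 6 in the current frame, both the place the object left and the place it arrived at differ from the other frame, so the function reports two blobs. If the two frames are identical, it reports none.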
