New in Diamondback

Only released in EOL distros:

Package Summary

A package that allows a remote user to request and assist with grasping and manipulation tasks, primarily using an rviz display.

- Author: Matei Ciocarlie

- License: BSD

- Repository: wg-ros-pkg

- Source: svn https://code.ros.org/svn/wg-ros-pkg/stacks/pr2_tabletop_manipulation_apps/tags/pr2_tabletop_manipulation_apps-0.4.5

Package Summary

A package that allows a remote user to request and assist with grasping and manipulation tasks, primarily using an rviz display.

- Author: Matei Ciocarlie, Kaijen Hsiao, Adam Leeper

- License: BSD

- Source: svn https://code.ros.org/svn/wg-ros-pkg/stacks/pr2_object_manipulation/branches/0.5-branch

pr2_object_manipulation: active_realtime_segmentation | fast_plane_detection | manipulation_worlds | object_recognition_gui | object_segmentation_gui | pick_and_place_demo_app | pr2_create_object_model | pr2_grasp_adjust | pr2_gripper_grasp_controller | pr2_gripper_grasp_planner_cluster | pr2_gripper_reactive_approach | pr2_gripper_sensor_action | pr2_gripper_sensor_controller | pr2_gripper_sensor_msgs | pr2_handy_tools | pr2_interactive_gripper_pose_action | pr2_interactive_manipulation | pr2_interactive_object_detection | pr2_manipulation_controllers | pr2_marker_control | pr2_navigation_controllers | pr2_object_manipulation_launch | pr2_object_manipulation_msgs | pr2_pick_and_place_demos | pr2_pick_and_place_tutorial | pr2_tabletop_manipulation_launch | pr2_wrappers | rgbd_assembler | robot_self_filter_color | segmented_clutter_grasp_planner | tabletop_collision_map_processing | tabletop_object_detector | tf_throttle

Package Summary

A package that allows a remote user to request and assist with grasping and manipulation tasks, primarily using an rviz display.

- Author: Matei Ciocarlie, Kaijen Hsiao, Adam Leeper

- License: BSD

- Source: svn https://code.ros.org/svn/wg-ros-pkg/stacks/pr2_object_manipulation/branches/0.6-branch

Package Summary

A package that allows a remote user to request and assist with grasping and manipulation tasks, primarily using an rviz display.

- Author: Matei Ciocarlie, Kaijen Hsiao, Adam Leeper

- License: BSD

- Source: git https://github.com/ros-interactive-manipulation/pr2_object_manipulation.git (branch: groovy-devel)

Overview

The PR2 interactive manipulation tool is designed to allow a remote user to execute grasping and placing tasks using a PR2 robot. It interfaces with the user via the rviz visualization engine. It is designed to make the task of picking up and placing an object without colliding with the environment as easy for the operator as possible.

There are two main components to executing a remote grasping or placing task:

- the PR2 Interactive Manipulation window, described here. This window allows the user to request the execution of the task and to acquire collision maps used for collision-free execution of the task. It also contains an interface for a number of simple arm movement primitives, provided here for convenience. Think of it as "high-level mission control" for grasping and placing.

- the Gripper Click interface, contained in the pr2_gripper_click package. This interface allows the operator to specify the desired grasp point in a scene. Think of it as "low-level information gathering" for grasping and placing.

In addition, you can use the pr2_interactive_object_detection tools to perform tasks such as object segmentation and recognition. Once an object is segmented or recognized, it is often the case that it can be picked up autonomously. The results of the pr2_interactive_object_detection tools will automatically show up in the interactive manipulation controls, as described below.

The documentation on this page will show you how to use these tools to execute remote grasping and placing tasks on the PR2.

Starting the PR2 Interactive Manipulation Tool

You will have to bring up the components of the PR2 Interactive Manipulation tool both on the robot you are teleoperating and on the desktop you are using.

On the robot

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_robot.launch

On the desktop

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_desktop.launch

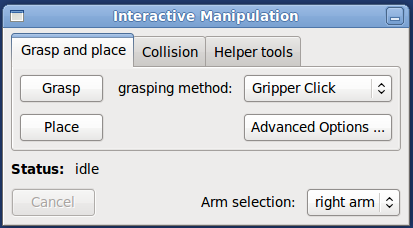

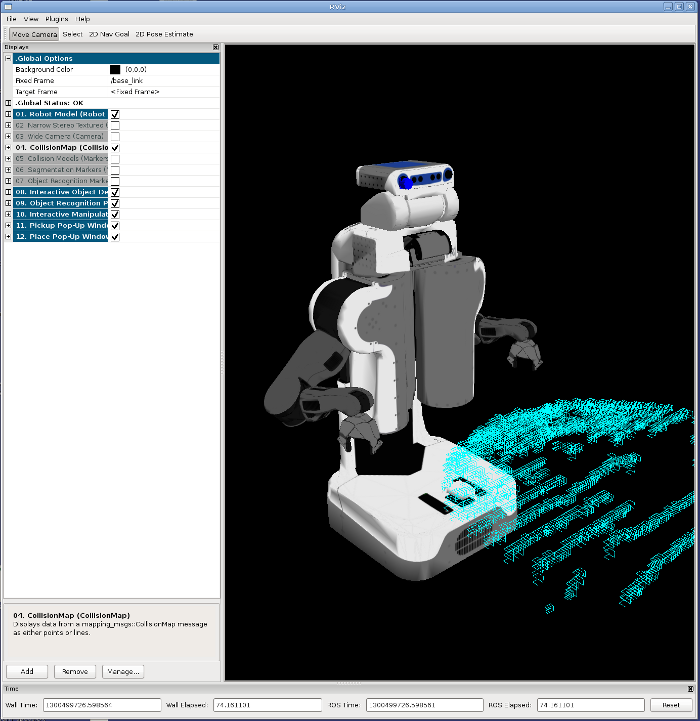

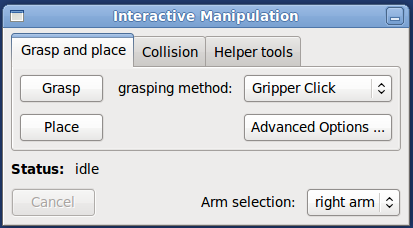

Note that this launch file will bring up rviz. The PR2 Interactive Manipulation tools are implemented as rviz plugins. In particular, you will see the Interactive manipulation dialog pop up:

The launch file above will also instantiate all the other rviz displays that you need, although some of them will be disabled on launch. For example, it makes sure rviz contains all the display types from the pr2_interactive_object_detection package.

If you do not use the launch file above, but simply start rviz and then create the displays yourself, the PR2 Interactive Manipulation window will show up and grasping and placing functionality will still work, but the convenience functions provided for arm movement will not.

Also on the desktop, you can optionally attach a PS3 joystick (not the one paired with the robot) directly via a USB cable. The launch files above will also bring up the standard PR2 joystick teleop node and make it listen to the joystick connected to your desktop.

Using the PR2 Interactive Manipulation Tool

General setup

Drive the robot within reach of the objects you are trying to manipulate. Note that base positioning is generally outside the scope of this package which is dedicated to manipulation. However, for convenience, the launch files above also start a joystick teleop package using the joystick plugged into your desktop computer.

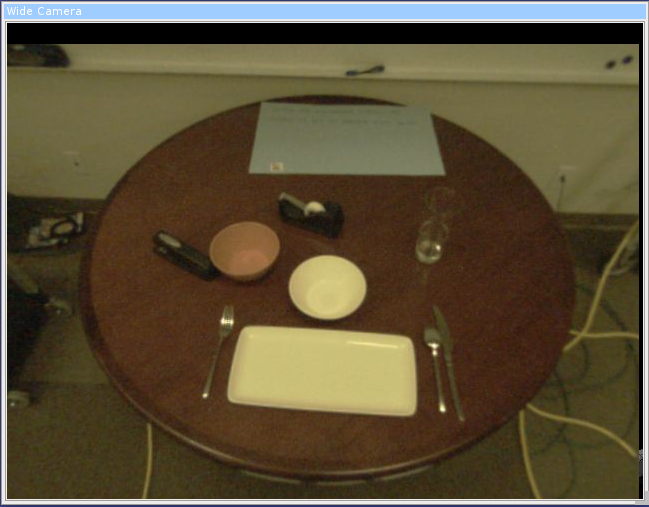

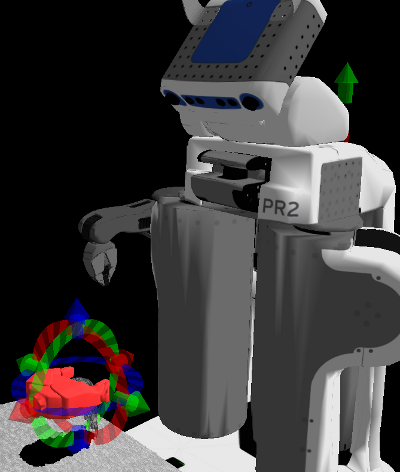

Here is an example of robot positioning, which was used to get the screenshots in these examples:

In general, the view from the robot's wide angle camera is very useful for remote operation. The interactive manipulation launch file will automatically create the rviz display to view it; make sure it is enabled so you get a video stream:

Collision map acquisition

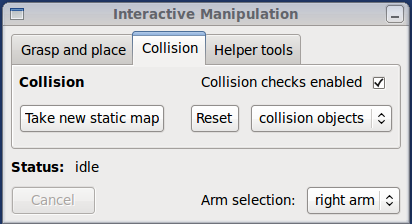

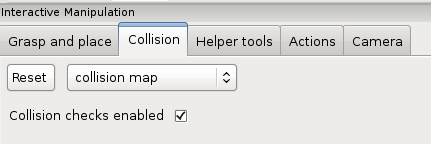

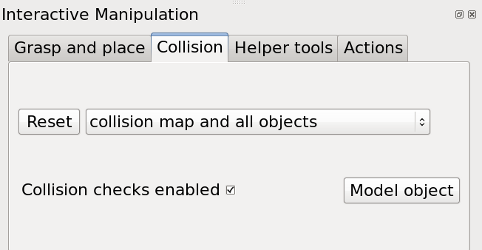

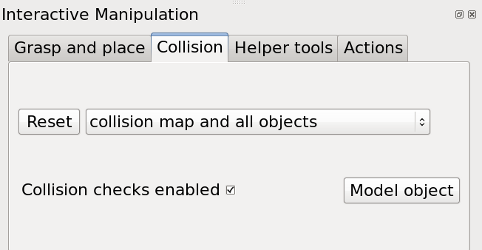

It is recommended to acquire a new collision map after you are done positioning the base of the robot for grasping, but before you attempt to grasp or place an object. Use the Collision tab of the Interactive Manipulation dialog:

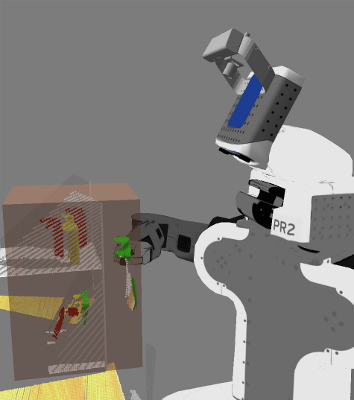

Simply click the "Take new static map" button and the robot will acquire a new map from the tilting laser. To see it in rviz, enable the Collision Map plugin (which should already be in your rviz displays list as the interactive manipulation launch file automatically loads it on startup). The map will show up as blue-ish boxes:

Note that:

- the arms of the robot might occlude parts of the environment

- occasionally, the robot sees its own body as an obstacle (when the self-filter fails to eliminate it from the scan). Such ghost obstacles will prevent arm motion, so it is recommended to get the arms out of the way (see section below on arm movement) and acquire a new map

Grasping and placing

Use the Grasp and place tab of the Interactive Manipulation dialog:

The grasping method drop down box offers two options:

- gripper click is the default, and always available. This will allow you to manually choose a grasp point, using the tools described in the pr2_gripper_click package.

- object selection is only available if an object in the scene has been either segmented or recognized, using the Interactive object detection dialog which calls the tools described in the pr2_interactive_object_detection package.

If you choose this option, you will only have to choose which object from the scene you want to grasp, and the robot will plan a grasp automatically.

Depending on which method above you choose, you will get a pop-up window requiring your input. Once the input is confirmed, the grasp execution should continue. If all goes well, the arm will move and pick up the object. If not, you should get an informative error message in the status area of the Interactive Manipulation window.

For placing, use the Place button. For now, the only selection for choosing a place location is the one described in the pr2_gripper_click package.

Arm movement

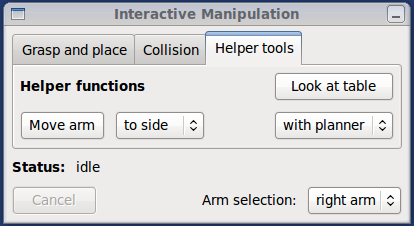

For convenience, the Interactive Manipulation tool also provides some arm movement functionality. Use the Helper tools tab of the Interactive manipulation dialog:

You can move the arms to two pre-defined positions:

- side position, great for taking collision maps and other sensor data with the arms out of the way. Bad for navigation, as the robot won't fit through doors or narrow corridors.

- front position, with the arms in front of the robot. Meant for driving around while holding an object.

You can move the arm to these positions in two ways:

- using motion planner: the robot will attempt to plan and execute a collision-free path to the destination. Uses the current collision map, acquired as described earlier. Note that occasionally the robot gets into states where the motion planner will not work (such as perceived collisions with the environment or joint angles beyond legal limits). If this happens, use the option below.

- open-loop: the robot will execute a pre-defined joint trajectory to get the arm to the desired position. No collision checking is performed, so the arm might hit the environment. This option is useful for getting the robot out of a stuck position that the planner will not plan out of.

Package Summary

A package that allows a remote user to teleoperate the PR2 to perform grasping/manipulation, navigation, and perception tasks, using an rviz display.

- Author: Matei Ciocarlie, Kaijen Hsiao, Adam Leeper

- License: BSD

- Repository: wg-ros-pkg

Contents

- Package Summary

- Overview

- Starting the PR2 Interactive Manipulation Tool

- Using the PR2 Interactive Manipulation Tool

- Moving arms to the side/above/front

- Taking snapshots and positioning the base for manipulation

- Moving the torso up/down

- Grasping segmented objects

- Grasping recognized objects

- Manually specifying a grasp pose

- Real-time gripper control

- Posture control

- Opening and closing the gripper

- Placing

- Planned Moves

- Resetting collision representations

- Advanced Options

- Movies

Overview

The PR2 interactive manipulation interface is designed to allow a remote user to teleoperate a PR2 robot, with tools for manipulation, perception, and navigation that have varying levels of autonomous assistance. It interfaces with the user via the rviz visualization engine. It is designed to make the task of picking up and placing an object without colliding with the environment as easy for the operator as possible.

This tutorial also includes use of the tools contained in pr2_interactive_object_detection, which allow you to perform tasks such as object segmentation and recognition. Once an object is segmented or recognized, it is often the case that it can be picked up autonomously. The results of the pr2_interactive_object_detection tools will automatically show up in the interactive manipulation controls, as described below.

The documentation on this page will show you how to use these tools to execute remote grasping and placing tasks on the PR2.

Starting the PR2 Interactive Manipulation Tool

You will have to bring up the components of the PR2 Interactive Manipulation tool both on the robot you are teleoperating and on the desktop you are using.

On the robot

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_robot.launch

Note that the default is to use a Kinect mounted on the top of the robot's head, with frames starting with /openni. For a description of some common launch args to do things like adding base navigation, using the narrow stereo instead of a Kinect, or changing the Kinect frames used, see pr2_interactive_manipulation/launch_args.

On the desktop

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_desktop.launch

Note that this launch file will bring up rviz. The PR2 Interactive Manipulation tools are implemented as rviz plugins. The launch file above will instantiate all the rviz displays that you need. For example, it makes sure rviz contains all the display types from the pr2_interactive_object_detection package.

If you do not use the launch file above, you can also simply start rviz and then create the displays yourself.

Also on the desktop, you can optionally attach a PS3 joystick (not the one paired with the robot) directly via a USB cable. The launch files above will also bring up the standard PR2 joystick teleop node and make it listen to the joystick connected to your desktop.

Using the PR2 Interactive Manipulation Tool

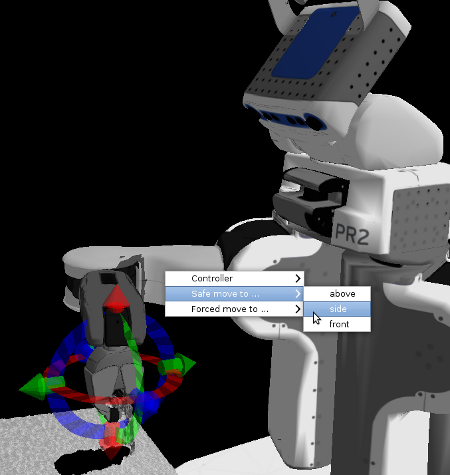

Moving arms to the side/above/front

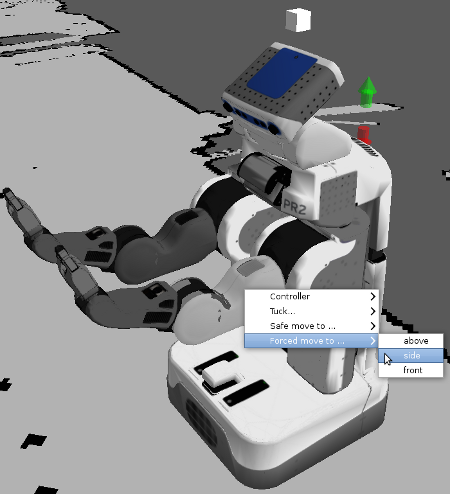

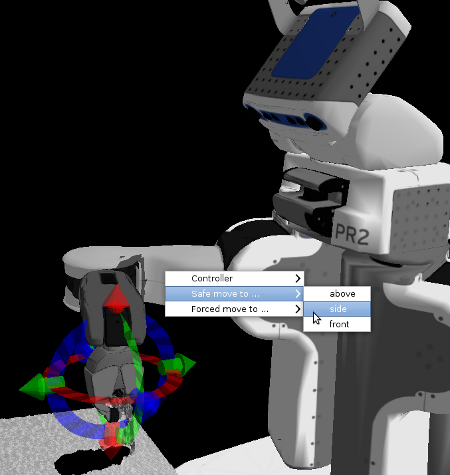

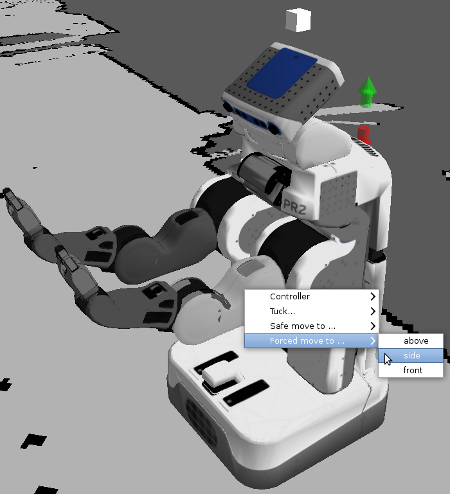

For most manipulation tasks we want the arms out of the way to start, so that we have a clear view of what's going on. To get the arms to go off to the side, in rviz interact mode, right-click on the bicep of the arm you wish to move, and select either 'Safe move to ...' or 'Forced move to...', and then 'side', as shown below:

Safe move refers to trying to use the motion planners to move the arm; this is useful when the arm might hit something on its way to the side. If there is clearly nothing in the way of the arm getting to the side, selecting Forced move instead will send it to the side faster, but with no consideration of possible obstacles. (Forced move is also useful in times when the motion planners for whatever reason refuse to move the arm.)

You can also ask the arms to move to the 'above' or 'front' positions; experiment with those when the robot is in free-space to see what those positions look like. 'Front' is often useful for driving around while holding objects, although because the arms are not within the footprint of the robot, you should not use autonomous navigation while doing so.

Taking snapshots and positioning the base for manipulation

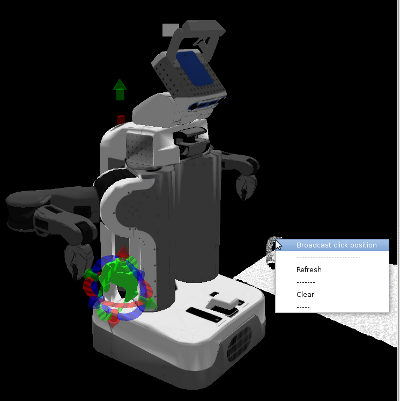

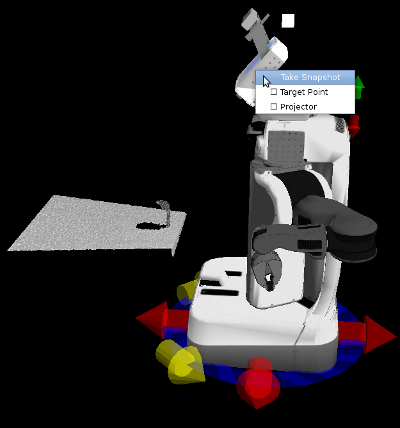

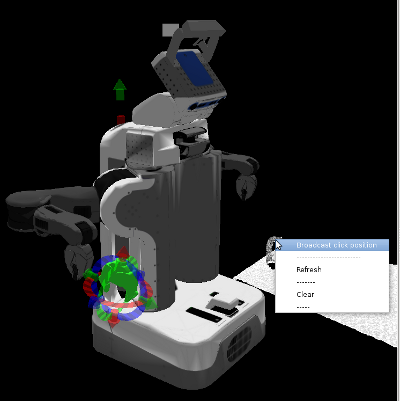

If you are trying to manipulate objects on a table, you typically want the base to be fairly close to the table, and in many cases, ideally, with the front edge of the base lined up with the edge of the table. To drive the base up to the table, it is usually good to take a snapshot with the stereo camera first, by right-clicking on the head and selecting 'Take Snapshot', as shown below:

The above picture also shows approximately what the scene looks like after you've used the manual/incremental base control arrows to drive up to the edge of the table, using your taken snapshot as a guide.

Moving the torso up/down

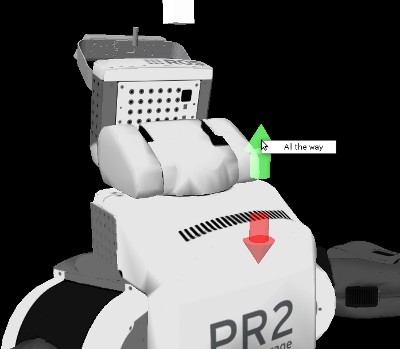

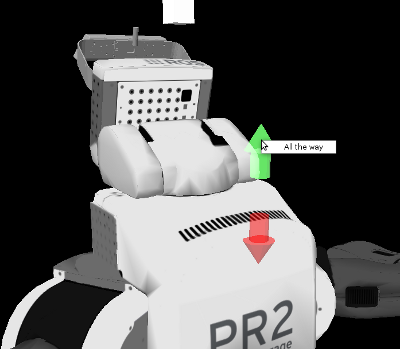

In real life, most tables are at a height where we need the torso to be all the way up in order to reach most objects. To move the torso up or down, use the arrows on the back of the PR2:

If you right-click either arrow, you can select 'All the way', which will cause the torso to continue all the way to the top or bottom position without you continuing to hold down the button. While it is moving all the way up or down, click the opposite arrow briefly to stop it in place.

In this simulation, however, the table happens to be fairly low, and so we can manipulate objects even with the torso down.

Grasping segmented objects

Now that we've driven up to the table and adjusted our torso height appropriately, we should take a new snapshot with the head (by either right-clicking on the head and selecting 'Take Snapshot' again, or by right-clicking on the point cloud and selecting 'Refresh'). This is because the robot's driving odometry is only so accurate, so the old point cloud is unlikely to be in exactly the right place relative to the robot.

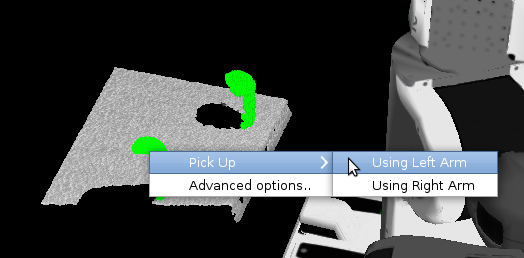

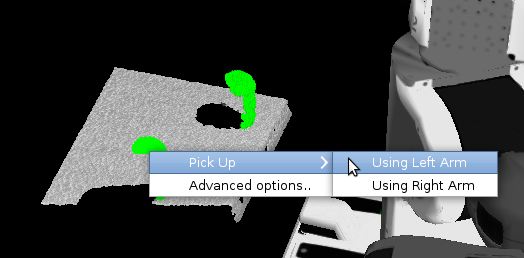

Once we have a new snapshot, if we are dealing with well-separated objects on a tabletop, we can use our segmentation algorithms to find object clusters to pick up autonomously. Click 'Segment' in the Interactive Object Detection window. If segments are found, it will report how many in the status bar of that window, and the segments will appear as green clusters. To pick up a green cluster, right-click on the desired cluster and select 'Pick Up' and the appropriate arm to use:

If the cluster planner successfully finds a non-colliding/feasible grasp from the top or side of the object, the robot will go ahead and execute that grasp.

Grasping recognized objects

If the object happens to be one in the object database (in this simulation, the soda cans are recognizable objects; the mug is not), after the Segment step, instead of grasping a cluster, you can additionally hit the 'Recognize' button. If the robot recognizes one of the segmented clusters as an object in its object database, it will replace the green cluster with a green object mesh for that object. You can also right-click on recognized objects and ask the robot to pick them up:

The advantage of doing so is that the robot has more varied grasps for recognized objects stored in its database, so it may be able to pick up some objects that the cluster planner may fail on.

Manually specifying a grasp pose

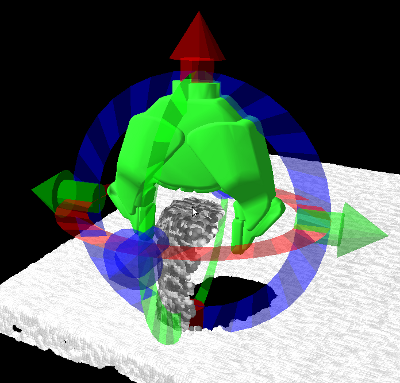

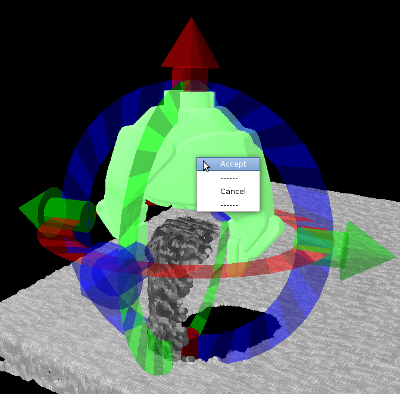

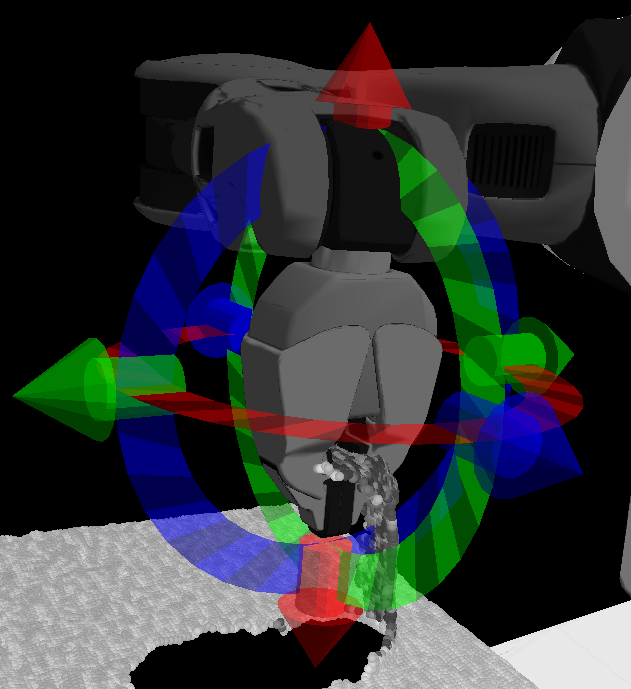

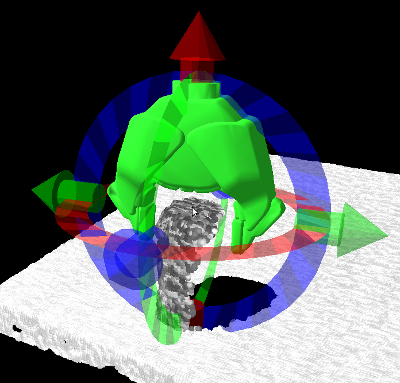

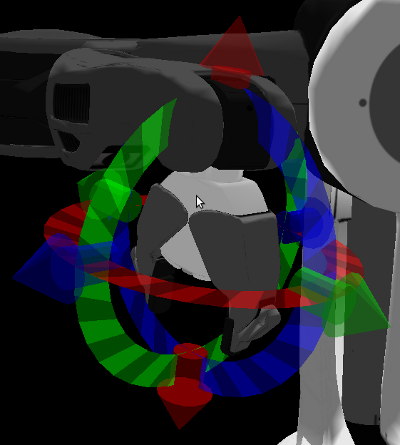

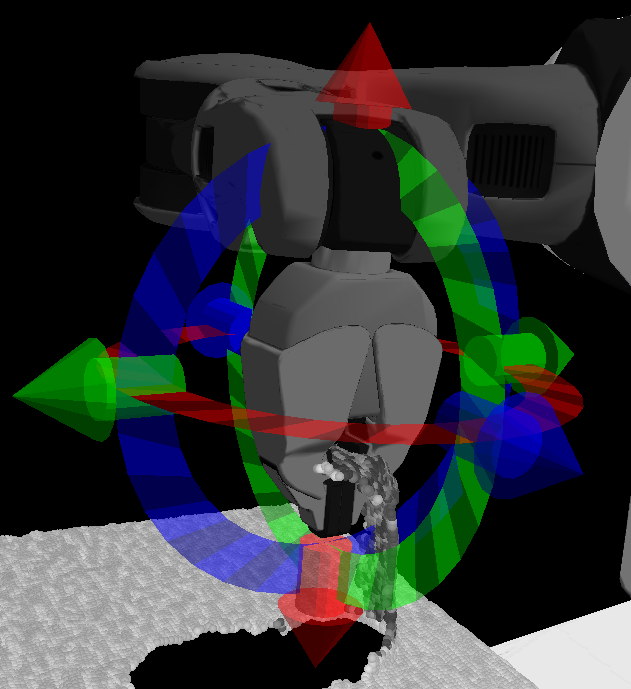

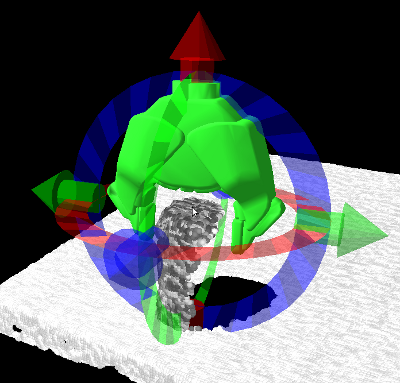

For objects in clutter, or for which the autonomous grasp planners fail, you can manually specify a grasp to execute. To do so, in the Interactive Manipulation window, select the arm you wish to use in the bottom right, and then click the 'Grasp' button. A disembodied representation of the gripper (that will hopefully turn green in a moment) should show up at the current gripper pose for that arm, surrounded by rings and arrows:

This disembodied gripper control can be used to specify a desired final grasp pose for the arm. Move the controls around by left-clicking and dragging the arrows to move back and forth along each axis, and rotate them around the three axes by left-click-dragging the three rings.

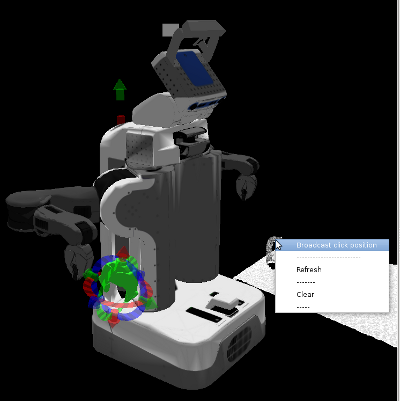

If you have taken a snapshot of the scene, you can quickly warp the gripper control over to a point on that snapshot by right-clicking on any point in the point cloud and selecting 'Broadcast click position'. When you do so, the robot estimates the normal direction at that point in the point cloud, and uses that to initialize the direction from which the gripper will try to grasp that point. If you wish to grasp the object from the side, select a point on the side; if you wish to grasp from the top, select a point on top. Normals can be noisy, so if the gripper does not appear to come from the correct direction, you can try again with a different point. Below is an example of the initialized pose (coming from the top) for a point selected on the top of a can:

When you warp the gripper to a broadcast point or let go of the gripper controls, the robot will check that pose to see if it is feasible for grasping. What that means is that it will back up that pose by 10 cm along the gripper's approach direction (which is always gripper-x, along the fingertips), to what we call the 'pregrasp' pose. The pregrasp pose needs to be entirely collision-free, both in terms of the gripper and in terms of where the arm thinks it needs to be to place the gripper there (the inverse kinematics solution for that pose). At the actual grasp pose, the gripper itself is allowed to collide with the environment, because you may want to shove other objects aside when moving along the approach from the pregrasp to the grasp; however, the forearm and the rest of the arm still need to have a feasible, collision-free set of joint angles that will place the gripper there. The robot will further check that the gripper can lift along the desired lift direction, which defaults to up/vertical (see the 'Advanced Options' section below to change the lift direction).
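The pregrasp computation described above can be sketched in a few lines. This is only an illustration of the geometry, not the package's actual code: the function names `quat_rotate` and `pregrasp_position` are hypothetical, and quaternions are assumed to be unit quaternions in (x, y, z, w) order.

```python
def quat_rotate(q, v):
    """Rotate 3-vector v by unit quaternion q given as (x, y, z, w)."""
    qx, qy, qz, qw = q
    vx, vy, vz = v
    # t = 2 * cross(q_vec, v)
    tx = 2.0 * (qy * vz - qz * vy)
    ty = 2.0 * (qz * vx - qx * vz)
    tz = 2.0 * (qx * vy - qy * vx)
    # v' = v + w * t + cross(q_vec, t)
    return (
        vx + qw * tx + (qy * tz - qz * ty),
        vy + qw * ty + (qz * tx - qx * tz),
        vz + qw * tz + (qx * ty - qy * tx),
    )


def pregrasp_position(grasp_position, grasp_orientation, backoff=0.10):
    """Back the grasp position up by `backoff` meters along gripper-x.

    The approach direction is the gripper's x axis expressed in the same
    frame as grasp_position. The orientation is unchanged at the pregrasp.
    """
    approach = quat_rotate(grasp_orientation, (1.0, 0.0, 0.0))
    return tuple(p - backoff * a for p, a in zip(grasp_position, approach))


# Example: a top-down grasp, where gripper-x points straight down (-z).
# That orientation is a 90 degree rotation about the y axis.
s = 0.5 ** 0.5
top_down = (0.0, s, 0.0, s)
print(pregrasp_position((0.6, 0.0, 0.8), top_down))
```

For the top-down grasp in the example, the pregrasp ends up about 10 cm directly above the grasp position, which is where the gripper must be entirely collision-free.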

If the grasp and pregrasp checks are all fine, the gripper will turn green. If there is a problem (either pose is out of reach or there would be a collision), it will turn red. For instance, the pose below:

is out of reach because the arm would have to come from all the way opposite the robot, which is not possible.
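As a rough mental model of how the green/red indication follows from the checks described above, consider the sketch below. The `GraspChecks` fields and the `marker_color` function are hypothetical names chosen for illustration, and treating the lift check as part of the color decision is an assumption; the real tool computes these results with its collision and inverse kinematics services.

```python
from dataclasses import dataclass


@dataclass
class GraspChecks:
    # Pregrasp: both the gripper and the arm (IK solution) must be collision-free.
    pregrasp_gripper_collision_free: bool
    pregrasp_arm_ik_collision_free: bool
    # Grasp: the gripper may touch the environment, but the rest of the arm
    # still needs a reachable, collision-free IK solution.
    grasp_arm_ik_collision_free: bool
    # Lift along the configured lift direction (assumed to affect the color).
    lift_feasible: bool


def marker_color(checks):
    """Return 'green' only when every feasibility check passed."""
    ok = (
        checks.pregrasp_gripper_collision_free
        and checks.pregrasp_arm_ik_collision_free
        and checks.grasp_arm_ik_collision_free
        and checks.lift_feasible
    )
    return "green" if ok else "red"


# An out-of-reach grasp pose has no IK solution, so the marker is red.
print(marker_color(GraspChecks(True, True, False, True)))
```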

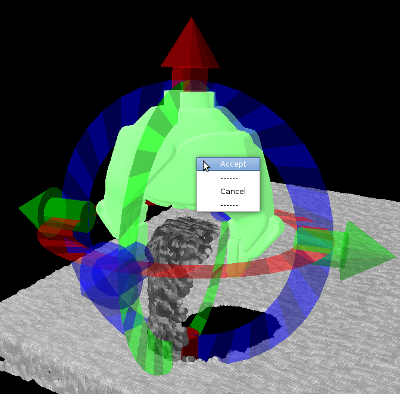

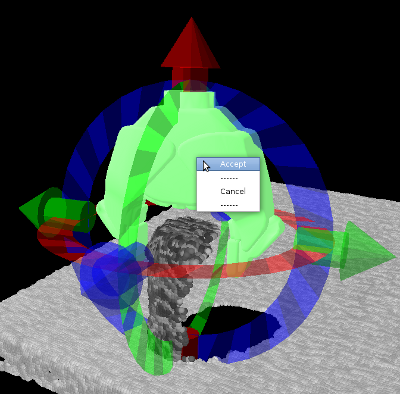

When the gripper is in an acceptable pose for grasping, and turns green to indicate that the robot thinks the pose is fine, you can right-click on the green gripper and select 'Accept' to ask the robot to execute the grasp, as shown below:

In simulation, after the robot closes the gripper around an object, it is often the case that the gripper controllers will continue to jitter because the simulation contacts are somewhat unstable; if this happens, the robot will not recognize that the grasp is complete. If the gripper closes on an object but does not lift it, and instead just sits there, you will have to lift the object manually using real-time gripper control, as described below. (If this happens, you would see a message that looks like "[ERROR] [1319857818.414735764, 239.056000000]: Hand posture controller timed out on goal" in the terminal window containing the robot manipulation launch.)

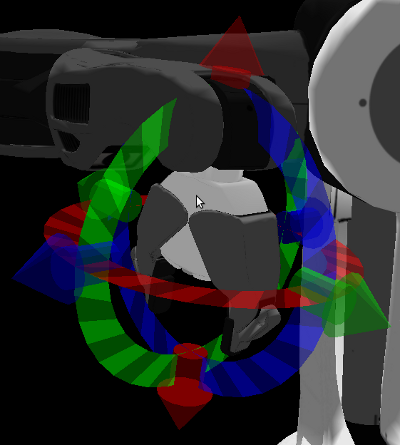

Real-time gripper control

Sometimes you want to do things besides just grasping; to move the gripper in real-time, left-click on the gripper's palm to activate real-time Cartesian control, as shown below:

Just as with the Grasp control, you can move the gripper around by left-click-dragging the rings and arrows. The difference here is that the gripper will move in real-time as you drag it around. Try to move smoothly and not too quickly; the controllers are set to allow fast movement, but when you move quickly the arm will tend to oscillate, and also Cartesian controllers are somewhat unpredictable, so the arm may not go where you expect. Left-click again on the gripper palm to make the Cartesian controls disappear.

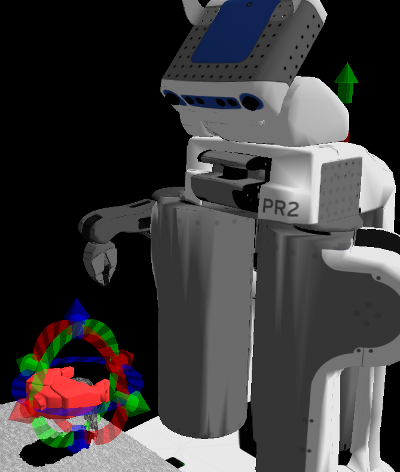

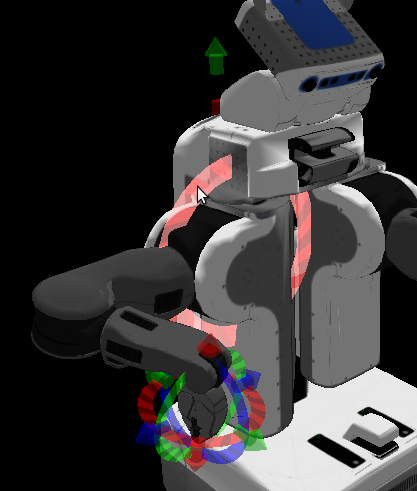

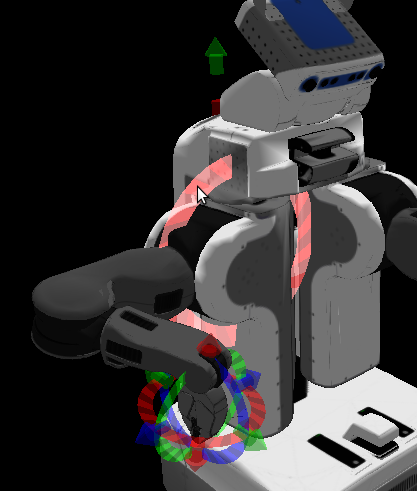

Posture control

If the elbow is in a posture that you do not like (for instance, if the elbow is down and the forearm is hitting the table), you can adjust the elbow posture using the posture ring. Left-click on the bicep of the robot to pull up the posture control, as shown below:

SLOWLY rotate the red ring to attempt to move the elbow up or down; too-fast movement can sometimes result in the robot flailing wildly. Because posture control right now is actually only trying to control one joint angle (the shoulder roll joint) while simultaneously attempting to maintain the current pose of the gripper, there are some poses/arm angle configurations for which the shoulder roll joint cannot actually rotate. If that happens, move the gripper to a different pose and try again.

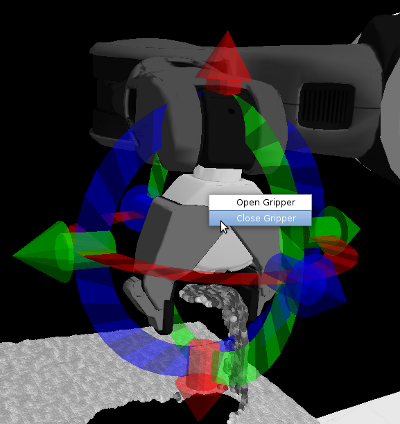

Opening and closing the gripper

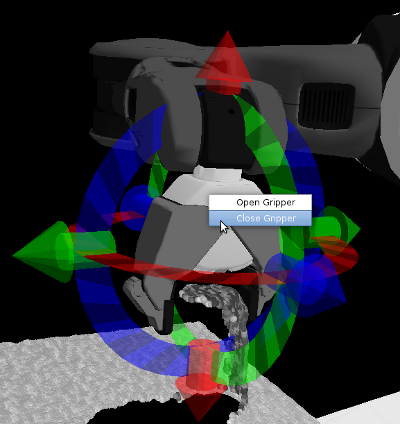

At any point, if you want to open or close a gripper, right-click on the gripper palm and select 'Open Gripper' or 'Close Gripper', as shown below:

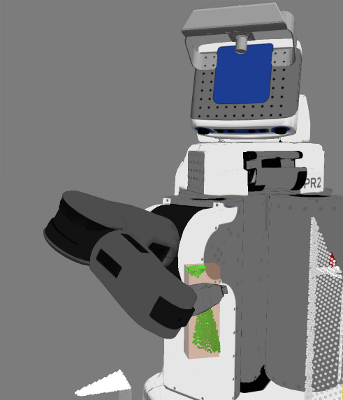

If you are trying to grasp an object, and you can't see what's going on through the robot's camera, you can often tell whether you successfully grasped by whether the gripper is closed all the way. For instance, this attempt at grasping a can failed, which I can tell because the gripper is closed all the way:

If the grasp had succeeded, the gripper would still be partially open, since once you close, the gripper is attempting to close all the way; only the object prevents it from doing so.
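The same heuristic can be written down as a simple check on the gripper opening. This is a minimal sketch: the function name and the 5 mm threshold are assumptions made for illustration, and how you obtain the opening (for example from the robot's joint state data) is left out.

```python
def grasp_likely_succeeded(gripper_opening_m, closed_threshold_m=0.005):
    """Guess grasp success from the gripper opening after a close command.

    A gripper that closed all the way (opening at or below the threshold)
    has nothing between its fingers. The threshold is a hypothetical value;
    tune it for your gripper and the objects you handle.
    """
    return gripper_opening_m > closed_threshold_m


print(grasp_likely_succeeded(0.062))  # fingers held apart by an object
print(grasp_likely_succeeded(0.001))  # fully closed, so the grasp missed
```

Very thin objects can fall below any fixed threshold, so treat the result as a hint rather than a guarantee.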

After grasping, either successfully or not successfully, it is often good to move the arm back out of the way by asking it to perform a safe move to side, as shown below:

Once the arm is out of the way, you can take a new snapshot and see what the world looks like now.

Placing

Placing is much like Grasping; if you press the 'Place' button, you can manually specify a place pose to drop an object. If the robot thinks it has successfully grasped an object (which will not happen if the gripper controllers' jitter prevented a successful grasp+lift), the place control will additionally show either the recognized object mesh or the segmented object point cloud that the robot picked up, so that you can use it to visualize where it will go when placed.

The gripper will also turn red if the place pose is not feasible/in collision, and green if it is acceptable. Accepting a place pose will cause the robot to move to the pre-place location (which by default is above the place pose), then lower the object to the place location (and past, if reactive place is on; see Advanced Options below to change this), open the gripper, and retreat along the gripper-approach direction (in the direction of the fingertips/gripper-forward).

Planned Moves

Planned Moves are like Grasping and Placing, except that the robot does not do any pre-grasp, pre-place, lift, or retreat. A pose selected using the Planned Move button needs to be out of collision entirely for the pose to turn green. Upon accepting a planned move pose, the robot will use the motion planners to plan a path to that pose.

Resetting collision representations

When the robot attempts to motion plan (to a grasp, a place, a planned move, or to one of the fixed sets of angles like the side/front/above), it uses a collision representation taken by the tilting laser rangefinder. You can look at the current, built-up collision map by clicking on the PointCloud2 checkbox (of topic /octomap_point_cloud) or the Marker checkbox (of topic /occupied_cells) in the Displays window.

If people have been near/around the robot, or if objects have moved significantly in the scene, this collision map may have become crowded with points where there isn't actually anything. You can often tell when this happens because the robot will refuse to move to poses that it would normally be able to get to. When this happens, you need to reset the collision map, by clicking on the 'Collision' tab in the Interactive Manipulation panel. Change the drop-down to 'collision map' and press the Reset button:

If you picked up a recognized/segmented object and it fell out of the hand, the robot may not realize that the object is gone; when you picked it up, if the robot thought that a successful grasp happened, it will have attached a collision representation to the gripper to avoid hitting the environment with that object. To tell the robot explicitly that there is nothing in the hand, you can change the drop-down to 'all attached objects' (which gets rid of things in both grippers), or 'arm attached objects', which will only get rid of an object attached to the arm chosen in the bottom right of the Interactive Manipulation window.

Selecting 'All collision objects' and hitting Reset will remove explicit representations for any objects in the environment that the robot has segmented, recognized, or placed.

Advanced Options

You can change the desired behavior when executing grasps by clicking on the 'Options' button in the 'Grasp and place' tab of the Interactive Manipulation window.

'Reactive grasping' and 'Reactive transport' refer to using the tactile sensors to adjust a grasp once executed; this does not yet work in simulation.

'Reactive place' also relies on the tactile sensors; if you are not using the tactile sensors, the robot will move the object past the desired place pose during a place action, which may or may not be desired behavior. (When placing on a table, this can ruin place actions that try to balance objects on other objects; uncheck this box to make the robot let go exactly where you asked it to place.)

After grasping an object, the robot performs a 'lift' move to get the object away from its supporting surface. The lift move defaults to always lifting up (vertically), but can be changed to lift 'along approach direction' by changing the drop-down menu. This is very useful when grasping out of shelves, since you usually want to back out the way you came in rather than always lifting objects up. Likewise, when placing, the robot approaches the place pose from either above (vertically) or along the gripper-forward direction (along approach direction).
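The two lift options can be illustrated with a small sketch. The function name and the mode strings are hypothetical, and the direction vectors are assumed to be unit vectors in a frame whose z axis points up.

```python
def lift_direction(mode, approach_direction):
    """Return the direction in which to move the gripper after grasping.

    mode: 'vertical' lifts straight up; 'along approach direction' backs
    out the way the gripper came in, i.e. opposite to the approach.
    approach_direction: unit vector of the gripper's approach (gripper-x).
    """
    if mode == "vertical":
        return (0.0, 0.0, 1.0)
    if mode == "along approach direction":
        return tuple(-a for a in approach_direction)
    raise ValueError("unknown lift mode: %r" % (mode,))


# Grasping out of a shelf: the gripper approached horizontally along +x,
# so backing out moves it along -x instead of up into the shelf above.
print(lift_direction("along approach direction", (1.0, 0.0, 0.0)))
```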

You can also change the distance that the robot tries to lift an object after grasping ('Try to lift after grasp') or the distance that it tries to retreat the gripper after placing an object ('Try to retreat after place'), as well as the desired/minimum approach distances for a grasp. These parameters are useful to change in highly-constrained situations, or for grasping handles and the like, when you want the robot to close but not to move when it gets there.

Movies

Here is a movie showing grasping and placing in a simulated world.

And here is a movie showing the tools described above being used in the context of using the PR2 as an assistive robot.

Package Summary

A package that allows a remote user to teleoperate the PR2 to perform grasping/manipulation, navigation, and perception tasks, using an rviz display.

- Author: Matei Ciocarlie, Kaijen Hsiao, Adam Leeper

- License: BSD

- Repository: wg-ros-pkg

Contents

- Package Summary

- Overview

- Installation

- Starting the PR2 Interactive Manipulation Tool

- Using the PR2 Interactive Manipulation Tool

- Moving arms to the side/above/front

- Taking snapshots and positioning the base for manipulation

- Moving the torso up/down

- Grasping segmented objects

- Grasping recognized objects

- Manually specifying a grasp pose

- Real-time gripper control

- Posture control

- Opening and closing the gripper

- Placing

- Planned Moves

- Modeling a point cloud object

- Resetting collision representations

- Advanced Options

- Base Navigation

- Movies

Overview

The PR2 interactive manipulation interface is designed to allow a remote user to teleoperate a PR2 robot, with tools for manipulation, perception, and navigation that have varying levels of autonomous assistance. It interfaces with the user via the rviz visualization engine. It is designed to make the task of picking up and placing an object without colliding with the environment as easy for the operator as possible.

This tutorial also includes use of the tools contained in pr2_interactive_object_detection, which allow you to perform tasks such as object segmentation and recognition. Once an object is segmented or recognized, it is often the case that it can be picked up autonomously. The results of the pr2_interactive_object_detection tools will automatically show up in the interactive manipulation controls, as described below.

The documentation on this page will show you how to use these tools to execute remote grasping and placing tasks on the PR2.

Installation

First install the pr2_interactive_manipulation suite of tools:

sudo apt-get install ros-fuerte-pr2-interactive-manipulation

Then set up the environment variables by sourcing this bash script:

source /opt/ros/fuerte/setup.bash

That will set the environment variables in your current shell only; if you want them to be loaded every time you start a new shell, put that command in your ~/.bashrc.

You should now have the roslaunch binary in your path.

Starting the PR2 Interactive Manipulation Tool

You can run the PR2 Interactive Manipulation tool either with a simulated robot or by teleoperating a real robot. In both cases you will need to start two separate components: one on the robot (or alongside the simulator), and another to control the robot and direct it to grasp or move.

Simulated robot

You need to start up the Gazebo simulation tool which models the world your robot operates in.

roslaunch manipulation_worlds pr2_table_object.launch

This should cause a "Gazebo" window to open up, displaying a PR2 robot in front of a table. You should be able to click and drag on the screen to move the viewpoint around. This window shows you what is happening in the simulated world.

Next you should launch the IM tool, connected to the simulator:

export ROBOT=sim
roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_robot.launch sim:=true nav:=true

No window will pop up after you run this command. The parameters on the command line tell it to use the simulator (sim:=true) and that you want to be able to navigate (nav:=true).

When it's ready, the last message should read "Tabletop complete node ready".

Then launch the RViz GUI, which allows you to interact graphically with your robot:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_desktop.launch sim:=true

This will open an RViz window where you can control your robot.

Teleoperating a real robot

You will have to bring up the components of the PR2 Interactive Manipulation tool both on the robot you are teleoperating and on the desktop you are using.

On the robot

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_robot.launch

Note that the default is to use a Kinect mounted on the top of the robot's head, with frames starting with /openni. For a description of some common launch args to do things like adding base navigation, using the narrow stereo instead of a Kinect, or changing the Kinect frames used, see pr2_interactive_manipulation/launch_args.

On the desktop

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_desktop.launch

Note that this launch file will bring up rviz. The PR2 Interactive Manipulation tools are implemented as rviz plugins. The launch file above will instantiate all the rviz displays that you need. For example, the launch file above will also make sure rviz also contains all the display types from the pr2_interactive_object_detection package.

If you do not use the launch file above, you can also simply start rviz and then create the displays yourself.

Also on the desktop, you can optionally attach a PS3 joystick (not the one paired with the robot) directly via a USB cable. The launch files above will also bring up the standard PR2 joystick teleop node and make it listen to the joystick connected to your desktop.

Using the PR2 Interactive Manipulation Tool

Moving arms to the side/above/front

For most manipulation tasks, we want the arms out of the way to start, so that we have a clear view of what's going on. To get the arms to go off to the side, in rviz interact mode, right-click on the bicep of the arm you wish to move, and select either 'Safe move to ...' or 'Forced move to...', and then 'side', as shown below:

Safe move refers to trying to use the motion planners to move the arm; this is useful when the arm might hit something on its way to the side. If there is clearly nothing in the way of the arm getting to the side, selecting Forced move instead will send it to the side faster, but with no consideration of possible obstacles. (Forced move is also useful in times when the motion planners for whatever reason refuse to move the arm.)

You can also ask the arms to move to the 'above' or 'front' positions; experiment with those when the robot is in free-space to see what those positions look like. 'Front' is often useful for driving around while holding objects, although because the arms are not within the footprint of the robot, you should not use autonomous navigation while doing so.

Taking snapshots and positioning the base for manipulation

If you are trying to manipulate objects on a table, you typically want the base to be fairly close to the table, and in many cases, ideally, with the front edge of the base lined up with the edge of the table. To drive the base up to the table, it is usually good to take a snapshot with the stereo camera first, by right-clicking on the head and selecting 'Take Snapshot', as shown below:

The above picture also shows approximately what the scene looks like after you've used the manual/incremental base control arrows to drive up to the edge of the table, using your taken snapshot as a guide.

Moving the torso up/down

In real life, most tables are at a height where we need the torso to be all the way up in order to reach most objects. To move the torso up or down, use the arrows on the back of the PR2:

If you right-click either arrow, you can select 'All the way', which will cause the torso to continue all the way to the top or bottom position without you continuing to hold down the button. While it is moving all the way up or down, click the opposite arrow briefly to stop it in place.

In this simulation, however, the table happens to be fairly low, and so we can manipulate objects even with the torso down.

Grasping segmented objects

Now that we've driven up to the table and adjusted our torso height appropriately, we should take a new snapshot with the head (by either right-clicking on the head and selecting 'Take Snapshot' again, or by right-clicking on the point cloud and selecting 'Refresh'). This is because the robot's driving odometry is only so accurate, and so the old point cloud is likely to be not quite exactly in the right place relative to the robot.

Once we have a new snapshot, if we are dealing with well-separated objects on a tabletop, we can use our segmentation algorithms to find object clusters to pick up autonomously. Click 'Segment' in the Interactive Object Detection window. If segments are found, it will report how many in the status bar of that window, and the segments will appear as green clusters. To pick up a green cluster, right-click on the desired cluster and select 'Pick Up' and the appropriate arm to use:

If the cluster planner successfully finds a non-colliding/feasible grasp from the top or side of the object, the robot will go ahead and execute that grasp.

Grasping recognized objects

If the object happens to be one in the object database (in this simulation, the soda cans are recognizable objects; the mug is not), after the Segment step, instead of grasping a cluster, you can additionally hit the 'Recognize' button. If the robot recognizes one of the segmented clusters as an object in its object database, it will replace the green cluster with a green object mesh for that object. You can also right-click on recognized objects and ask the robot to pick them up:

The advantage of doing so is that the robot has more varied grasps for recognized objects stored in its database, so it may be able to pick up some objects that the cluster planner may fail on.

Manually specifying a grasp pose

For objects in clutter, or for which the autonomous grasp planners fail, you can manually specify a grasp to execute. To do so, in the Interactive Manipulation window, select the arm you wish to use in the bottom right, and then click the 'Grasp' button. A disembodied representation of the gripper (that will hopefully turn green in a moment) should show up at the current gripper pose for that arm, surrounded by rings and arrows:

This disembodied gripper control can be used to specify a desired final grasp pose for the arm. Move the controls around by left-clicking and dragging the arrows to move back and forth along each axis, and rotate them around the three axes by left-click-dragging the three rings.

If you have taken a snapshot of the scene, you can quickly warp the gripper control over to a point on that snapshot by right-clicking on any point in the point cloud and selecting 'Broadcast click position'. When you do so, the robot estimates the normal direction at that point in the point cloud, and uses that to initialize the direction from which the gripper will try to grasp that point. If you wish to grasp the object from the side, select a point on the side; if you wish to grasp from the top, select a point on top. Normals can be noisy, so if the gripper does not appear to come from the correct direction, you can try again with a different point. Below is an example of the initialized pose (coming from the top) for a point selected on the top of a can:

When you warp the gripper to a broadcast point or let go of the gripper controls, the robot will check that pose to see if it is feasible for grasping. What that means is that it will back up that pose by 10 cm along the gripper's approach direction (which is always gripper-x, along the fingertips), to what we call the 'pregrasp' pose. The pregrasp pose needs to be entirely collision-free, both in terms of the gripper and in terms of where the arm thinks it needs to be to place the gripper there (the inverse kinematics solution for that pose). At the actual grasp pose, the gripper itself is allowed to collide with the environment, because you may want to shove other objects aside when moving along the approach from the pregrasp to the grasp; however, the forearm and the rest of the arm still need to have a feasible, collision-free set of joint angles that will place the gripper there. The robot will further check that the gripper can lift along the desired lift direction, which defaults to up/vertical (see the 'Advanced Options' section below to change the lift direction).
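The pregrasp computation described above can be sketched in a few lines of plain Python. This is only an illustration of the geometry, not the package's actual code: the function names, the (x, y, z, w) quaternion ordering, and the `backoff` parameter are assumptions made for the example; only the 10 cm distance and the use of the gripper's x axis as the approach direction come from the text.

```python
def rotate_by_quaternion(q, v):
    """Rotate vector v = (x, y, z) by unit quaternion q = (x, y, z, w)."""
    qx, qy, qz, qw = q
    vx, vy, vz = v
    # t = 2 * cross(q_xyz, v)
    tx = 2.0 * (qy * vz - qz * vy)
    ty = 2.0 * (qz * vx - qx * vz)
    tz = 2.0 * (qx * vy - qy * vx)
    # v' = v + w * t + cross(q_xyz, t)
    return (
        vx + qw * tx + (qy * tz - qz * ty),
        vy + qw * ty + (qz * tx - qx * tz),
        vz + qw * tz + (qx * ty - qy * tx),
    )


def pregrasp_position(grasp_position, grasp_orientation, backoff=0.10):
    """Back the grasp position up along the gripper's x axis (hypothetical helper).

    grasp_position: (x, y, z) of the grasp pose, in meters.
    grasp_orientation: unit quaternion (x, y, z, w) of the grasp pose.
    backoff: distance to back up, in meters (10 cm per the text above).
    """
    # The approach direction is the gripper's x axis expressed in the world frame.
    approach = rotate_by_quaternion(grasp_orientation, (1.0, 0.0, 0.0))
    return tuple(p - backoff * a for p, a in zip(grasp_position, approach))
```

For a top grasp, where the gripper's x axis points straight down, the resulting pregrasp position sits 10 cm directly above the grasp position; for a grasp facing forward, it sits 10 cm closer to the robot. The orientation of the pregrasp pose is the same as that of the grasp pose.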

If the grasp and pregrasp checks are all fine, the gripper will turn green. If there is a problem (either pose is out of reach or there would be a collision), it will turn red. For instance, the pose below:

is out of reach because the arm would have to come from all the way opposite the robot, which is not possible.

When the gripper is in an acceptable pose for grasping, and turns green to indicate that the robot thinks the pose is fine, you can right-click on the green gripper and select 'Accept' to ask the robot to execute the grasp, as shown below:

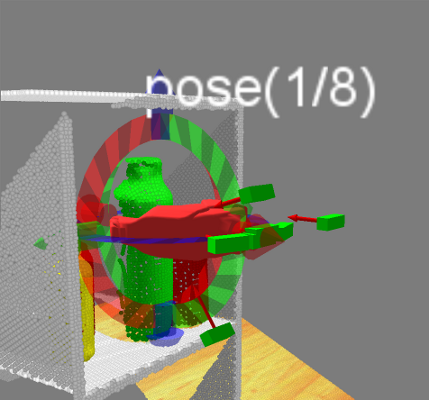

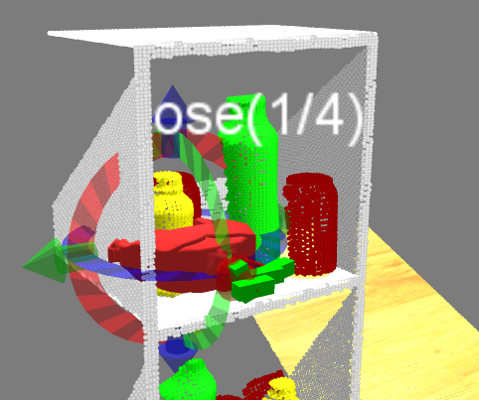

If the 'Find alternate poses' box in the Advanced Options menu is checked (which it is by default; uncheck the box to disable this feature), and the current pose is not acceptable (red), the robot will automatically find alternate, nearby poses that are acceptable. This looks something like this:

The pose(1/8) text is an interactive button that, when clicked, will cycle through the feasible poses found, or you can click on one of the green boxes with arrows that represent individual gripper poses to select it.

If no boxes appear, that means that no feasible poses are found nearby. Usually this happens when you are trying to grasp something far out of reach; try pressing the 'Draw Reachable Zones' button in the Grasp and place tab of the Interactive Manipulation menu to draw circles around the robot's shoulders whose radius is the length of the robot's arm (to the wrist). If the object in question is outside the circle when viewed overhead (the Camera Focus menu's Top button is useful for this), the robot will not be able to perform a top grasp on the object. (Side grasps can be performed on objects just barely outside the circle.)
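The reachable-zone check amounts to a point-in-circle test in the overhead view. The sketch below is a minimal illustration; the function name and the numbers in the usage note are placeholders chosen for the example, not the PR2's actual dimensions.

```python
import math


def within_reach_circle(shoulder_xy, object_xy, arm_length):
    """Return True if the object lies inside the circle drawn around a shoulder.

    shoulder_xy, object_xy: (x, y) positions seen from overhead, in meters.
    arm_length: circle radius, i.e. shoulder-to-wrist length, in meters.
    """
    dx = object_xy[0] - shoulder_xy[0]
    dy = object_xy[1] - shoulder_xy[1]
    return math.hypot(dx, dy) <= arm_length
```

For example, with a placeholder radius of 0.7 m, `within_reach_circle((0.0, -0.2), (0.5, -0.2), 0.7)` is true, while an object at `(1.0, -0.2)` falls outside the circle, so a top grasp on it would not be expected to succeed.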

For objects within reach, you can also ask the robot to provide grasp suggestions. After hitting the 'Grasp' button and then doing a 'Broadcast click position' on a point in the point cloud snapshot, you can right-click on the virtual gripper and select 'Run Grasp Planner' to ask the grasp planner to suggest possible gripper poses for grasping the objects seen in the point cloud from the top or front. This looks something like this:

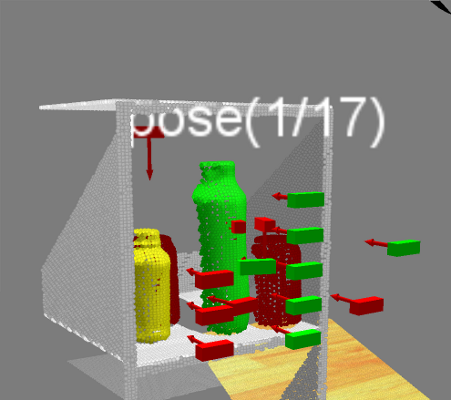

Again, click on the pose(1/17) button or left-click one of the boxes to select an appropriate gripper pose to grasp an object in the scene. Green boxes represent feasible grasps; red boxes represent grasps returned by the planner that tested as unfeasible. If you wish to grasp an object for which there only exist red boxes, clicking on one of them will trigger alternatives-finding. For instance, clicking on one of the red poses corresponding to grasping the yellow bottle on the shelf above results in something like the following:

at which point you can select one of the feasible poses and see if it will suffice to grasp the object you want. You can also switch to a mode in which doing a 'Broadcast click position' on a point in the point cloud always runs the grasp planner, by going to the Advanced Options menu and checking 'Always plan grasps'.

In simulation, after the robot closes the gripper around an object, it is sometimes the case that the gripper controllers will continue to jitter because the simulation contacts are somewhat unstable; if this happens, the robot will not recognize that the grasp is complete. If the gripper closes on an object but does not lift it, and instead just sits there, you will have to lift the object manually using real-time gripper control, as described below. (If this happens, you would see a message that looks like "[ERROR] [1319857818.414735764, 239.056000000]: Hand posture controller timed out on goal" in the terminal window containing the robot manipulation launch.)

Real-time gripper control

Sometimes you want to do things besides just grasping; to move the gripper in real-time, left-click on the gripper's palm to activate real-time Cartesian control, as shown below:

Just as with the Grasp control, you can move the gripper around by left-click-dragging the rings and arrows. The difference here is that the gripper will move in real-time as you drag it around. Try to move smoothly and not too quickly; the controllers are set to allow fast movement, but when you move quickly the arm will tend to oscillate, and also Cartesian controllers are somewhat unpredictable, so the arm may not go where you expect. Left-click again on the gripper palm to make the Cartesian controls disappear.

Posture control

If the elbow is in a posture that you do not like (for instance, if the elbow is down and the forearm is hitting the table), you can adjust the elbow posture using the posture ring. Left-click on the bicep of the robot to pull up the posture control, as shown below:

SLOWLY rotate the red ring to attempt to move the elbow up or down; too-fast movement can sometimes result in the robot flailing wildly. Because posture control right now is actually only trying to control one joint angle (the shoulder roll joint) while simultaneously attempting to maintain the current pose of the gripper, there are some poses/arm angle configurations for which the shoulder roll joint cannot actually rotate. If that happens, move the gripper to a different pose and try again.

Opening and closing the gripper

At any point, if you want to open or close a gripper, right-click on the gripper palm and select 'Open Gripper' or 'Close Gripper', as shown below:

If you are trying to grasp an object, and you can't see what's going on through the robot's camera, you can often tell whether you successfully grasped by whether the gripper is closed all the way. For instance, this attempt at grasping a can failed, which I can tell because the gripper is closed all the way:

If the grasp had succeeded, the gripper would still be partially open, since once you close, the gripper is attempting to close all the way; only the object prevents it from doing so.
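The same rule of thumb can be written as a simple check on the gripper opening. This is a hedged sketch: the function name and the 2 mm threshold are hypothetical values chosen for illustration, and the tool itself may use a different criterion.

```python
def grasp_appears_successful(gripper_opening_m, fully_closed_threshold_m=0.002):
    """Guess whether something is held, based on how far the gripper closed.

    gripper_opening_m: measured gap between the fingertips, in meters.
    fully_closed_threshold_m: gaps at or below this count as "closed all the
        way" (hypothetical value, tune for your gripper).
    """
    # A gripper that closed all the way has nothing between its fingers.
    return gripper_opening_m > fully_closed_threshold_m
```

With these placeholder numbers, an opening of 0.065 m (about the width of a can) suggests a held object, while an opening of 0.0 m suggests the grasp missed.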

After grasping, either successfully or not successfully, it is often good to move the arm back out of the way by asking it to perform a safe move to side, as shown below:

Once the arm is out of the way, you can take a new snapshot and see what the world looks like now.

Placing

Placing is much like Grasping; if you press the 'Place' button, you can manually specify a place pose to drop an object. If the robot thinks it has successfully grasped an object (which will not happen if the gripper controllers' jitter prevented a successful grasp+lift), the place control will additionally show either the recognized object mesh or the segmented object point cloud that the robot picked up, so that you can use it to visualize where it will go when placed.

The gripper will also turn red if the place pose is not feasible/in collision, and green if it is acceptable. Accepting a place pose will cause the robot to move to the pre-place location (which by default is above the place pose), then lower the object to the place location (and past, if reactive place is on; see Advanced Options below to change this), open the gripper, and retreat along the gripper-approach direction (in the direction of the fingertips/gripper-forward).

As with Grasp, if the gripper turns red and the 'Find alternate poses' box is checked in the Advanced Options menu (which it is by default), alternate place poses will be suggested, and you can select one by cycling through with the Poses button or by left-clicking on one of the green boxes-with-arrows.

Planned Moves

Planned Moves are like Grasping and Placing, except that the robot does not do any pre-grasp, pre-place, lift, or retreat. A pose selected using the Planned Move button needs to be out of collision entirely for the pose to turn green. Upon accepting a planned move pose, the robot will use the motion planners to plan a path to that pose.

Modeling a point cloud object

If you have used the Grasp button to pick up an object seen in the point cloud snapshot (that was not previously segmented or recognized), the robot will not know what it is holding. If you then try to move to side, the robot is likely to thwack the environment with the object held by the robot. To give the robot an idea of what it is holding, you can ask the robot to create a crude (bounding box) representation of the object that gets attached to the gripper for motion planning purposes. To do so, first you must manually move the object using the Cartesian rings-and-arrows control to a pose where it is not near any other objects, and where the robot can see enough of the object to get a decent bounding box from a single point cloud snapshot. Note that the Kinect has a minimum distance of about half a meter, so you have to keep the object a fair distance away from the head. Refresh the point cloud to make sure the robot can see all of two sides of the object, then click 'Model object' in the Collision tab of the Interactive Manipulation window:

If the object is too close to point cloud points that don't belong to the object in hand, you will see a brown box appear that is much too large. For instance:

If that happens, you must move the object farther away.

A proper model looks something like this:

The box shown is now attached to the gripper, and the robot can motion plan properly to place the object, or to move it around without hitting things in the world.
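To see why stray points matter, here is a minimal sketch of a bounding-box fit. It assumes an axis-aligned box for simplicity (the actual tool may fit the box differently), and the function name and sample points are made up for the example.

```python
def bounding_box(points):
    """Compute an axis-aligned bounding box for a list of (x, y, z) points.

    Returns (mins, maxs, dims): the minimum corner, the maximum corner,
    and the box dimensions along x, y, and z.
    """
    xs, ys, zs = zip(*points)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    dims = tuple(hi - lo for lo, hi in zip(mins, maxs))
    return mins, maxs, dims


# Points on the held object (made-up values, in meters).
object_points = [(0.50, -0.05, 0.80), (0.56, 0.01, 0.92), (0.53, -0.02, 0.86)]

# The same points plus one stray point from a nearby surface.
with_stray_point = object_points + [(0.90, 0.40, 0.75)]

tight_dims = bounding_box(object_points)[2]
inflated_dims = bounding_box(with_stray_point)[2]
```

A single point that does not belong to the object is enough to stretch the box along every axis, which is why the object needs to be held well away from anything else before modeling it.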

Resetting collision representations

When the robot attempts to motion plan (to a grasp, a place, a planned move, or to one of the fixed sets of angles like the side/front/above), it uses a collision representation taken by the tilting laser rangefinder. You can look at the current, built-up collision map by clicking on the PointCloud2 checkbox (of topic /octomap_point_cloud) or the Marker checkbox (of topic /occupied_cells) in the Displays window.

If people have been near/around the robot, or if objects have moved significantly in the scene, this collision map may have become crowded with points where there isn't actually anything. You can often tell when this happens because the robot will refuse to move to poses that it would normally be able to get to. When this happens, you need to reset the collision map, by clicking on the 'Collision' tab in the Interactive Manipulation panel. Change the drop-down to either 'collision map' (if your robot is holding something and you don't want it to forget about the object it's holding) or 'collision map and all objects' (if it's not holding anything), and press the Reset button:

If you picked up a recognized/segmented object or modeled an object in the gripper, and it then fell out of the hand, the robot may not realize that the object is gone; when you picked it up, if the robot thought that a successful grasp happened, it will have attached a collision representation to the gripper to avoid hitting the environment with that object. To tell the robot explicitly that there is nothing in the hand (without also resetting the collision map), you can change the drop-down to 'all attached objects' (which gets rid of things in both grippers), or 'arm attached objects', which will only get rid of an object attached to the arm chosen in the bottom right of the Interactive Manipulation window.

Selecting 'All collision objects' and hitting Reset will remove explicit representations for any objects in the environment that the robot has segmented, recognized, or placed.

Advanced Options

You can change the desired behavior when executing/selecting grasps/places/planned moves by clicking on the 'Options' button in the 'Grasp and place' tab of the Interactive Manipulation window.

'Reactive grasping' and 'Reactive transport' refer to using the tactile sensors to adjust a grasp once executed; this does not yet work in simulation.

'Reactive place' also relies on the tactile sensors; if you are not using the tactile sensors, the robot during a place action will move the object past the desired place pose, which may or may not be desired behavior. (When placing on a table, this can ruin place actions that try to balance objects on other objects; uncheck this box to make the robot let go exactly where you asked it to place.)

After grasping an object, the robot performs a 'lift' move to get the object away from its supporting surface. The lift move defaults to always lifting up (vertically), but can be changed to lift 'along approach direction' by changing the drop-down menu. This is very useful when grasping out of shelves, since you usually want to back out the way you came in rather than always lifting objects up. Likewise, when placing, the robot approaches the place pose from either above (vertically) or along the gripper-forward direction (along approach direction).
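The two lift options differ only in the direction of the lift vector. The sketch below illustrates this, assuming that lifting 'along approach direction' means backing out opposite to the direction the gripper came in; the function name, the mode strings, and the 10 cm default are hypothetical and not taken from the package.

```python
def lift_target(grasp_position, approach_direction, lift_distance=0.10, mode="vertical"):
    """Compute where the gripper ends up after the lift move.

    grasp_position: (x, y, z) of the grasp, in meters.
    approach_direction: unit vector the gripper moved along to reach the grasp.
    lift_distance: how far to lift, in meters (hypothetical default).
    mode: "vertical" to lift straight up, "approach" to back out the way
        the gripper came in.
    """
    if mode == "vertical":
        direction = (0.0, 0.0, 1.0)
    elif mode == "approach":
        # Back out: move opposite to the approach direction.
        direction = tuple(-a for a in approach_direction)
    else:
        raise ValueError("mode must be 'vertical' or 'approach'")
    return tuple(p + lift_distance * d for p, d in zip(grasp_position, direction))
```

For an object grasped from the front inside a shelf, the vertical lift would raise it toward the shelf above, while the approach-direction lift pulls it straight back out toward the robot.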

You can also change the distance that the robot tries to lift an object after grasping ('Try to lift after grasp') or the distance that it tries to retreat the gripper after placing an object ('Try to retreat after place'), as well as the desired/minimum approach distances for a grasp. These parameters are useful to change in highly-constrained situations, or for grasping handles and the like, when you want the robot to close but not to move when it gets there.

You can also switch off automatically finding alternate poses in Grasp, Place, and Planned Move modes by unchecking 'Find alternate poses'.

If you want the robot to always suggest grasp poses when you select 'Broadcast Click Position' on a snapshot point, check 'Always plan grasps'.

Finally, if you want to be able to adjust the pre-grasp gripper opening in Grasp mode (cycle through desired gripper openings by left-clicking on the virtual gripper), check 'Cycle gripper openings'. This is easy to do accidentally without noticing, and is thus usually better left unchecked until needed.

Base Navigation

Base navigation is covered (mostly) on this page: robhum/navigation. You can also right-click on the skirt of the base to use the interactive markers to either navigate (using collision avoidance and planning) or to move directly to (no collision avoidance, will charge through things) a chosen base position. If you want to also use the standard rviz navigate-to button/arrow, BE CAREFUL! The rviz button doesn't know about controller switching, which is how we switch from navigate-to to directly-move-to. If you last did a direct-move-to, the rviz button will also directly-move-to, potentially charging through things. We recommend only using the interactive marker to specify navigation goals.

Another handy tool for navigating is the 'Clear costmaps' menu item, which can also be gotten to by right-clicking on the skirt of the base. This will get rid of temporary/spurious collision map cells left by people walking by and the like.

Movies

Here is a movie showing grasping and placing in a simulated world.

And here is a movie showing the tools described above being used in the context of using the PR2 as an assistive robot.

Package Summary

A package that allows a remote user to teleoperate the PR2 to perform grasping/manipulation, navigation, and perception tasks, using an rviz display.

- Author: Matei Ciocarlie, Kaijen Hsiao, Adam Leeper

- License: BSD

- Repository: wg-ros-pkg

- Source: git https://github.com/ros-interactive-manipulation/pr2_object_manipulation.git

Contents

- Package Summary

- Overview

- Installation

- Starting the PR2 Interactive Manipulation Tool

- Using the PR2 Interactive Manipulation Tool

- Moving arms to the side/above/front

- Taking snapshots and positioning the base for manipulation

- Moving the torso up/down

- Grasping segmented objects

- Grasping recognized objects

- Manually specifying a grasp pose

- Real-time gripper control

- Posture control

- Opening and closing the gripper

- Placing

- Planned Moves

- Modeling a point cloud object

- Resetting collision representations

- Advanced Options

- Base Navigation

- Movies

Overview

The PR2 interactive manipulation interface is designed to allow a remote user to teleoperate a PR2 robot, with tools for manipulation, perception, and navigation that have varying levels of autonomous assistance. It interfaces with the user via the rviz visualization engine. It is designed to make the task of picking up and placing an object without colliding with the environment as easy for the operator as possible.

This tutorial also includes use of the tools contained in pr2_interactive_object_detection, which allow you to perform tasks such as object segmentation and recognition. Once an object is segmented or recognized, it is often the case that it can be picked up autonomously. The results of the pr2_interactive_object_detection tools will automatically show up in the interactive manipulation controls, as described below.

The documentation on this page will show you how to use these tools to execute remote grasping and placing tasks on the PR2.

Installation

First install the pr2_interactive_manipulation suite of tools:

sudo apt-get install ros-groovy-pr2-interactive-manipulation

IMPORTANT: arm_navigation, on which pr2_interactive_manipulation is currently based, depends on a dry version of geometric_shapes contained in that stack. This package is incompatible with the wet version used by MoveIt!. If you have ros-groovy-geometric-shapes installed, you must do

sudo apt-get remove ros-groovy-geometric-shapes

in order for arm_navigation and thus pr2_interactive_manipulation to work.

Then set up the environment variables by sourcing this bash script:

source /opt/ros/groovy/setup.bash

That will set the environment variables in your current shell only; if you want them to be loaded every time you start a new shell, put that command in your ~/.bashrc.

You should now have the roslaunch binary in your path.

Starting the PR2 Interactive Manipulation Tool

You can either run the PR2 Interactive Manipulation tool using a simulated robot, or by teleoperating a real robot. You will need to start up two separate components: one on the robot or running the simulator, and another component to control the robot and direct it to grasp or move.

Simulated robot

You need to start up the Gazebo simulation tool which models the world your robot operates in.

roslaunch pr2_gazebo pr2_table_object.launch

This should cause a "Gazebo" window to open up, displaying a PR2 robot in front of a table. You should be able to click and drag on the screen to move the viewpoint around. This window shows you what is happening in the simulated world.

Next you should launch the IM tool, connected to the simulator:

export ROBOT=sim
roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_robot.launch sim:=true nav:=true

No window will pop up after you run this command. The parameters on the command line tell it to use the simulator (sim:=true) and that you want to be able to navigate (nav:=true).

When it's ready, the last message should read "Tabletop complete node ready".

Then launch the RViz GUI, which allows you to interact graphically with your robot:

roslaunch pr2_interactive_manipulation_frontend pr2_interactive_manipulation_desktop.launch sim:=true nav:=true

This will open an RViz window where you can control your robot.

Teleoperating a real robot

You will have to bring up the components of the PR2 Interactive Manipulation tool both on the robot you are teleoperating and on the desktop you are using.

On the robot

Start the following launch file:

roslaunch pr2_interactive_manipulation pr2_interactive_manipulation_robot.launch nav:=true

Note that the default is to use a Kinect mounted on the top of the robot's head, with frames starting with /openni. For a description of some common launch args to do things like adding base navigation, using the narrow stereo instead of a Kinect, or changing the Kinect frames used, see pr2_interactive_manipulation/launch_args.

On the desktop

Start the following launch file:

roslaunch pr2_interactive_manipulation_frontend pr2_interactive_manipulation_desktop.launch nav:=true

Note that this launch file will bring up rviz. The PR2 Interactive Manipulation tools are implemented as rviz plugins. The launch file above will instantiate all the rviz displays that you need. For example, the launch file above will also make sure rviz also contains all the display types from the pr2_interactive_object_detection package.

If you do not use the launch file above, you can also simply start rviz and then create the displays yourself.

Also on the desktop, you can optionally attach a PS3 joystick (not the one paired with the robot) directly via a USB cable. The launch files above will also bring up the standard PR2 joystick teleop node and make it listen to the joystick connected to your desktop.

Using the PR2 Interactive Manipulation Tool

Moving arms to the side/above/front

For most manipulation tasks, we want the arms out of the way to start, so that we have a clear view of what's going on. To get the arms to go off to the side, in rviz interact mode, right-click on the bicep of the arm you wish to move, and select either 'Safe move to ...' or 'Forced move to...', and then 'side', as shown below:

Safe move refers to trying to use the motion planners to move the arm; this is useful when the arm might hit something on its way to the side. If there is clearly nothing in the way of the arm getting to the side, selecting Forced move instead will send it to the side faster, but with no consideration of possible obstacles. (Forced move is also useful in times when the motion planners for whatever reason refuse to move the arm.)

You can also ask the arms to move to the 'above' or 'front' positions; experiment with those when the robot is in free-space to see what those positions look like. 'Front' is often useful for driving around while holding objects, although because the arms are not within the footprint of the robot, you should not use autonomous navigation while doing so.

Taking snapshots and positioning the base for manipulation

If you are trying to manipulate objects on a table, you typically want the base to be fairly close to the table, and in many cases, ideally, with the front edge of the base lined up with the edge of the table. To drive the base up to the table, it is usually good to take a snapshot with the stereo camera first, by right-clicking on the head and selecting 'Take Snapshot', as shown below:

The above picture also shows approximately what the scene looks like after you've used the manual/incremental base control arrows to drive up to the edge of the table, using your taken snapshot as a guide.

Moving the torso up/down

In real life, most tables are at a height where we need the torso to be all the way up in order to reach most objects. To move the torso up or down, use the arrows on the back of the PR2:

If you right-click either arrow, you can select 'All the way', which will cause the torso to continue all the way to the top or bottom position without you continuing to hold down the button. While it is moving all the way up or down, click the opposite arrow briefly to stop it in place.

In this simulation, however, the table happens to be fairly low, and so we can manipulate objects even with the torso down.

Grasping segmented objects

Now that we've driven up to the table and adjusted the torso height appropriately, we should take a new snapshot with the head, either by right-clicking on the head and selecting 'Take Snapshot' again or by right-clicking on the point cloud and selecting 'Refresh'. This is necessary because the robot's driving odometry is only approximately accurate, so the old point cloud is likely to be slightly misplaced relative to the robot.

Once we have a new snapshot, if we are dealing with well-separated objects on a tabletop, we can use our segmentation algorithms to find object clusters to pick up autonomously. Click 'Segment' in the Interactive Object Detection window. If segments are found, it will report how many in the status bar of that window, and the segments will appear as green clusters. To pick up a green cluster, right-click on the desired cluster and select 'Pick Up' and the appropriate arm to use:

If the cluster planner successfully finds a non-colliding/feasible grasp from the top or side of the object, the robot will go ahead and execute that grasp.

Grasping recognized objects

If the object happens to be one in the object database (in this simulation, the soda cans are recognizable objects; the mug is not), after the Segment step, instead of grasping a cluster, you can additionally hit the 'Recognize' button. If the robot recognizes one of the segmented clusters as an object in its object database, it will replace the green cluster with a green object mesh for that object. You can also right-click on recognized objects and ask the robot to pick them up:

The advantage of doing so is that the robot has more varied grasps for recognized objects stored in its database, so it may be able to pick up some objects that the cluster planner may fail on.

Manually specifying a grasp pose

For objects in clutter, or for which the autonomous grasp planners fail, you can manually specify a grasp to execute. To do so, in the Interactive Manipulation window, select the arm you wish to use in the bottom right, and then click the 'Grasp' button. A disembodied representation of the gripper (that will hopefully turn green in a moment) should show up at the current gripper pose for that arm, surrounded by rings and arrows:

This disembodied gripper control can be used to specify a desired final grasp pose for the arm. Move the controls around by left-clicking and dragging the arrows to move back and forth along each axis, and rotate them around the three axes by left-click-dragging the three rings.

If you have taken a snapshot of the scene, you can quickly warp the gripper control over to a point on that snapshot by right-clicking on any point in the point cloud and selecting 'Broadcast click position'. When you do so, the robot estimates the normal direction at that point in the point cloud, and uses that to initialize the direction from which the gripper will try to grasp that point. If you wish to grasp the object from the side, select a point on the side; if you wish to grasp from the top, select a point on top. Normals can be noisy, so if the gripper does not appear to come from the correct direction, you can try again with a different point. Below is an example of the initialized pose (coming from the top) for a point selected on the top of a can:

When you warp the gripper to a broadcast point or let go of the gripper controls, the robot checks whether that pose is feasible for grasping. To do so, it backs the pose up by 10 cm along the gripper's approach direction (which is always gripper-x, along the fingertips) to what we call the 'pregrasp' pose. The pregrasp pose needs to be entirely collision-free, both for the gripper and for the arm configuration needed to place the gripper there (the inverse kinematics solution for that pose). At the actual grasp pose, the gripper itself is allowed to collide with the environment, because you may want to shove other objects aside when moving along the approach from the pregrasp to the grasp; however, the forearm and the rest of the arm still need a feasible, collision-free set of joint angles that will place the gripper there. The robot further checks that the gripper can lift along the desired lift direction, which defaults to up/vertical (see the 'Advanced Options' section below to change the lift direction).
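The pregrasp offset described above can be sketched in a few lines of Python. This is only an illustration, not the package's implementation: the function names, the (x, y, z, w) quaternion ordering, and the example pose are assumptions. Only the 10 cm back-off along the gripper x-axis comes from the text.

```python
import math

def gripper_x_axis(orientation):
    """Return the gripper x-axis (the approach direction) in the world frame.

    `orientation` is a unit quaternion in (x, y, z, w) order (an assumed
    convention for this sketch).
    """
    x, y, z, w = orientation
    # First column of the rotation matrix built from the quaternion.
    return (
        1.0 - 2.0 * (y * y + z * z),
        2.0 * (x * y + z * w),
        2.0 * (x * z - y * w),
    )

def pregrasp_position(grasp_position, grasp_orientation, backoff=0.10):
    """Back the grasp position up by `backoff` meters along the approach axis."""
    axis = gripper_x_axis(grasp_orientation)
    return tuple(p - backoff * a for p, a in zip(grasp_position, axis))

# Hypothetical top grasp: a 90 degree rotation about y points gripper-x straight down.
s = math.sqrt(0.5)
top_grasp_orientation = (0.0, s, 0.0, s)
pregrasp = pregrasp_position((0.6, 0.0, 0.75), top_grasp_orientation)
```

For this example pose, the pregrasp position ends up about 10 cm directly above the grasp position, which is the point from which the gripper would descend along its approach direction. The orientation is the same at both poses.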

If the grasp and pregrasp checks are all fine, the gripper will turn green. If there is a problem (either pose is out of reach or there would be a collision), it will turn red. For instance, the pose below:

is out of reach because the arm would have to come from all the way opposite the robot, which is not possible.

When the gripper is in an acceptable pose for grasping, and turns green to indicate that the robot thinks the pose is fine, you can right-click on the green gripper and select 'Accept' to ask the robot to execute the grasp, as shown below:

If the 'Find alternate poses' box in the Advanced Options menu is checked (which it is by default; uncheck the box to disable this feature), and the current pose is not acceptable (red), the robot will automatically find alternate, nearby poses that are acceptable. This looks something like this:

The pose(1/8) text is an interactive button that, when clicked, will cycle through the feasible poses found, or you can click on one of the green boxes with arrows that represent individual gripper poses to select it.

If no boxes appear, no feasible poses were found nearby. This usually happens when you are trying to grasp something far out of reach. Try pressing the 'Draw Reachable Zones' button in the Grasp and place tab of the Interactive Manipulation menu; this draws circles around the robot's shoulders whose radius is the length of the robot's arm (to the wrist). You will need to enable the Grasp Markers under Debug Displays to see them. If the object in question is outside the circle when viewed from overhead (the Camera Focus menu's Top button is useful for this), the robot will not be able to perform a top grasp on the object. (Side grasps can be performed on objects just barely outside the circle.)
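The reachable-zone circle amounts to a simple overhead distance test, sketched below. The function name and all numeric values are hypothetical placeholders, not actual PR2 dimensions; the sketch only illustrates the rule that an object outside the circle cannot be grasped from the top.

```python
import math

def inside_reachable_zone(object_xy, shoulder_xy, arm_length):
    """Return True if the object lies within the overhead circle around the shoulder.

    Only the horizontal (x, y) distance matters, since the circle is
    judged from a top-down view.
    """
    dx = object_xy[0] - shoulder_xy[0]
    dy = object_xy[1] - shoulder_xy[1]
    return math.hypot(dx, dy) <= arm_length

# Placeholder values for illustration only.
shoulder = (0.0, 0.0)
arm_length = 0.7  # meters, shoulder to wrist (assumed value)

near_can = inside_reachable_zone((0.5, 0.2), shoulder, arm_length)  # inside the circle
far_can = inside_reachable_zone((0.9, 0.3), shoulder, arm_length)   # outside the circle
```

An object that fails this test may still be reachable with a side grasp if it is only just outside the circle, as noted above.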

For objects within reach, you can also ask the robot to provide grasp suggestions. After hitting the 'Grasp' button and then doing a 'Broadcast click position' on a point in the point cloud snapshot, you can right-click on the virtual gripper and select 'Run Grasp Planner' to ask the grasp planner to suggest possible gripper poses for grasping the objects seen in the point cloud from the top or front. This looks something like this:

Again, click on the pose(1/17) button or left-click one of the boxes to select an appropriate gripper pose to grasp an object in the scene. Green boxes represent feasible grasps; red boxes represent grasps returned by the planner that tested as infeasible. If you wish to grasp an object for which only red boxes exist, clicking on one of them will trigger alternatives-finding. For instance, clicking on one of the red poses corresponding to grasping the yellow bottle on the shelf above results in something like the following:

at which point you can select one of the feasible poses and see if it will suffice to grasp the object you want. You can also switch to a mode in which doing a 'Broadcast click position' on a point in the point cloud always runs the grasp planner, by going to the Advanced Options menu and checking 'Always plan grasps'.

In simulation, after the robot closes the gripper around an object, it is sometimes the case that the gripper controllers will continue to jitter because the simulation contacts are somewhat unstable; if this happens, the robot will not recognize that the grasp is complete. If the gripper closes on an object but does not lift it, and instead just sits there, you will have to lift the object manually using real-time gripper control, as described below. (If this happens, you would see a message that looks like "[ERROR] [1319857818.414735764, 239.056000000]: Hand posture controller timed out on goal" in the terminal window containing the robot manipulation launch.)

Real-time gripper control

Sometimes you want to do things besides just grasping; to move the gripper in real-time, left-click on the gripper's palm to activate real-time Cartesian control, as shown below:

Just as with the Grasp control, you can move the gripper around by left-click-dragging the rings and arrows. The difference here is that the gripper will move in real-time as you drag it around. Try to move smoothly and not too quickly: the controllers are set to allow fast movement, but the arm tends to oscillate when you move quickly. Cartesian controllers are also somewhat unpredictable, so the arm may not go where you expect. Left-click again on the gripper palm to make the Cartesian controls disappear.

Posture control

If the elbow is in a posture that you do not like (for instance, if the elbow is down and the forearm is hitting the table), you can adjust the elbow posture using the posture ring. Left-click on the bicep of the robot to pull up the posture control, as shown below:

SLOWLY rotate the red ring to attempt to move the elbow up or down; too-fast movement can sometimes result in the robot flailing wildly. Because posture control right now is actually only trying to control one joint angle (the shoulder roll joint) while simultaneously attempting to maintain the current pose of the gripper, there are some poses/arm angle configurations for which the shoulder roll joint cannot actually rotate. If that happens, move the gripper to a different pose and try again.

Opening and closing the gripper

At any point, if you want to open or close a gripper, right-click on the gripper palm and select 'Open Gripper' or 'Close Gripper', as shown below:

If you are trying to grasp an object, and you can't see what's going on through the robot's camera, you can often tell whether the grasp succeeded by checking whether the gripper is closed all the way. For instance, this attempt at grasping a can failed, which is evident because the gripper is fully closed:

If the grasp had succeeded, the gripper would still be partially open, since once you close, the gripper is attempting to close all the way; only the object prevents it from doing so.
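The same check can be expressed as a threshold on the gripper opening. This is a minimal sketch: the function name and the 5 mm threshold are assumptions chosen for illustration, and a real system would read the opening from the gripper's joint state.

```python
def grasp_looks_successful(gripper_opening_m, closed_threshold_m=0.005):
    """Guess whether an object is held, based on how far the gripper closed.

    If the gripper closed (almost) all the way, nothing stopped it, so the
    grasp most likely failed. The threshold is a hypothetical value.
    """
    return gripper_opening_m > closed_threshold_m

empty_hand = grasp_looks_successful(0.0)     # fully closed: grasp failed
holding_can = grasp_looks_successful(0.06)   # stopped partway: something is held
```

This heuristic cannot detect very thin objects, since the gripper closes almost completely around them as well.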

After grasping, whether or not the grasp succeeded, it is often good to move the arm back out of the way by asking it to perform a safe move to side, as shown below:

Once the arm is out of the way, you can take a new snapshot and see what the world looks like now.

Placing

Placing is much like Grasping; if you press the 'Place' button, you can manually specify a place pose to drop an object. If the robot thinks it has successfully grasped an object (which will not happen if the gripper controllers' jitter prevented a successful grasp+lift), the place control will additionally show either the recognized object mesh or the segmented object point cloud that the robot picked up, so that you can use it to visualize where it will go when placed.

The gripper will also turn red if the place pose is not feasible/in collision, and green if it is acceptable. Accepting a place pose will cause the robot to move to the pre-place location (which by default is above the place pose), then lower the object to the place location (and past, if reactive place is on; see Advanced Options below to change this), open the gripper, and retreat along the gripper-approach direction (in the direction of the fingertips/gripper-forward).

As with Grasp, if the gripper turns red and the 'Find alternate poses' box is checked in the Advanced Options menu (which it is by default), alternate place poses will be suggested, and you can select one by cycling through with the Poses button or by left-clicking on one of the green boxes-with-arrows.

Planned Moves

Planned Moves are like Grasping and Placing, except that the robot does not do any pre-grasp, pre-place, lift, or retreat. A pose selected using the Planned Move button needs to be out of collision entirely for the pose to turn green. Upon accepting a planned move pose, the robot will use the motion planners to plan a path to that pose.

Modeling a point cloud object

If you have used the Grasp button to pick up an object seen in the point cloud snapshot (that was not previously segmented or recognized), the robot will not know what it is holding. If you then try to move to side, the robot is likely to thwack the environment with the held object. To give the robot an idea of what it is holding, you can ask it to create a crude (bounding box) representation of the object that gets attached to the gripper for motion planning purposes. To do so, you must first manually move the object, using the Cartesian rings-and-arrows control, to a pose where it is not near any other objects and where the robot can see enough of the object to get a decent bounding box from a single point cloud snapshot. Note that the Kinect has a minimum distance of about half a meter, so you have to keep the object a fair distance away from the head. Refresh the point cloud to make sure the robot can see all of two sides of the object, then click 'Model object' in the Collision tab of the Interactive Manipulation window:

If the object is too close to point cloud points that don't belong to the object in hand, you will see a brown box appear that is much too large. For instance:

If that happens, you must move the object farther away.

A proper model looks something like this:

The box shown is now attached to the gripper, and the robot can motion plan properly to place the object, or to move it around without hitting things in the world.
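To see why nearby points that do not belong to the object inflate the box, consider a minimal bounding-box sketch. It assumes an axis-aligned box and a plain list of (x, y, z) points; the tool's actual fitting method is not described here and may differ (for example, it may fit an oriented box).

```python
def bounding_box(points):
    """Return (center, size) of the axis-aligned box enclosing all points."""
    xs, ys, zs = zip(*points)
    mins = (min(xs), min(ys), min(zs))
    maxs = (max(xs), max(ys), max(zs))
    center = tuple((lo + hi) / 2.0 for lo, hi in zip(mins, maxs))
    size = tuple(hi - lo for lo, hi in zip(mins, maxs))
    return center, size

# Hypothetical points on a small held object (meters).
object_points = [(0.00, 0.00, 0.00), (0.06, 0.00, 0.00),
                 (0.00, 0.06, 0.12), (0.06, 0.06, 0.12)]
clean_center, clean_size = bounding_box(object_points)

# A single stray point from a nearby surface stretches the box along x.
stray_center, stray_size = bounding_box(object_points + [(0.50, 0.03, 0.05)])
```

With the stray point included, the box grows from the object's own width to the full distance between the object and the stray point along x, which corresponds to the much-too-large box described above.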

Resetting collision representations